Data Science Dashboard & Workflow¶

Data Science Dashboard¶

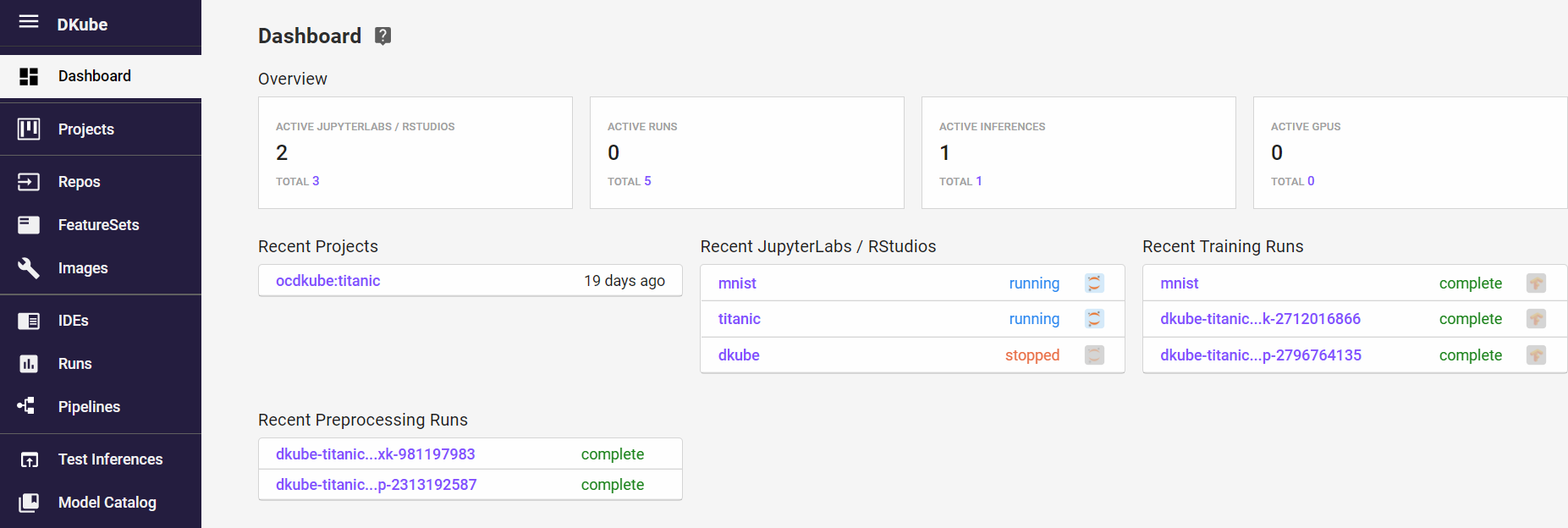

The Data Science dashboard provides an overview of the current state of the workflow.

From the dashboard, the user can go directly to recent jobs.

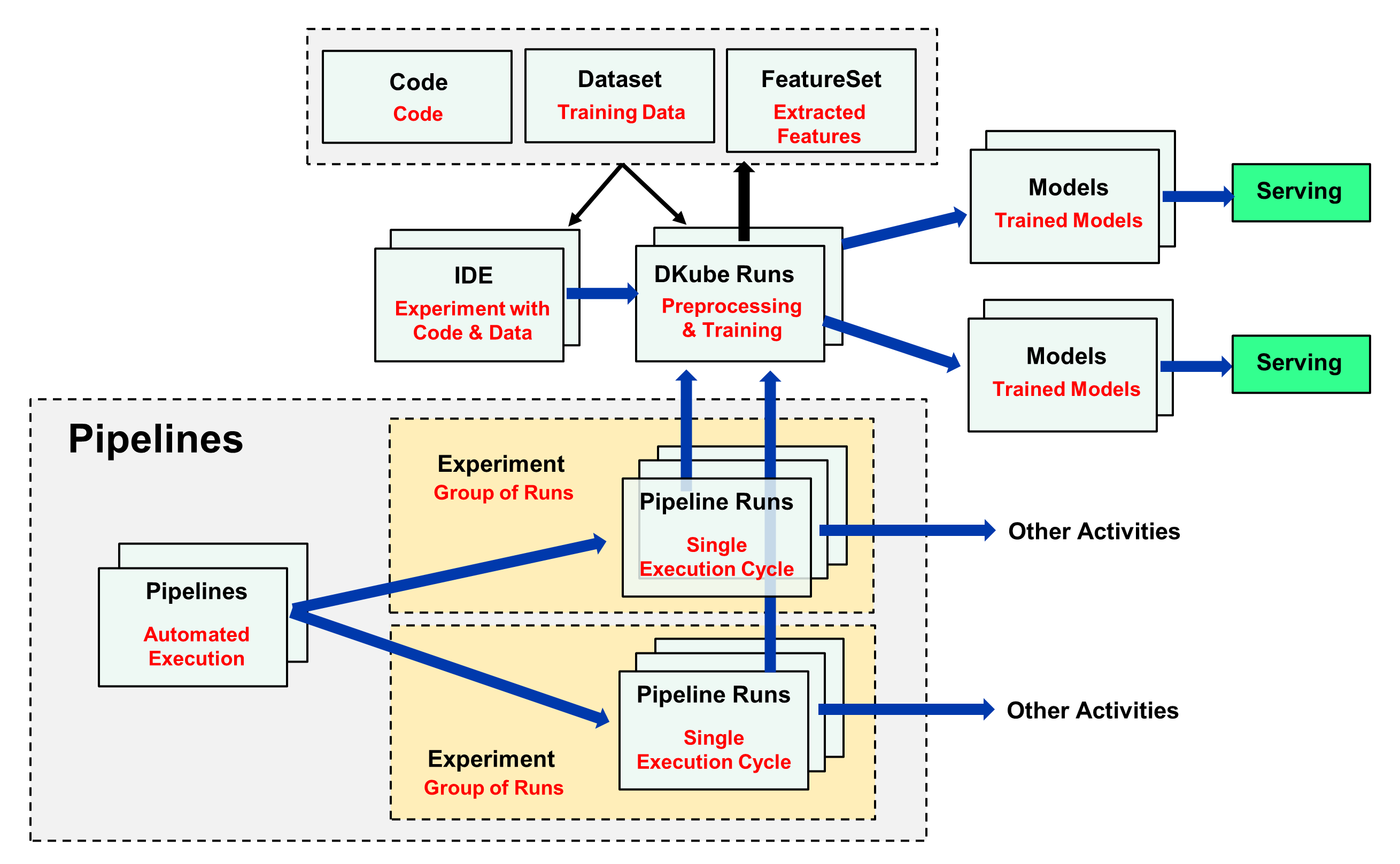

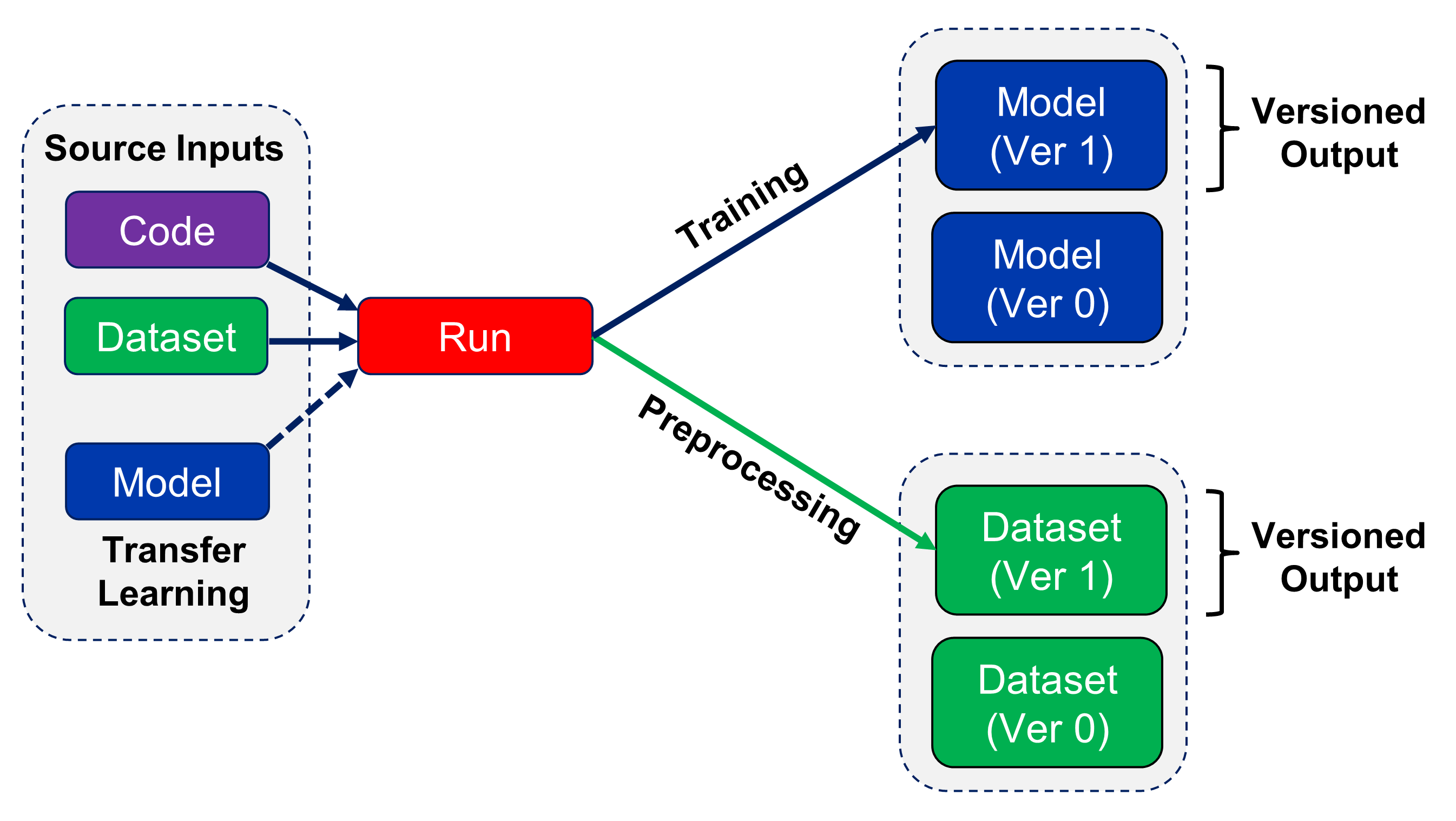

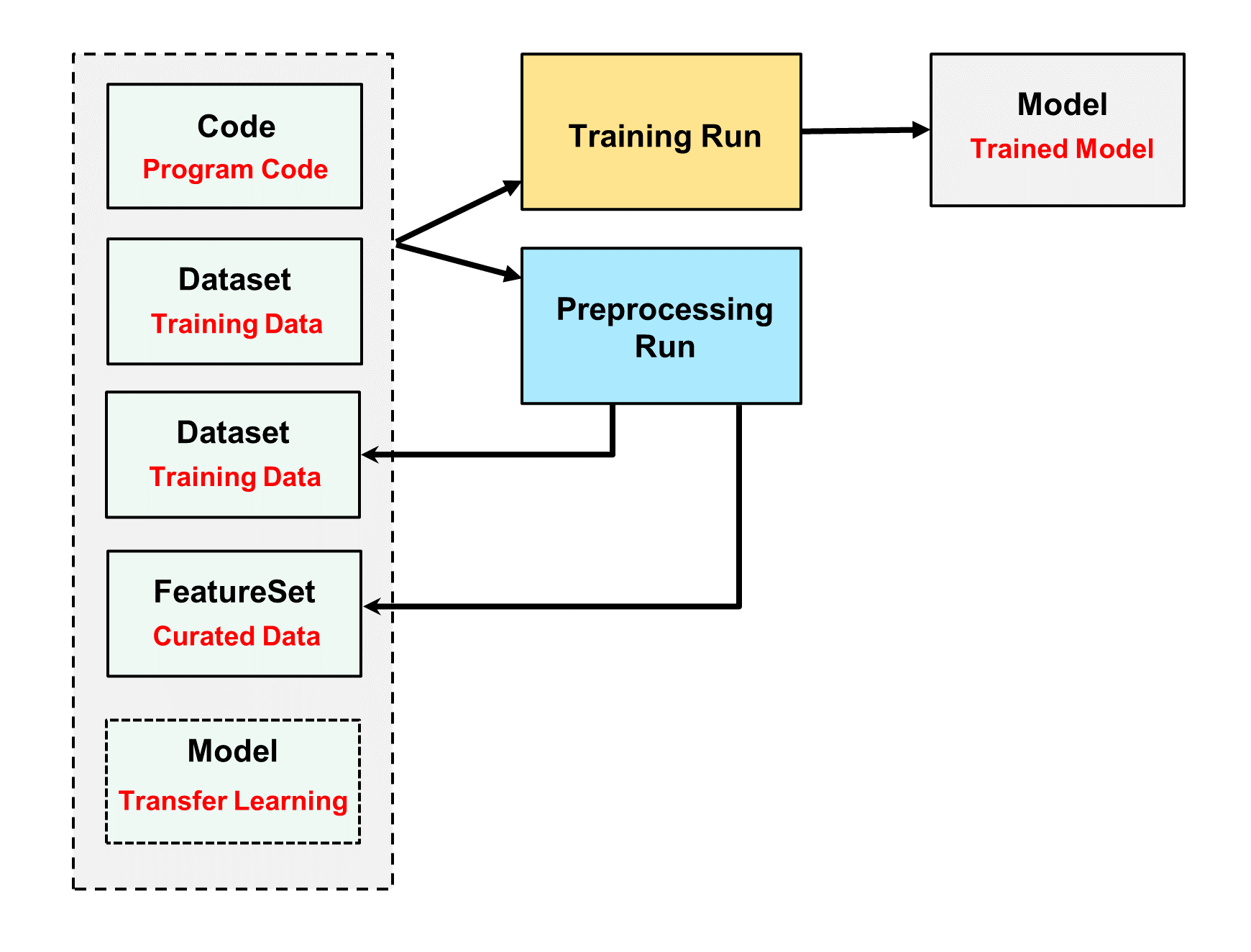

Data Science Workflow¶

| Function | Description |
|---|---|
| Code | Folder containing the program code for experimentation and training |
| Dataset | Folder containing the datasets for training |
| FeatureSet | Extracted Dataset |
| Model | Trained model that can be used for inference or transfer learning |
| DKube Run | Execution of DKube training or preprocessing code on dataset |
| IDE | JupyterLab or RStudio |
| Pipeline | Automated execution of a set of steps using Kubeflow pipelines |
| Pipeline Run | Single execution run of a Kubeflow Pipeline |
| Experiment | Group of Pipeline runs, used to enable better run management |

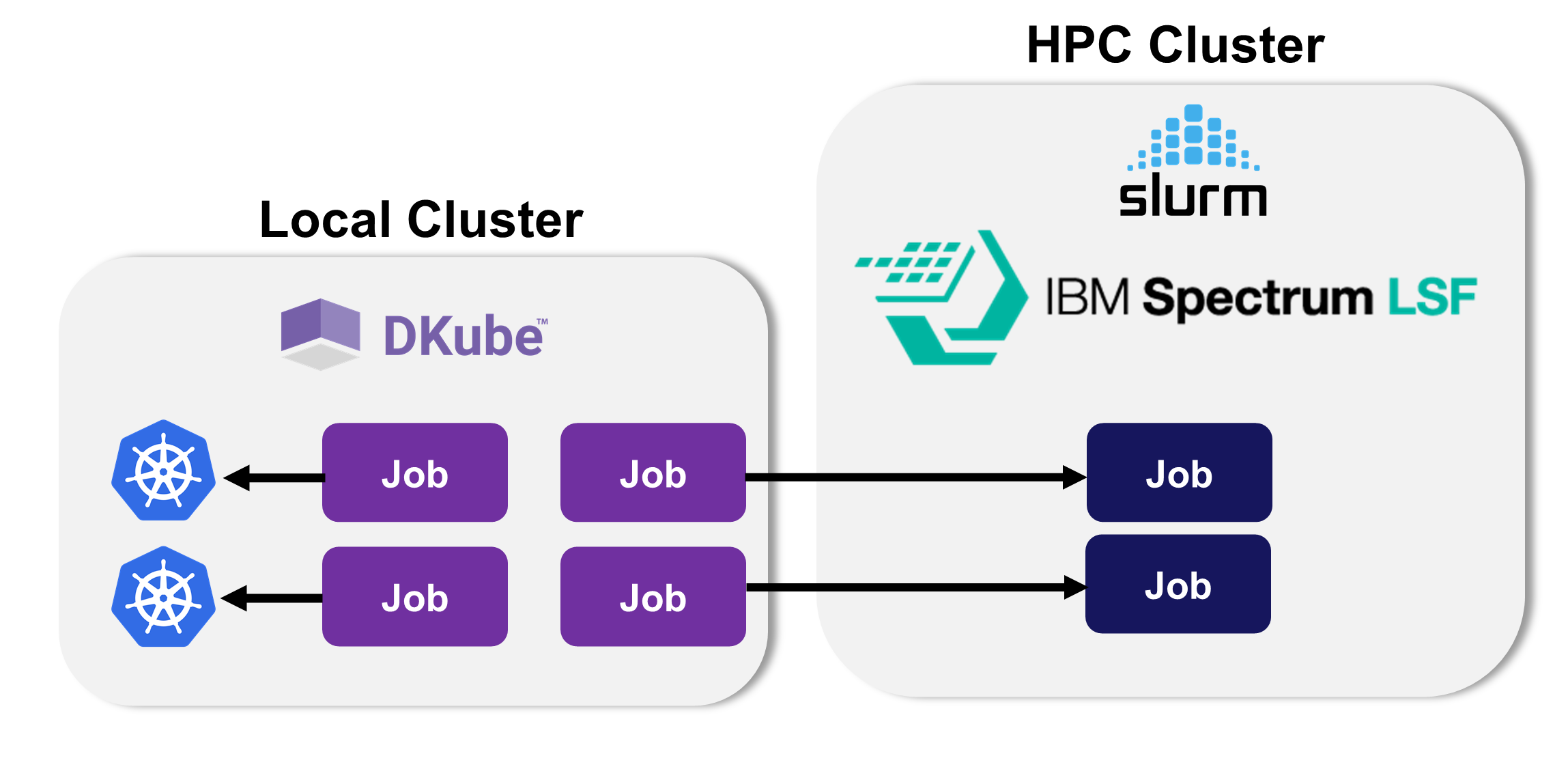

Multicluster Operation¶

DKube can execute Jobs on the local DKube cluster or send them to a remote cluster for execution. The workflow is as follows:

The external cluster is added and maintained as described at Multicluster Management

The user logs into the remote cluster (if required) as described below

When a Run is submitted, the user decides if it should be submitted to the local cluster or the remote cluster. This is described at Configuration Submission Screen

If the execution is directed at the remote cluster, the appropriate plug-in translates the data and execution image to run on the remote cluster

The execution is performed on the remote cluster, and the status of the Run is sent back to the local cluster

All metadata remains on the local cluster

External Cluster Access¶

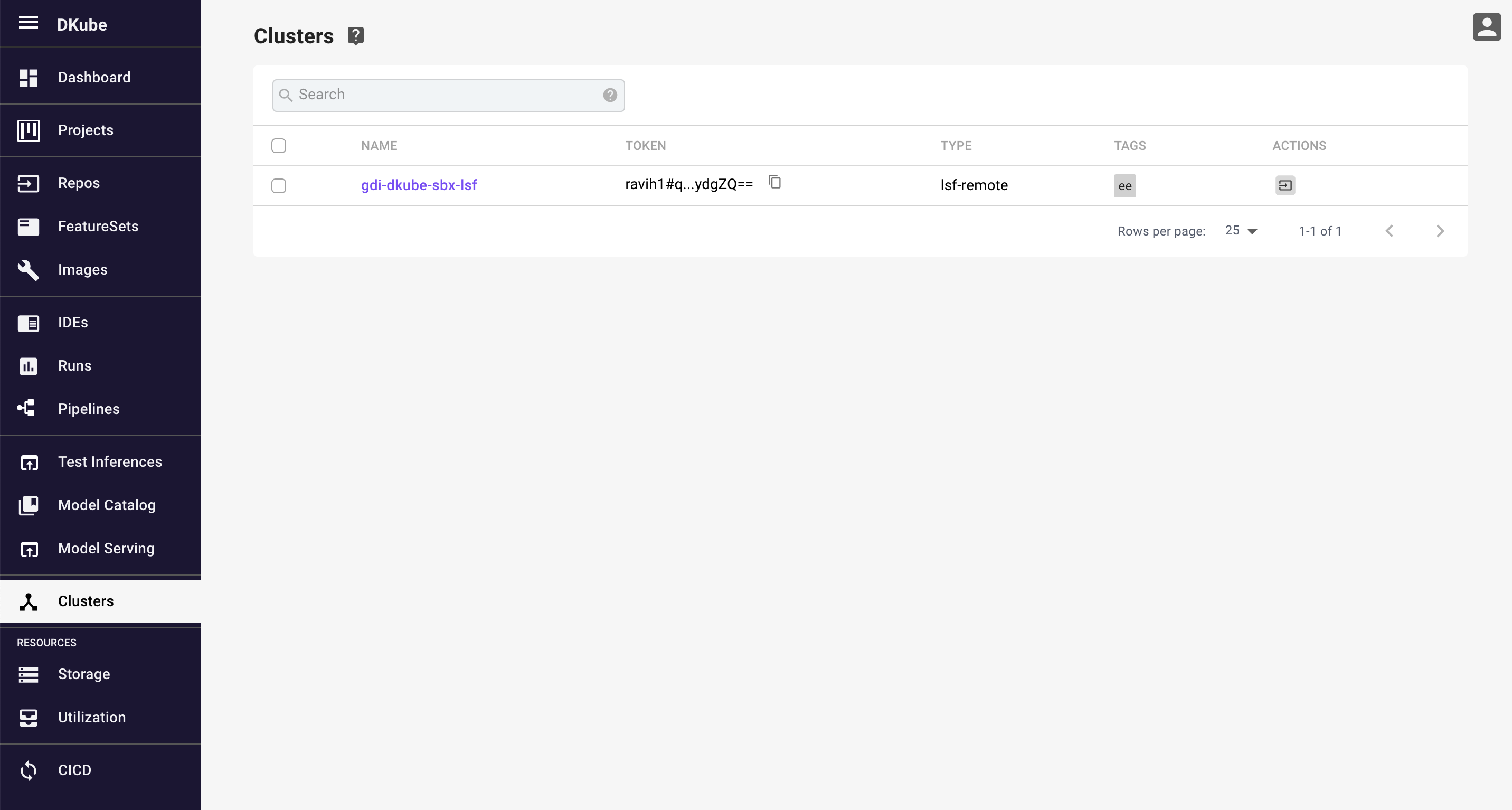

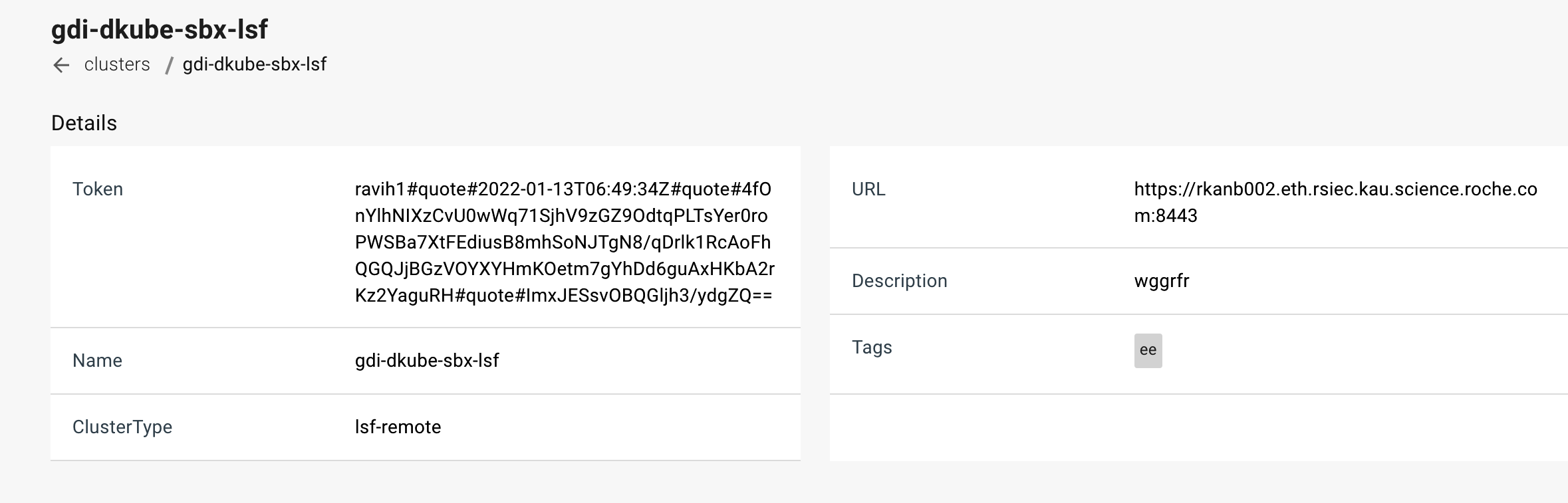

After the external cluster has been added by the Operator, it is available from the Data Science menu. A list of all of the external clusters is provided. More details on the cluster can be viewed by selecting the cluster name.

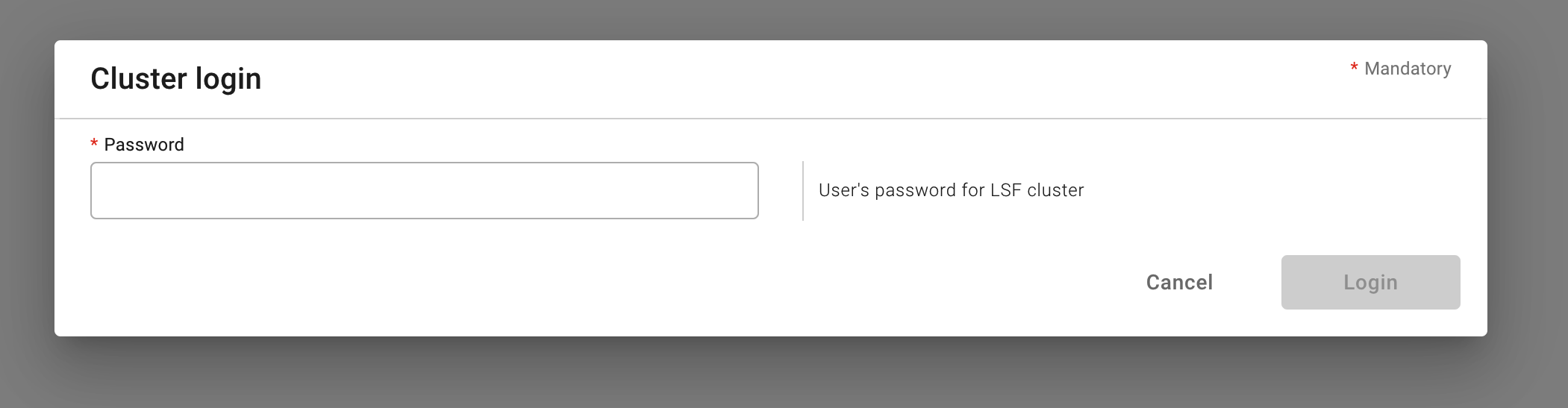

If the Run submission screen indicates that a login is required, selecting the login icon brings up a popup where the credentials can be entered.

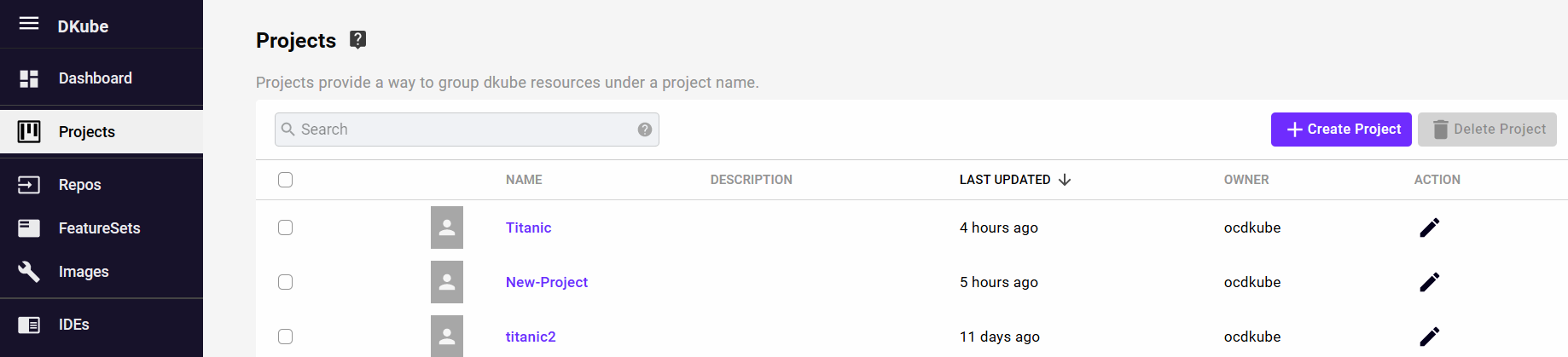

Projects¶

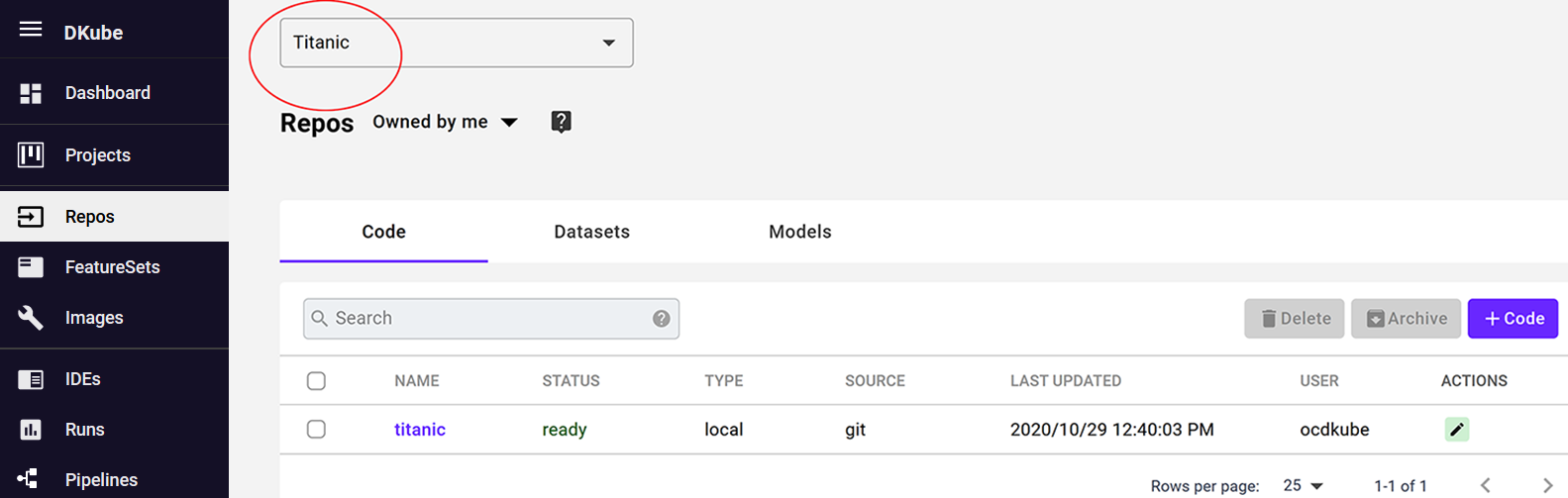

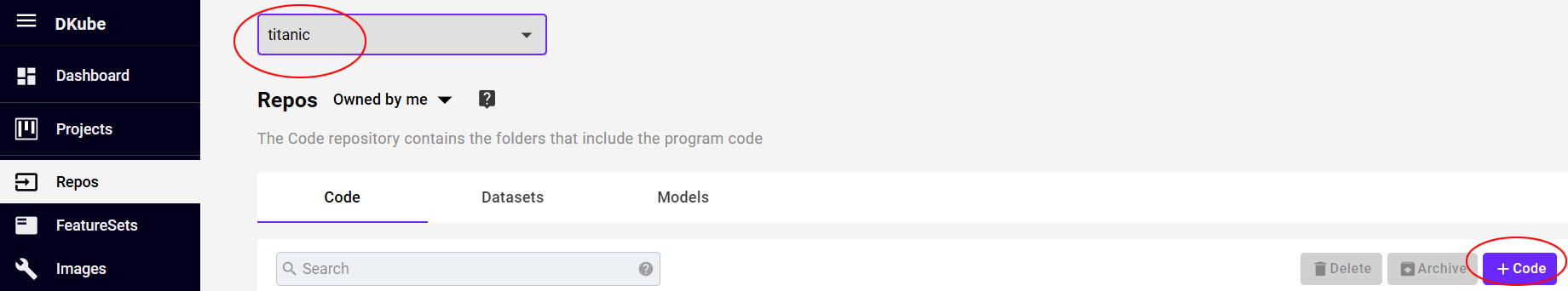

Projects allow the user to group entities into categories, and view them together. When a Project is selected, only the entities for that Project (Code, Datasets, Models, Runs, etc.) will be shown.

The Project selection is made at the top of the screen for the entities that are filtered by Projects.

The user can select a specific Project, and see only the entities for that project, or select “All Projects”, and see all of the entities for all Projects.

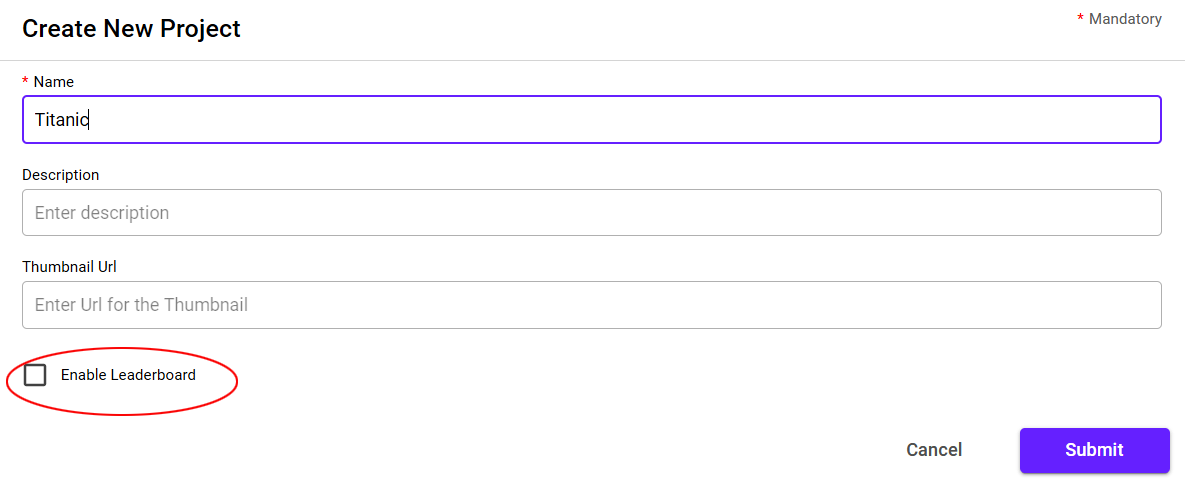

Create a Project¶

A Project is created by selecting the “+ Create Project” button. This will initiate a popup to fill in the details of the Project. Fill in the fields and select “Submit”.

| Field | Description |
|---|---|
| Name | User-defined name for the Project |
| Description | Optional user-defined information field |
| Thumbnail URL | Optional URL for a thumbnail image used for the Project |
| Enable Leaderboard | Select to add the Leaderboard capability as described at Leaderboard |

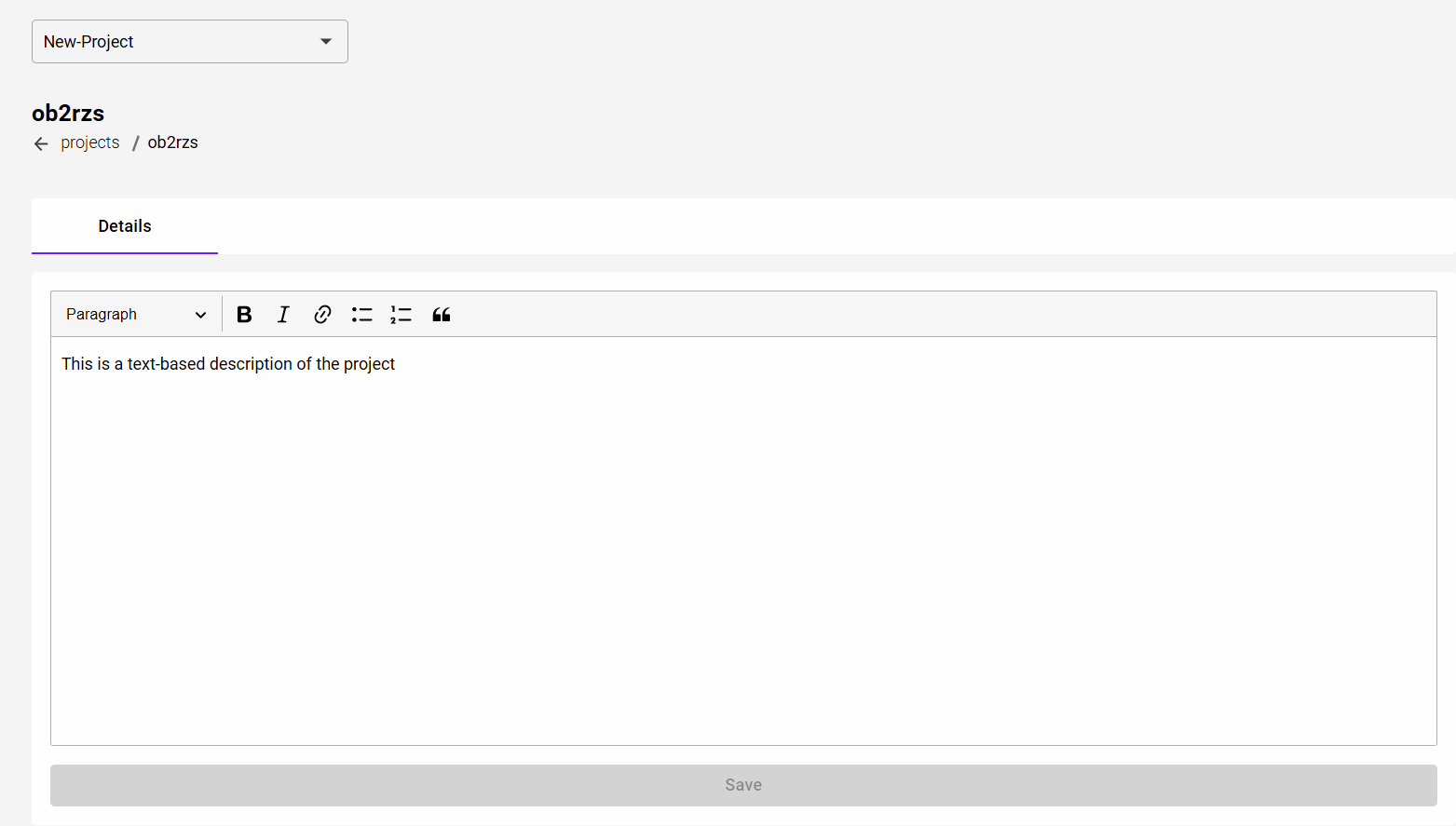

The details of the Project can be provided after creating the Project by selecting the Project name.

The initial Project fields can be edited using the Edit action button to the right of the Project list.

Create and View Project Entities¶

Entities such as Repos, IDEs, Runs, etc are created in the Project that is selected at the time the entity is created.

Note

Leaving the Project selection at “All Projects” allows a user to create entities that are not associated with any Project

Only the entities that are part of the Project will be viewed when a specific Project is selected at the top of the screen. Selecting “All Projects” will show entities that are associated with a Project as well as those that are not.
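The filtering rule described above can be sketched in a few lines of Python. This is only an illustration of the behavior, not DKube code: the `Entity` class, the `visible_entities` function, and the sample names are hypothetical, and `None` is assumed to stand for an entity that is not associated with any Project.

```python
from dataclasses import dataclass
from typing import List, Optional

ALL_PROJECTS = "All Projects"  # label used by the Project selector in the UI text


@dataclass
class Entity:
    name: str
    project: Optional[str]  # None: not associated with any Project (assumption)


def visible_entities(entities: List[Entity], selected_project: str) -> List[Entity]:
    """Return the entities that would be listed for the current Project selection."""
    if selected_project == ALL_PROJECTS:
        # "All Projects" shows entities with and without a Project association.
        return list(entities)
    # A specific Project shows only the entities that belong to it.
    return [e for e in entities if e.project == selected_project]


# Hypothetical sample data for illustration only.
entities = [
    Entity("mnist-code", "vision"),
    Entity("titanic-data", "tabular"),
    Entity("scratch-notebook", None),
]
```

With this sample data, selecting the hypothetical "vision" Project lists only `mnist-code`, while “All Projects” lists all three entities.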

The Project that an entity is associated with can be changed using the Edit action button to the right of the entity name.

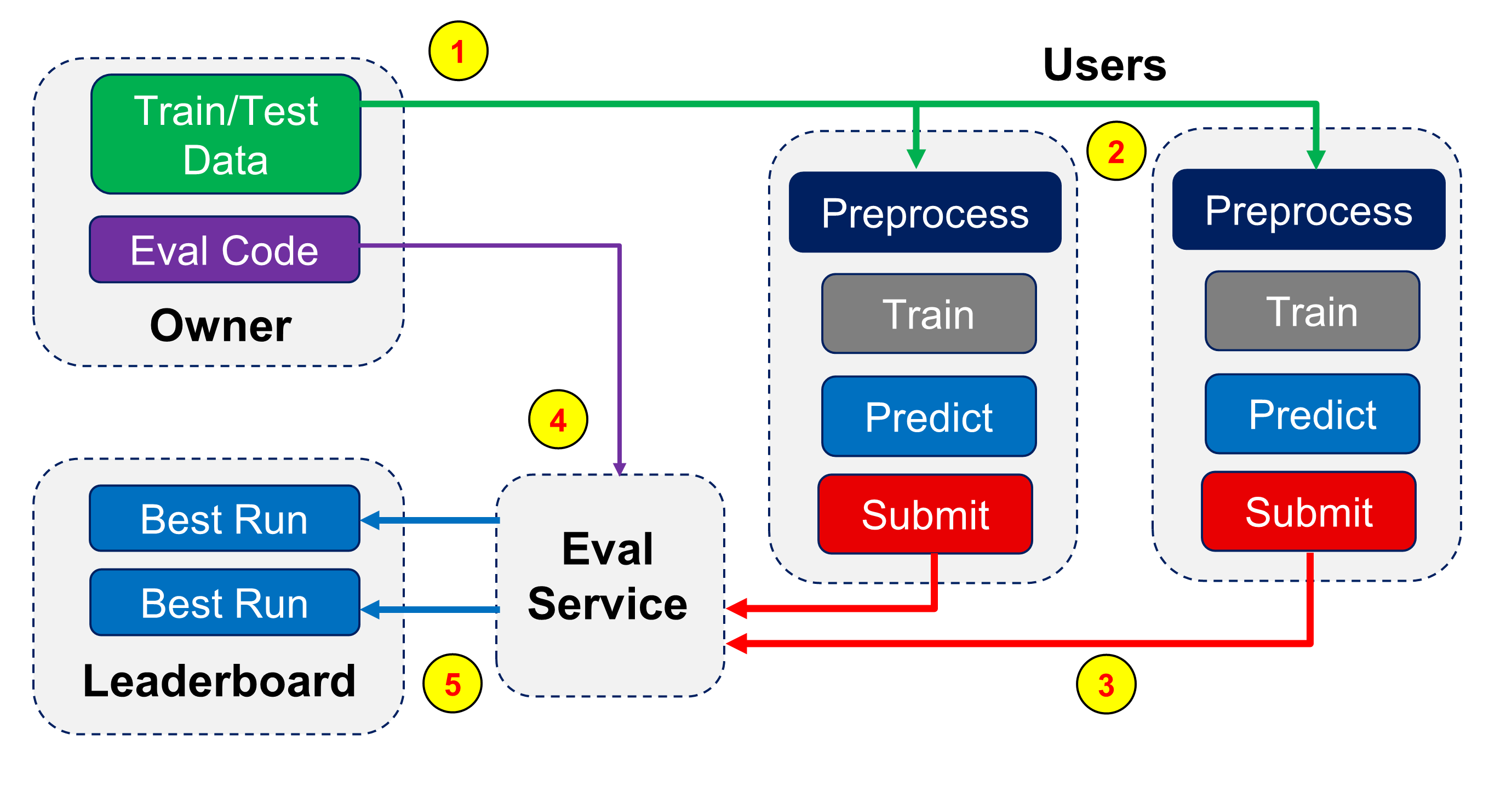

Leaderboard¶

Within a Project, a Leaderboard can be optionally enabled. This capability allows multiple users to cooperate based on a set of criteria set up by the owner of the Leaderboard. Users can run through the submission process more than once, and the best results for each participating user are shown in a table.

The workflow for the Leaderboard is as follows:

The Owner of the Project creates the training and test data repos for the Users to use

Each User creates the training and prediction code to achieve the goals

The Users submit their best models for evaluation

An evaluation service uses evaluation code created by the Project Owner to provide the outcome for the Leaderboard

The Leaderboard shows the best outcomes from each of the Users
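The final step, keeping only the best outcome per User, can be sketched as follows. This is a minimal illustration and not the DKube evaluation service: the function name, the `(user, score)` tuple shape, and the assumption that a single numeric score is compared (higher is better by default) are all hypothetical.

```python
from typing import Dict, Iterable, List, Tuple


def best_per_user(
    submissions: Iterable[Tuple[str, float]], higher_is_better: bool = True
) -> List[Tuple[str, float]]:
    """Keep each user's best score and return the rows ordered best first."""
    pick = max if higher_is_better else min
    best: Dict[str, float] = {}
    for user, score in submissions:
        # Users may submit more than once; only their best result is kept.
        best[user] = pick(best[user], score) if user in best else score
    return sorted(best.items(), key=lambda row: row[1], reverse=higher_is_better)


# Hypothetical submissions: (user, evaluation score).
submissions = [("alice", 0.91), ("bob", 0.88), ("alice", 0.94), ("bob", 0.90)]
leaderboard = best_per_user(submissions)
```

For an error-style metric where lower is better, the same function can be called with `higher_is_better=False`.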

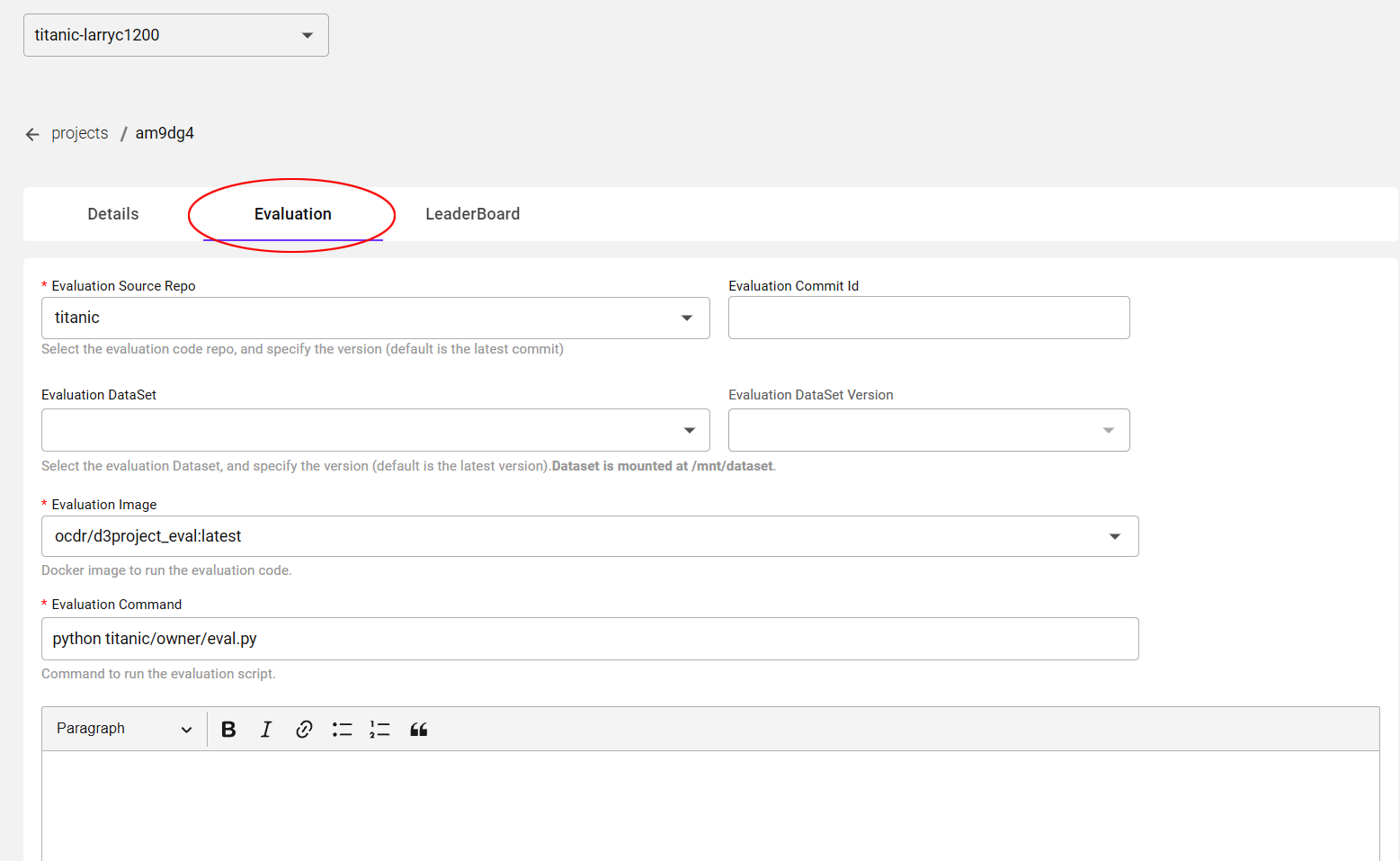

Set up the Leaderboard¶

When creating or editing a Project, the Leaderboard is enabled by selecting the “Enable Leaderboard” checkbox.

After the Leaderboard option has been enabled and the Project has been created, additional tabs are provided to set up the Leaderboard. The tabs become visible when the Project is selected from the list.

| Field | Description |
|---|---|
| Evaluation Source Repo | Evaluation code for the Leaderboard |
| Evaluation Commit ID | Optional Commit ID (defaults to most recent code repo commit) |
| Dataset/Version | Optional pointer to the Dataset to use for evaluation |
| Evaluation Image | Image to be used for evaluation (defaults to standard DKube image) |
| Evaluation Command | Program used to evaluate the results |

There is also a text-based field that the owner can use to provide the details of the Leaderboard.

Note

The evaluation screen is only visible to the project owner. The collaborator users only submit their results for evaluation, but cannot see what is being used to perform the evaluation.

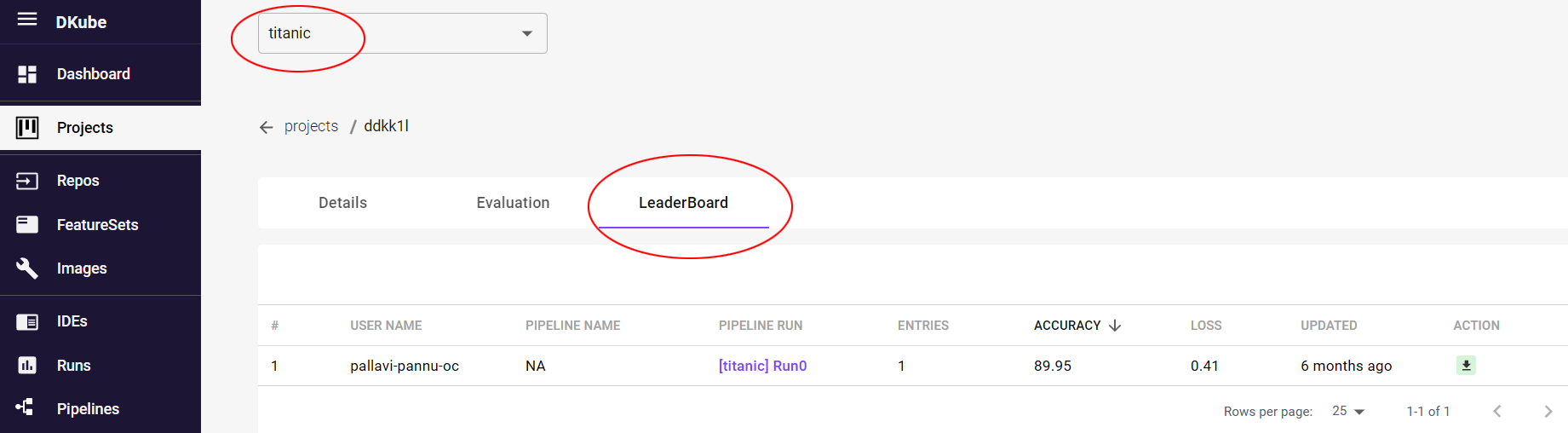

View the Results¶

The results of the Leaderboard can be viewed on the Leaderboard tab. The evaluation results can be compared and downloaded to a file for archiving or further analysis.

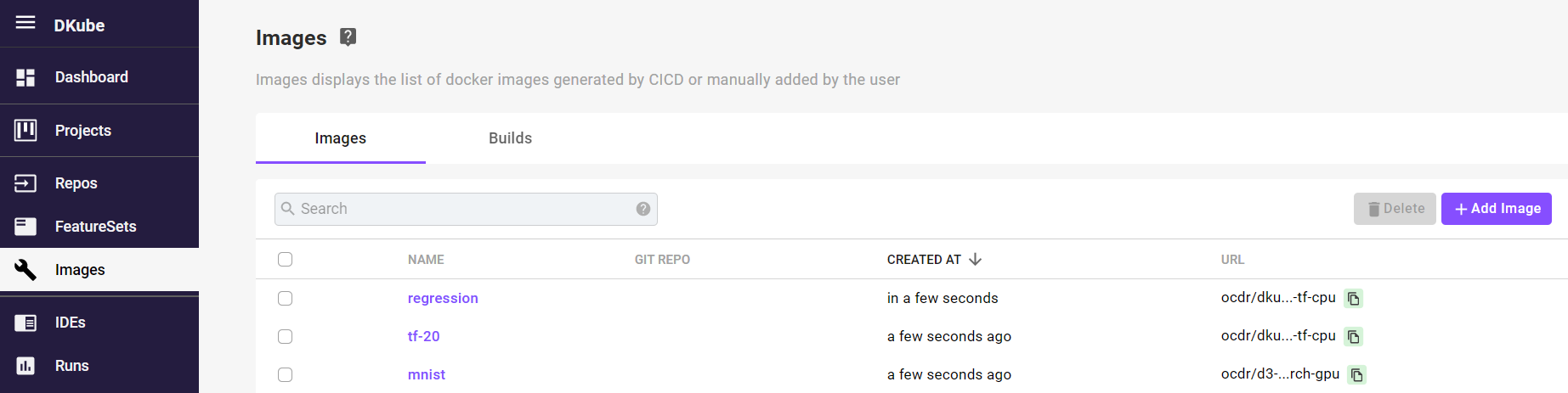

Images¶

DKube provides an image catalog that stores images for use in IDEs and Runs. The images can be added from an external source or built from a Code repo. The custom images will appear in the Image dropdown menu when creating an IDE or Run.

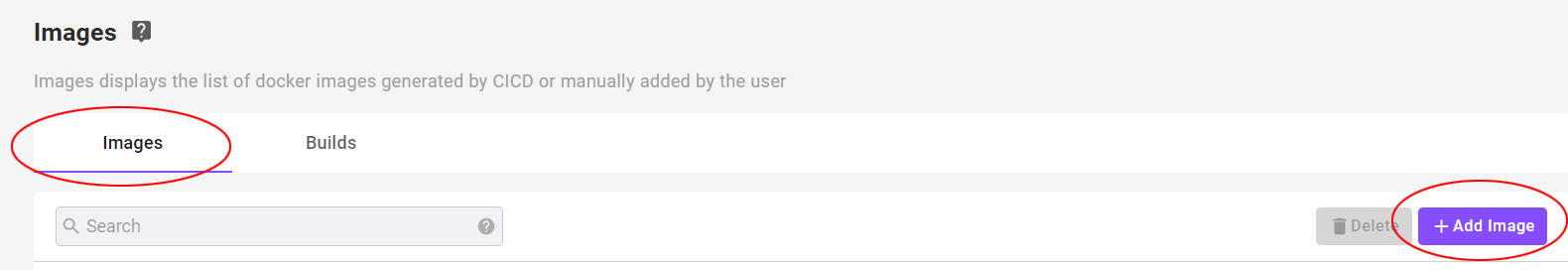

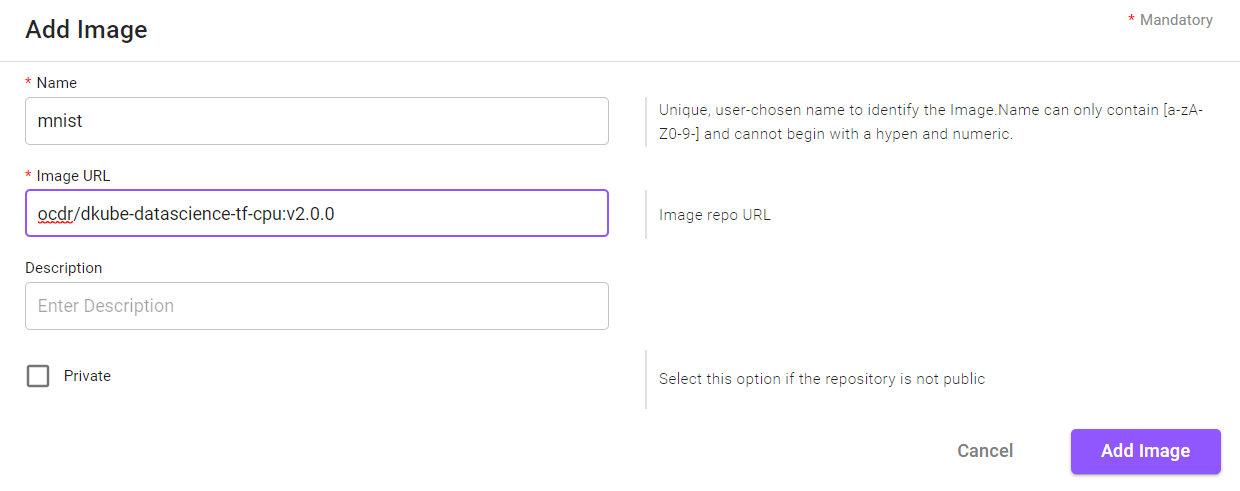

Add an Image¶

An image can be added to the image catalog by selecting the “+ Add Image” button on the top right. A popup appears with the necessary fields.

| Field | Value |
|---|---|
| Name | Unique user-chosen identification |
| Image URL | URL of image |
| Description | Optional, user-chosen field to provide details about the Image |
| Private | Select if the Image requires a Username and Password |

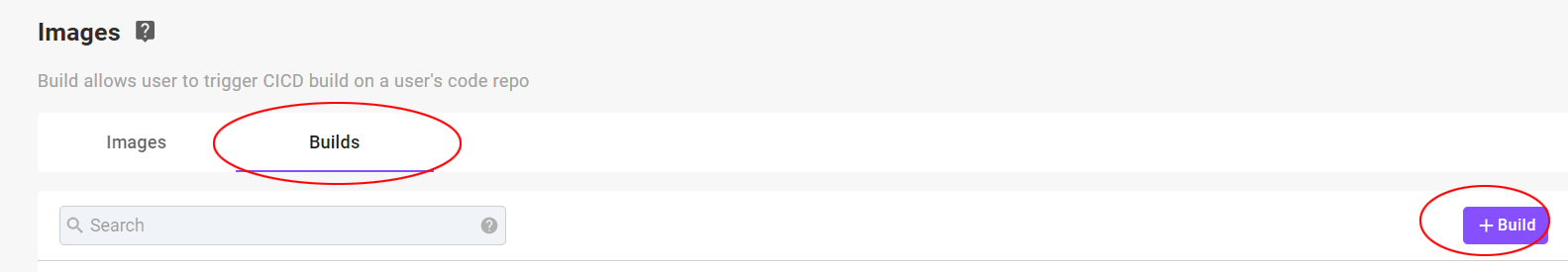

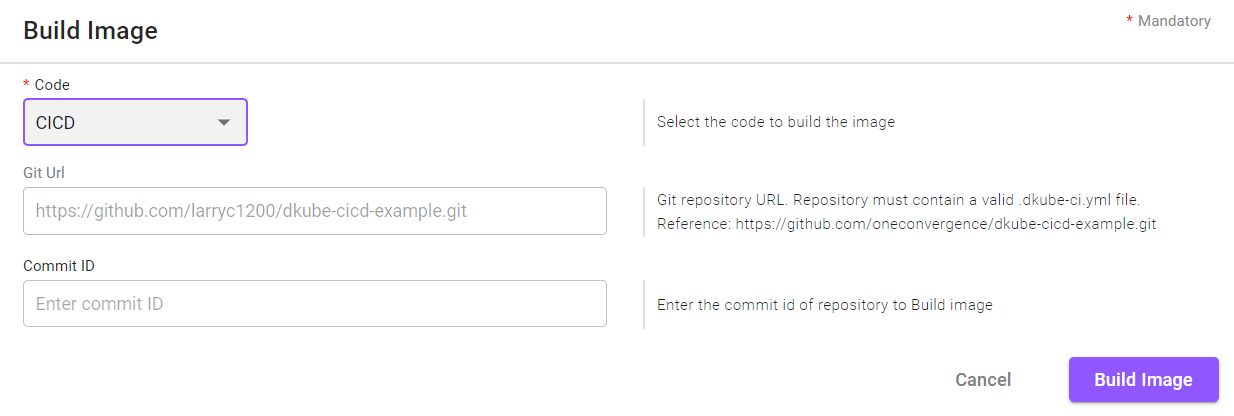

Build an Image¶

An image can be built from an existing Code repo using the CI/CD mechanism as described at CI/CD Image Creation

Building an image is accomplished by selecting the “+ Build” button on the top right. A popup appears with the necessary fields.

| Field | Value |
|---|---|
| Code | Available Code repos |
| Git URL | Repository that contains the Code & Build instructions |
| Commit ID | Commit ID of the Code repo - latest if blank |

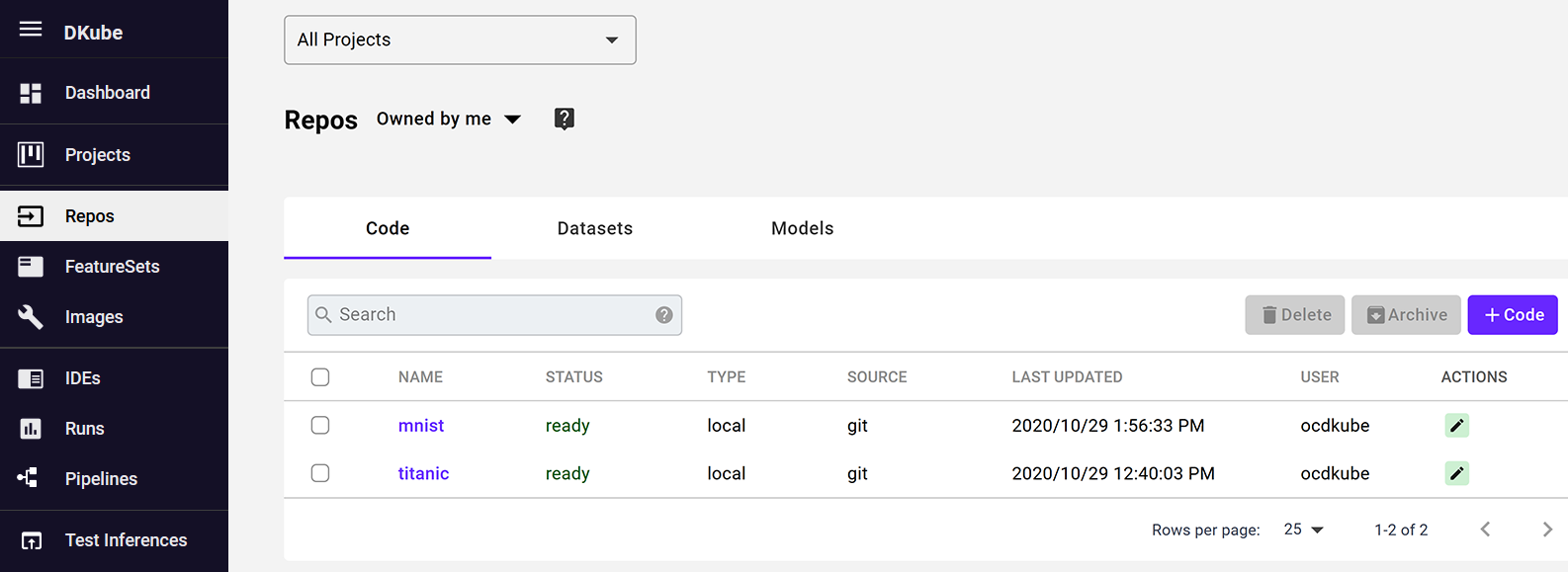

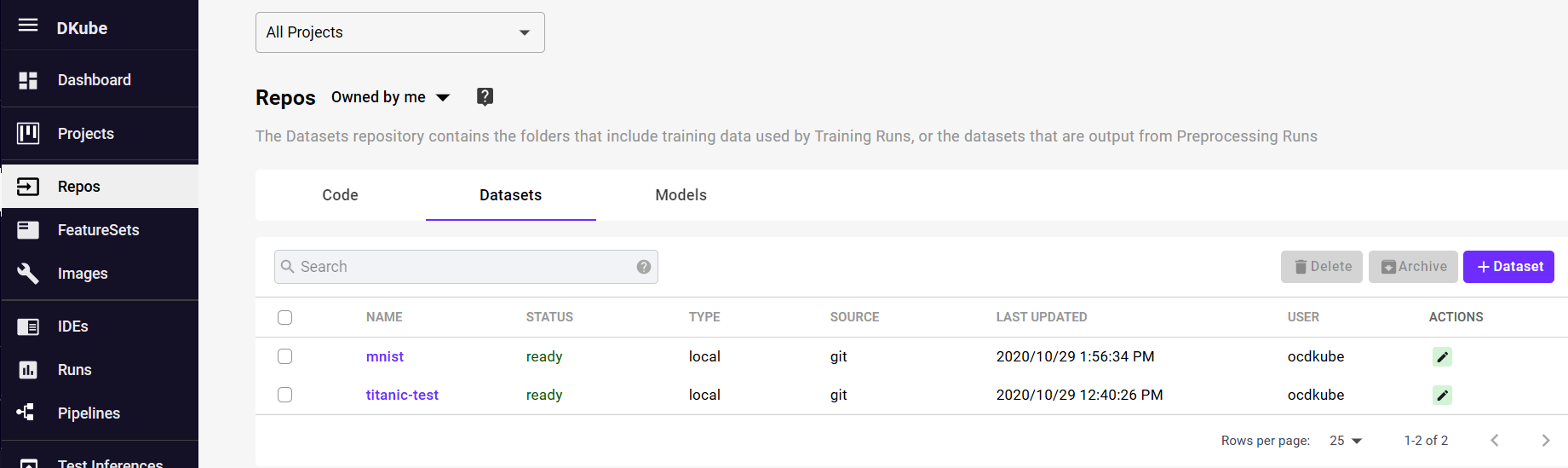

Repos¶

There are 4 types of repositories under the Repo menu item: Code, Datasets, FeatureSets, and Models.

In order to use them, they must first be accessible by DKube. There are different ways that the repositories can be accessed. These are described in more detail in the sections that describe each input source.

They can be uploaded to the internal DKube storage area. In this case, the data becomes part of DKube and cannot be updated externally.

They can be accessed as a reference pointer to a folder or file. In this case, the files can be updated by an external application and DKube will access the new version of the file on the next run.

Note

Entities (Code, Datasets, etc) will be created in the Project that is selected when the Repo is created. If “All Projects” is selected, the entity will not be associated with any Project.

Versioned vs Non-Versioned¶

Datasets & Models¶

The Dataset & Model repos can be optionally managed by the native DKube DVS versioning system, as explained at Versioning. When repos are created, the source selections guide whether the repo is part of the versioning system or not.

Note

The Datasets & Models need to be versioned with the DKube DVS system in order to be part of the full MLOps architecture. They can then be tracked in the lineage system, compared, etc.

Program Code¶

Code is not versioned within the native DKube system. It is normally developed and versioned in an IDE, then committed to a GitHub repository.

The behavior of which version of the code gets used depends upon whether it is being used by an IDE or a Run.

| | |
|---|---|
| IDE | The latest version of the program code will always be used. This is the case even if there is a specific commit ID filled in. The commit ID will be ignored. |
| Run | The code is uploaded into the local DKube storage based on the Commit ID field. If the Commit ID field is blank, the latest version of the code will be uploaded. If there is a specific GitHub Commit ID in the field, that version of the code will be uploaded and used. |
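The version-selection rule for IDEs and Runs can be summarized as a small decision function. This is a sketch of the documented behavior only; the function name, the `"ide"`/`"run"` labels, and the `LATEST` placeholder are hypothetical and do not correspond to a DKube API.

```python
from typing import Optional

LATEST = "latest"  # placeholder meaning "most recent commit" (hypothetical)


def code_version_used(context: str, commit_id: Optional[str]) -> str:
    """Return which code version is used, following the rules described above."""
    if context == "ide":
        # An IDE always uses the latest code; any commit ID is ignored.
        return LATEST
    if context == "run":
        # A Run uses the given commit ID, or the latest code if the field is blank.
        cleaned = (commit_id or "").strip()
        return cleaned if cleaned else LATEST
    raise ValueError(f"unknown context: {context!r}")
```

For example, `code_version_used("ide", "a1b2c3d")` returns `"latest"`, while `code_version_used("run", "a1b2c3d")` returns `"a1b2c3d"`.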

Code¶

The program code is contained in folders that are available within DKube to train models using datasets.

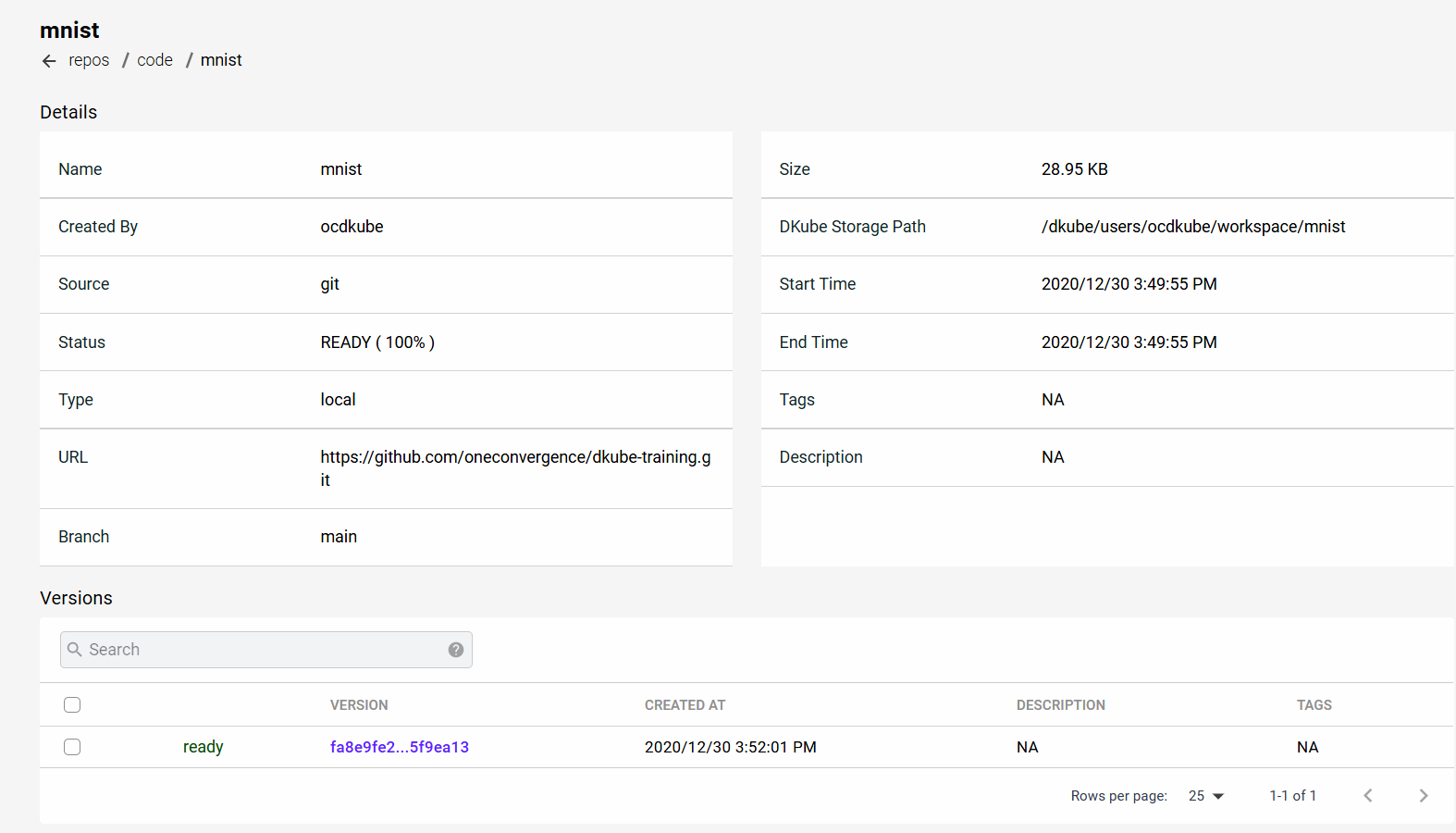

The Code screen provides the details on the programs that have been downloaded or linked by the current User, and programs that have been downloaded by other Users in the same Group.

The details on the code can be accessed by selecting the name. The details screen shows the source of the code, as well as a table of versions.

Add Code¶

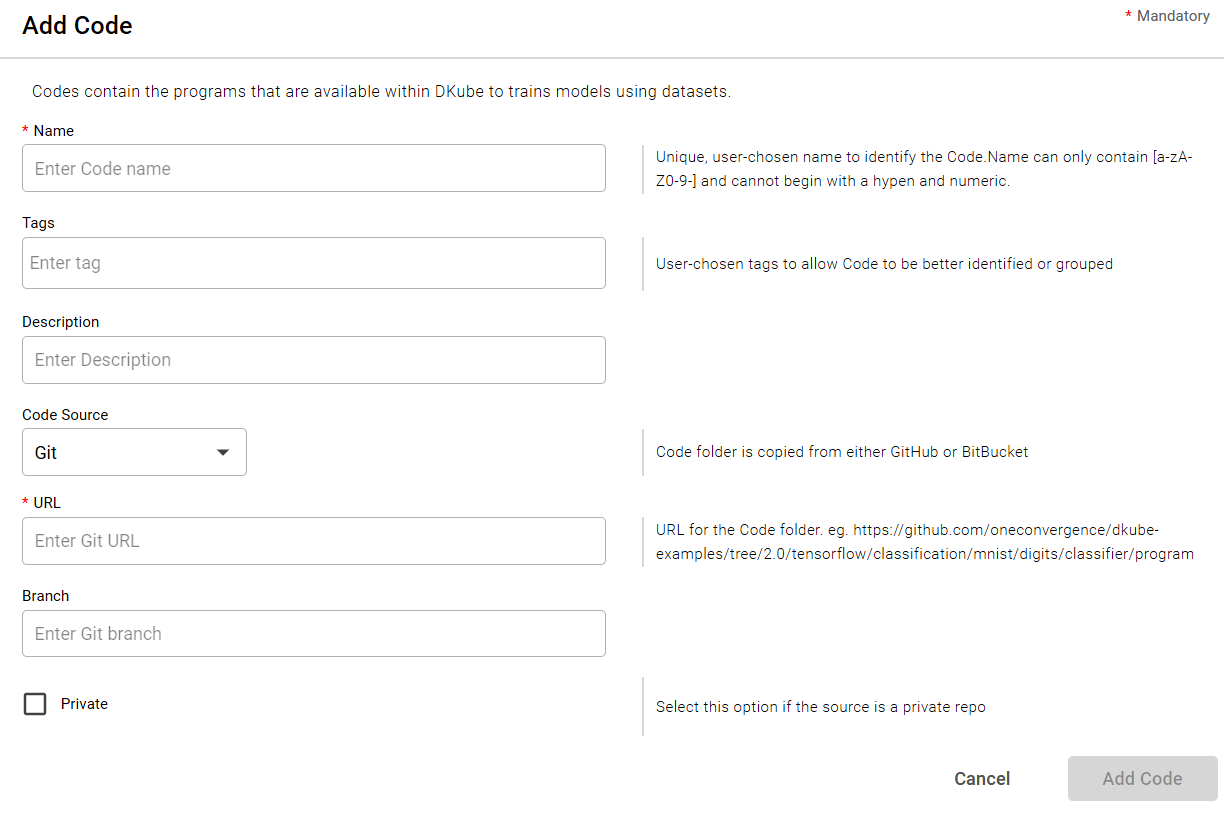

In order to add a code folder, and use it within DKube:

Select the “+ Code” button at the top right-hand part of the screen

Fill in the information necessary to access the code

Select the “Add Code” button

Fields for Adding Code¶

The input fields for adding code are described in this section.

| Field | Value |
|---|---|
| Name | Unique user-chosen identification |
| Tags | Optional, user-chosen detailed field to allow grouping or later identification |
| Description | Optional, user-chosen field to provide details about the Code |

Git¶

The Git selection creates a code folder from a GitHub, GitLab, or Bitbucket repo folder.

| | |
|---|---|
| Access Type | Uploaded to local DKube storage |

| Field | Value |
|---|---|
| URL | URL of the directory that contains the program code |
| Private | Select this option if you need additional credentials to access the repo |

Bitbucket Access

When accessing a Bitbucket repository, the following url forms are supported.

| URL | Authentication |
|---|---|
| https://xxxx | Authentication with username and password |
| git@xxxx | Authentication with ssh key |
| ssh://git@xxxx | Authentication with ssh key |
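The mapping from URL form to authentication method in the table above can be expressed as a short lookup. This is a sketch for illustration: the function name and the example repository URLs are hypothetical, and only the three URL prefixes listed in the table are recognized.

```python
def bitbucket_auth_method(url: str) -> str:
    """Map a Bitbucket repository URL form to the authentication it implies."""
    if url.startswith("https://"):
        return "username and password"
    if url.startswith("ssh://git@") or url.startswith("git@"):
        return "ssh key"
    raise ValueError(f"unsupported Bitbucket URL form: {url}")


# Hypothetical repository URLs, one per supported form.
examples = [
    "https://bitbucket.org/team/repo.git",
    "git@bitbucket.org:team/repo.git",
    "ssh://git@bitbucket.org/team/repo.git",
]
methods = [bitbucket_auth_method(u) for u in examples]
```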

The following links provide a reference on how to access a Bitbucket repository:

Bitbucket Set Up Key Reference 1

Bitbucket Set Up Key Reference 2

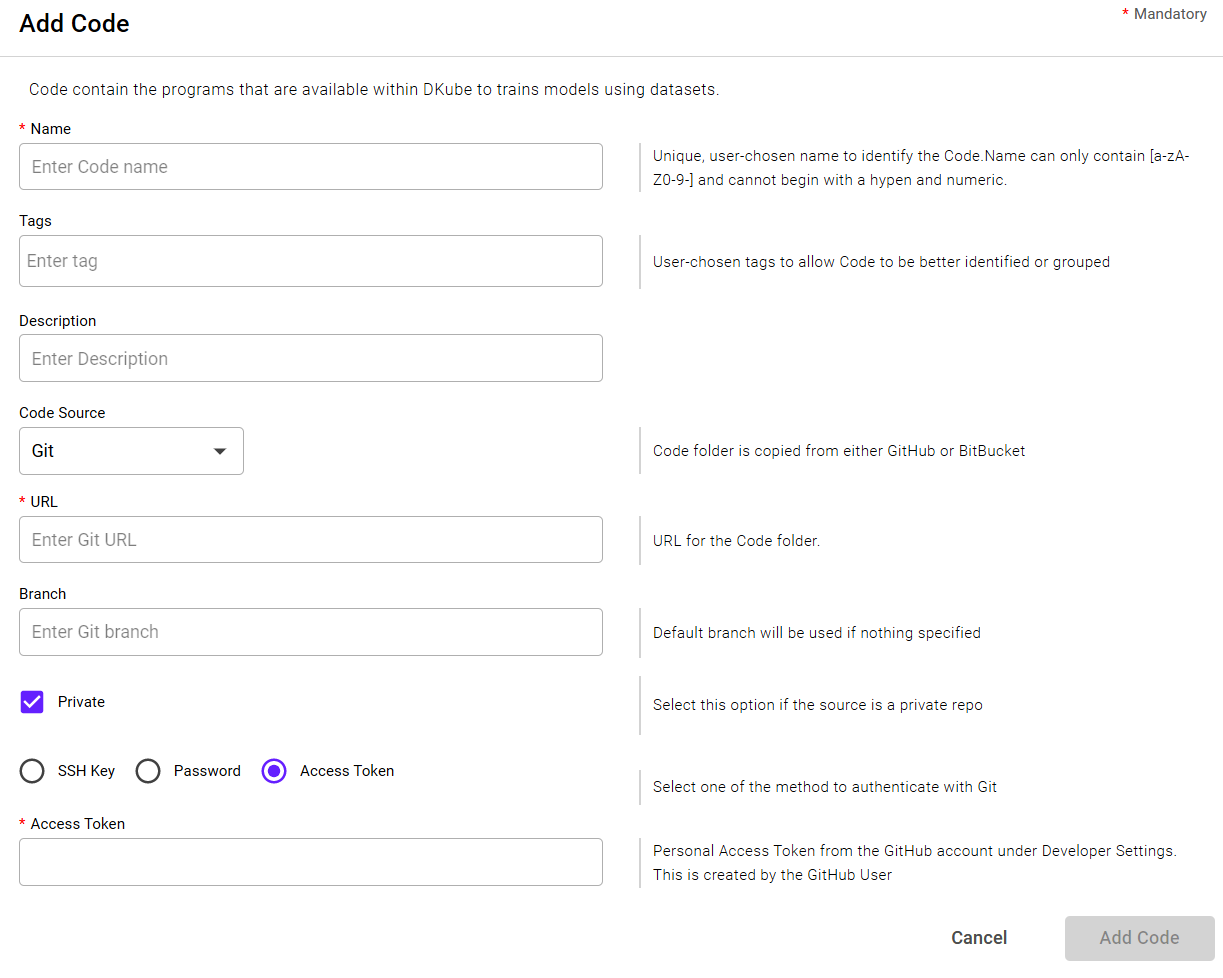

Private Authentication

When selecting a Private repo, more options are provided to enter the credentials. There are 3 different methods to enter credentials:

ssh key

Username and password

Authentication token

When using an ssh key:

The public key should be copied to the repository server

The private key should be copied to the local workstation, and uploaded from there

Note

DKube supports the pem file format and the OpenSSH & RSA private key formats
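Before uploading a private key, it can be useful to check locally which format the key file uses. The sketch below looks only at the first line of the key text; the header strings are the standard markers written by OpenSSH and OpenSSL tooling, while the function name and returned labels are hypothetical. It is a local pre-check under those assumptions, not DKube's own validation.

```python
# Standard first-line markers for two common private key encodings.
KNOWN_HEADERS = {
    "-----BEGIN OPENSSH PRIVATE KEY-----": "OpenSSH",
    "-----BEGIN RSA PRIVATE KEY-----": "RSA",
}


def private_key_format(key_text: str) -> str:
    """Return a label for the key format, or "unknown" if not recognized."""
    lines = key_text.strip().splitlines()
    first_line = lines[0].strip() if lines else ""
    return KNOWN_HEADERS.get(first_line, "unknown")
```

A result of `"unknown"` does not necessarily mean the key is unusable; it only means the first line did not match one of the two markers listed here.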

The following link provides a reference on how to create a GitHub token: Create GitHub Token

Note

The last set of credentials for each host and credential type will be saved in an encrypted form internally, and prepopulated in the fields

Delete a Code Repo¶

Select the Code to be deleted with the left-hand checkbox

Click the “Delete” icon at top right-hand side of screen

Confirm the deletion

Datasets¶

The Datasets contain the training data. Datasets can be added to DKube in the following ways:

Manually added for use as an input for a run with or without versioning capability, as explained in Versioning

Manually added as a placeholder for use as a versioned output

Created as an output from a preprocessing run

DVS provides a way for DKube to track versions within the application, and is explained at Versioning. To use this option, the DVS name will need to be created, as described in DVS. This will set up the metadata and data storage locations. A default DVS storage is created when DKube is installed.

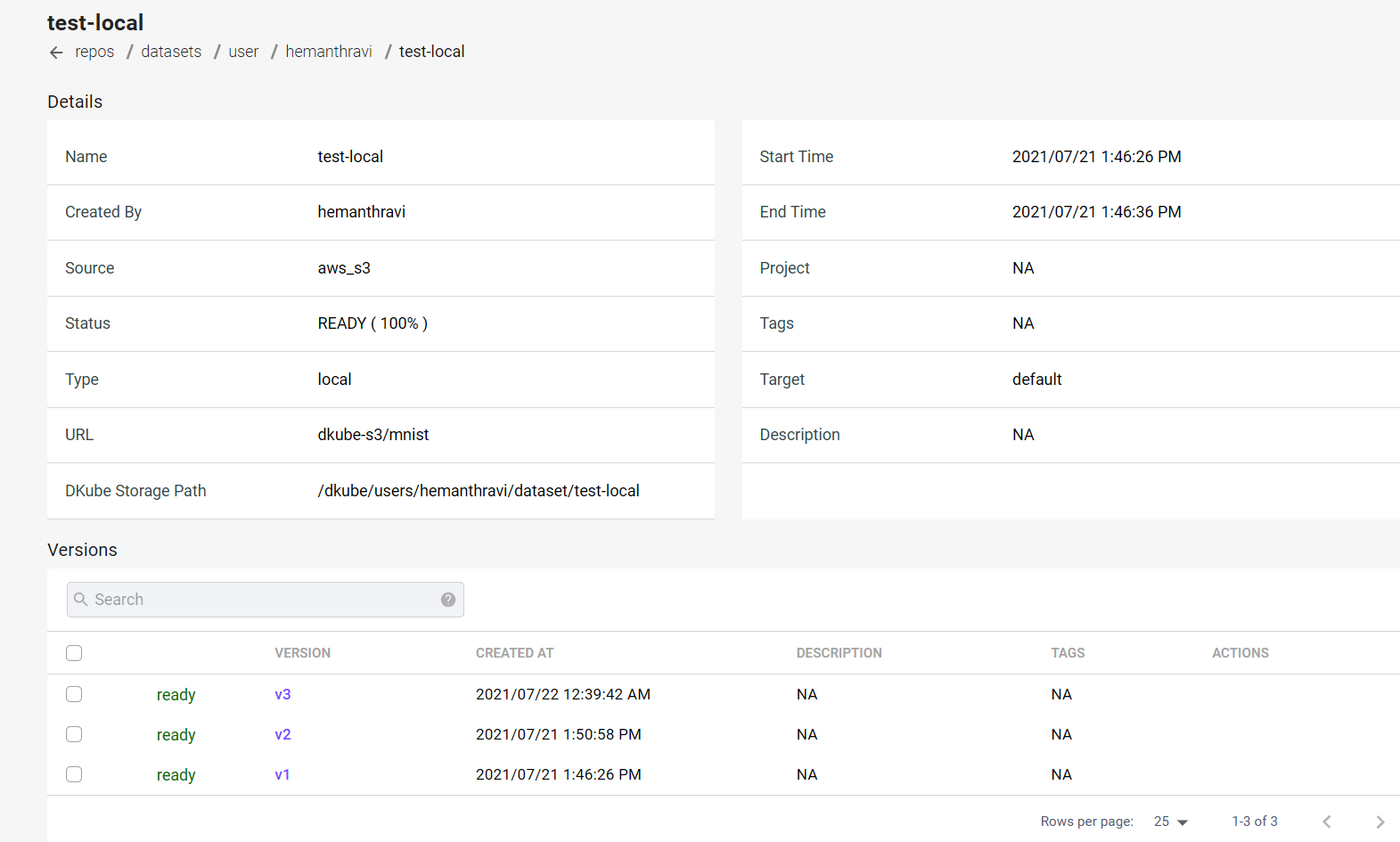

Dataset Details¶

The details for the Dataset contain information about where it came from, where it is stored, and the versions.

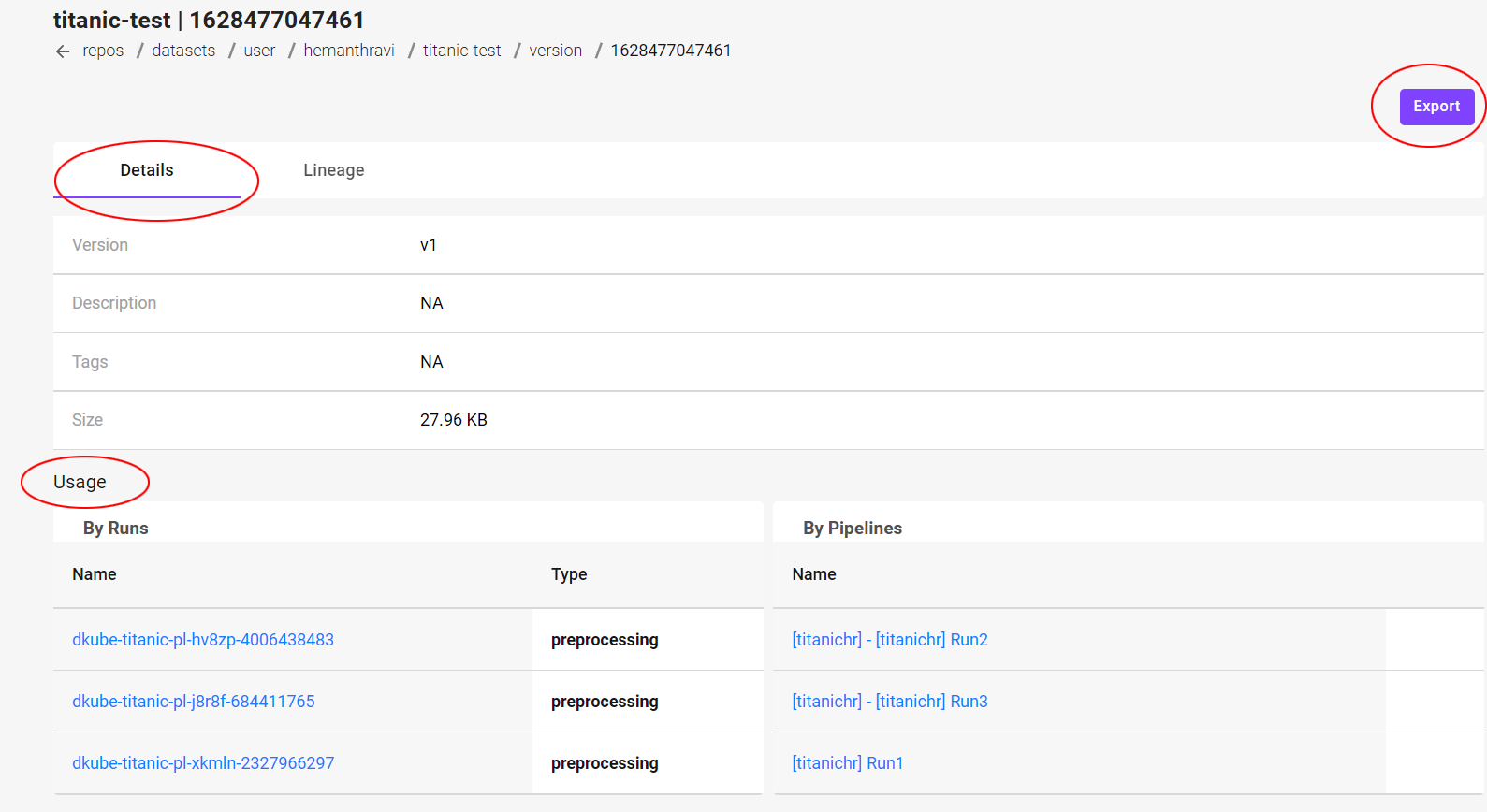

Dataset Version Detail¶

Selecting the version of a Dataset brings up a screen that provides more details on that version, including a list of the Runs that use that version.

The Dataset can be downloaded to your local file system from this page using the “Export” button at the top right.

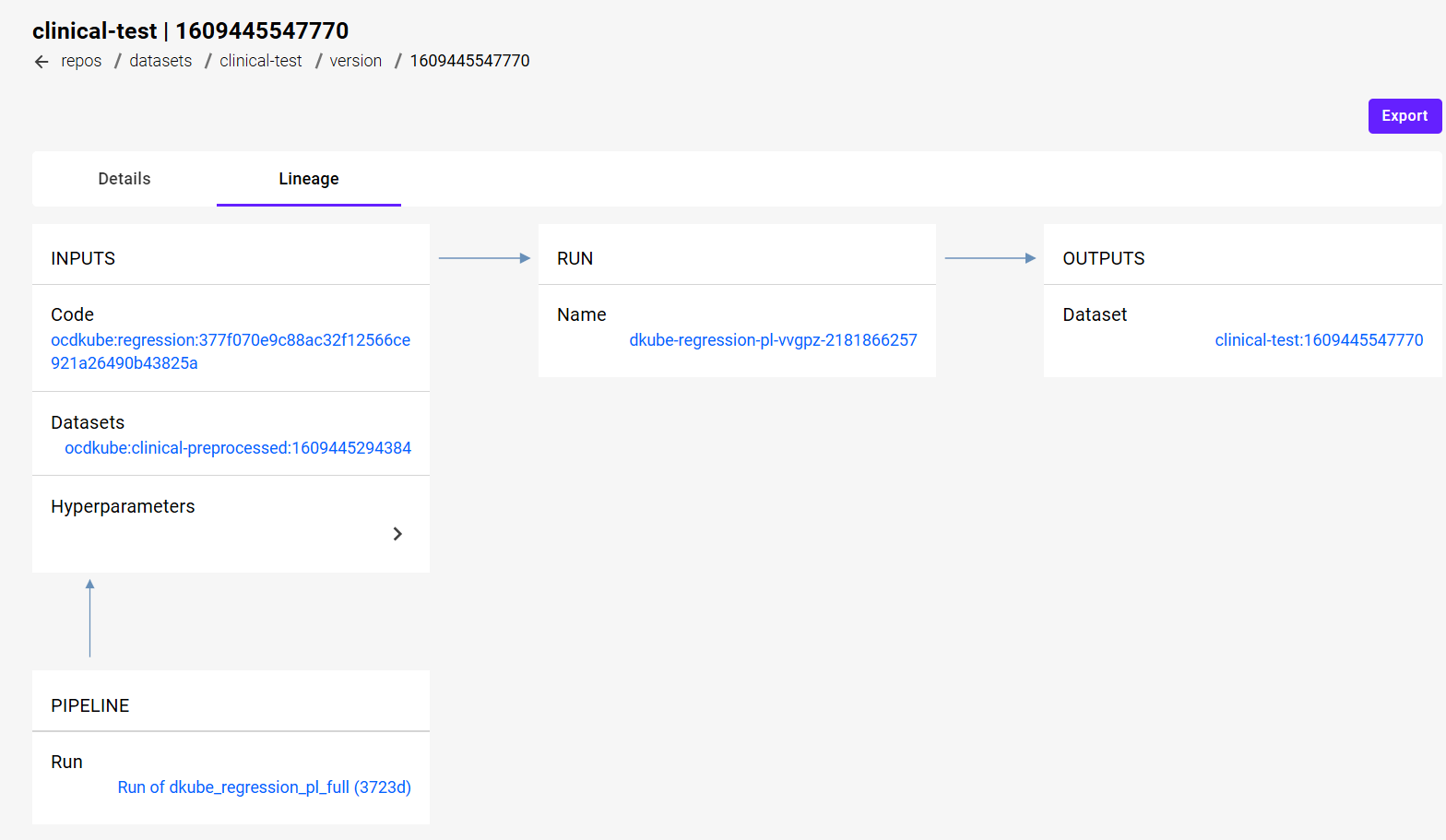

Dataset Version Lineage¶

If the Dataset was created as part of a Preprocessing Run, selecting the “Lineage” tab of the Dataset will provide the full lineage. This provides all of the inputs that were used to create the Dataset.

Add a Dataset¶

The input fields for adding the Dataset depend upon the source.

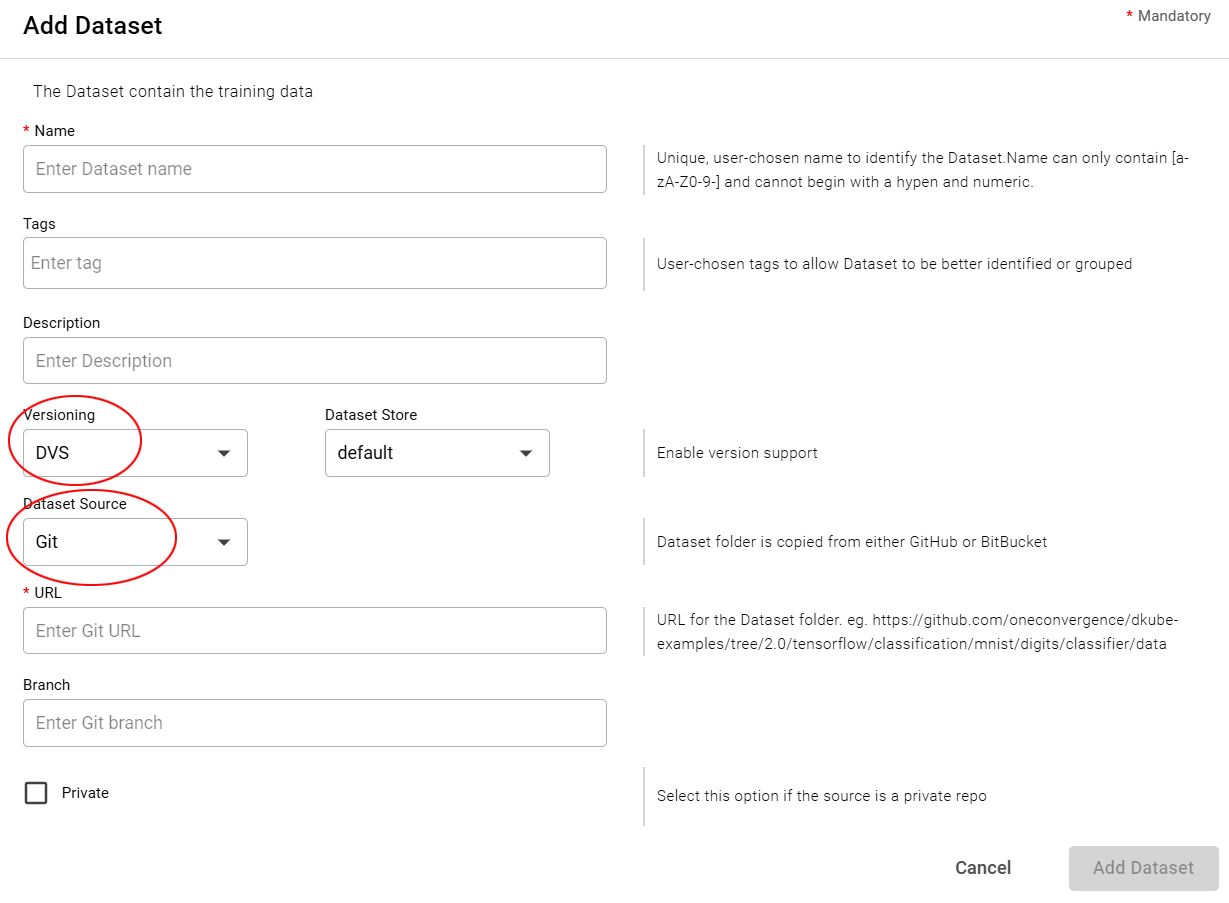

Datasets Used for Training Input¶

A Dataset that is intended as the input to a training run can be added with or without versioning capability. For this type of usage, the fields should be filled in as follows:

| Field | Value |
|---|---|
| Versioning | DVS or None, based on whether versioning is selected |
| Dataset Source | From dropdown menu |

Note

The dataset source field will show different options depending upon whether versioning is selected. Some input sources are only compatible with versioning, and others are only compatible without it

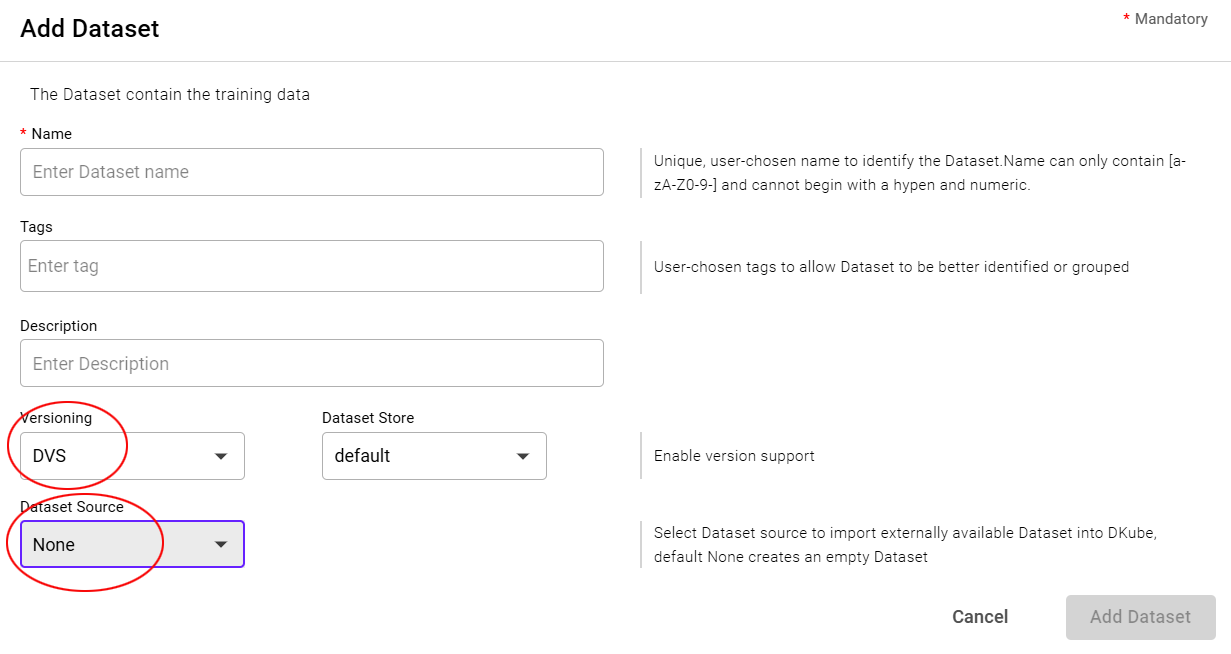

Datasets Used for Preprocessing Output¶

A new Dataset that is intended as an output for a preprocessing run must first be added in the Dataset repo as a blank (version 1) entry. A new version of the same Dataset will be created by the preprocessing run. For this usage, the fields should be filled in as follows:

| Field | Value |
|---|---|
| Versioning | DVS |
| Dataset Source | None |

Adding the Dataset¶

In order to access a Dataset, and use it within DKube:

Select the “+ Dataset” button at the top right-hand part of the screen

Fill in the information necessary to access the Dataset

Select the “Add Dataset” button

Fields for Adding a Dataset¶

For all inputs there are common fields.

| Field | Value |
|---|---|
| Name | Unique user-chosen identification |
| Tags | Optional, user-chosen detailed field to allow grouping or later identification |
| Description | Optional, user-chosen field to provide details about the Dataset |
| Versioning | Select DVS to enable the automatic internal DKube DVS versioning as described at Versioning, or None to use external versioning from the source |

The fields for specific data sources are as follows:

The Git selection creates a Dataset from a GitHub, GitLab, or Bitbucket repo folder.

| | |
|---|---|
| Access Type | Uploaded to local DKube storage |
| Versioned with DVS | Yes |

| Field | Value |
|---|---|
| URL | URL of the directory that contains the Dataset |
| Branch | Branch within the Git repo |
| Private | Select this option if you need additional credentials to access the repo |

Note

If the branch of the repo is contained within the URL, the Branch input field can be left blank. If the Git repo does not have the branch in the URL, the Branch input must be filled in to identify it.

Bitbucket Access

When accessing a Bitbucket repository, the following url forms are supported.

| URL | Authentication |
|---|---|
| https://xxxx | Authentication with username and password |
| git@xxxx | Authentication with ssh key |
| ssh://git@xxxx | Authentication with ssh key |

The following links provide a reference on how to access a Bitbucket repository:

Bitbucket Set Up Key Reference 1

Bitbucket Set Up Key Reference 2

Private Authentication

When selecting a Private repo, more options are provided to enter the credentials. There are 3 different methods to enter credentials:

ssh key

Username and password

Authentication token

When using an ssh key:

The public key should be copied to the repository server

The private key should be copied to the local workstation, and uploaded from there

Note

DKube supports the pem file format and the OpenSSH & RSA private key formats

The following link provides a reference on how to create a GitHub token: Create GitHub Token

The fields are compatible with the standard S3 File System. The files can be:

On AWS

On an S3-compatible Minio server (https://min.io/)

| Access Type | Action | Versioned with DVS |
|---|---|---|
| DVS | Uploaded to local DKube storage | Yes |
| None | Referenced | No |

| Field | Value |
|---|---|
| AWS | Check this box if the data is on AWS |
| Endpoint | If not AWS, URL of the Minio server endpoint |
| Access Key ID | |
| Secret Access Key | |
| Bucket | |
| Prefix/Subpath | Folder within the bucket. If the bucket is called “examples”, and it has a sub-folder called “mnist”, with a further sub-folder called “dataset”, the syntax for this field is “mnist/dataset”. |
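The relationship between the Bucket and Prefix/Subpath fields can be illustrated by splitting a full storage location into its two parts. This is a small helper sketch; the function name is hypothetical, and it simply treats the first path component as the bucket and the remainder as the prefix.

```python
from typing import Tuple


def split_s3_location(location: str) -> Tuple[str, str]:
    """Split 'bucket/sub/folder' (optionally with 's3://') into (bucket, prefix)."""
    if location.startswith("s3://"):
        location = location[len("s3://"):]
    # The first component is the bucket; everything after it is the prefix.
    bucket, _, prefix = location.strip("/").partition("/")
    return bucket, prefix


bucket, prefix = split_s3_location("examples/mnist/dataset")
```

For the example in the table, the Bucket field would hold `examples` and the Prefix/Subpath field would hold `mnist/dataset`. A location that names only a bucket yields an empty prefix.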

The GCS input selection will use Google Cloud Storage.

| | |
|---|---|
| Access Type | Uploaded to local DKube storage |
| Versioned with DVS | Yes |

| Field | Value |
|---|---|
| Bucket | Bucket name |
| Prefix | Relative path from the bucket to the specified folder |
| Secret Key | JSON file containing the GCP private key |

DKube can access a k8s persistent volume created for the user.

| | |
|---|---|
| Access Type | Referenced |
| Versioned with DVS | No |

| Field | Value |
|---|---|
| Volume | Persistent volume directory created for user |

The NFS input option allows a cluster wide nfs server to be the source of the data.

| | |
|---|---|
| Access Type | Referenced |
| Versioned with DVS | No |

| Field | Value |
|---|---|
| Server | IP address of the nfs server (e.g. 192.168.200.1) |
| Path | Absolute path to the dataset on the nfs server (e.g. /mnt/dkube/mnist) |

The Hostpath input option mounts a directory on the host filesystem within the cluster. This data source can be used to access shared storage mounted on all the nodes in the cluster.

| | |
|---|---|
| Access Type | Referenced |
| Versioned with DVS | No |

| Field | Value |
|---|---|
| Hostpath | The folder path in the host file system |

The Redshift input option allows DKube to access Amazon Redshift as the source of the data.

Note

Use of Redshift within DKube is described at Amazon Redshift

| | |
|---|---|
| Access Type | Referenced |
| Versioned with DVS | No |

| Field | Value |
|---|---|
| Endpoint | The Redshift IP address & port from the Redshift/Clusters screen under the Properties tab (e.g. http(s)://<IP>:<Port>). This field is optional when using an API server. If an API server is used, the endpoint is retrieved from the API server for the specified database name. |
| Database | Database Name |
| Username | User Name |
| Password | User Password |
| Cert | Optionally upload a CA certificate from your local workstation if required to validate the server’s certificate |

This input source supports:

MySQL

MSSQL

Oracle

| | |
|---|---|
| Access Type | Referenced |
| Versioned with DVS | No |

| Field | Value |
|---|---|
| Provider | Choose one of the supported SQL providers |

After choosing a connection method, a set of fields will appear to support access.

Note

The SQL details are validated only when connecting using a username and password. For ODBC and JDBC, the details are not validated.

Once a Run or IDE has been created using an SQL input source, the configuration file is available:

From the environment variable DKUBE_DATASET_SQL_CONFIG; the file resides at /etc/dkube/sql.conf

From the mount point of each dataset
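Inside a Run or IDE, the configuration file could be located with a few lines of Python. This is a sketch under stated assumptions: it assumes that DKUBE_DATASET_SQL_CONFIG holds the path to the configuration file (the text above does not say whether the variable holds the path or the contents), it falls back to /etc/dkube/sql.conf when the variable is not set, and it makes no assumption about the file's internal format. The function names are hypothetical.

```python
import os
from pathlib import Path
from typing import Mapping, Optional

ENV_VAR = "DKUBE_DATASET_SQL_CONFIG"   # name taken from the text above
DEFAULT_PATH = "/etc/dkube/sql.conf"   # location taken from the text above


def sql_config_path(environ: Mapping[str, str] = os.environ) -> Path:
    """Return the config path, assuming the variable (if set) contains a path."""
    return Path(environ.get(ENV_VAR) or DEFAULT_PATH)


def read_sql_config(environ: Mapping[str, str] = os.environ) -> Optional[str]:
    """Return the raw file contents, or None if the file is not present."""
    path = sql_config_path(environ)
    return path.read_text() if path.is_file() else None
```

Passing the environment as a parameter keeps the helper easy to exercise outside of a DKube Run, where the variable and file are not expected to exist.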

The FSx input source mounts an FSx clustered file system at the specified mount point.

| | |
|---|---|
| Access Type | Referenced |
| Versioned with DVS | No |

| Field | Value |
|---|---|
| FS DNS Name | DNS name of the FSx endpoint |
| Mountname | FSx mount name |
| Volumehandle | FSx Filesystem ID |

This input source provides the ability to access a Dataset from other sources. The sources are:

Any public url

A file from the user’s local workstation

| | |
|---|---|
| Access Type | Uploaded to local DKube storage |
| Versioned with DVS | Yes |

| Field | Value |
|---|---|
| URL | Public URL for access to the directory |
| Select File | Select the file to be uploaded |
| Extract uploaded file | Check this box to extract the files from a compressed archive |

Note

The Workstation input source will upload a single file. To upload multiple files in one step, combine them into a single archive file (.tar, .tar.gz, .tgz, or .zip). If the uploaded archive contains multiple files, the “Extract uploaded file” checkbox should be selected. DKube will then extract the archive into its individual files. If “Extract uploaded file” is not checked, the archive will be treated as a single file, with the assumption that the model code will use it as it is.
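One way to prepare such an archive on the local workstation is with Python's standard `tarfile` module. The sketch below is only an example of building a .tar.gz file before upload; the function names and file names are hypothetical, and files are stored by base name so that they extract into a flat folder.

```python
import tarfile
from pathlib import Path
from typing import Iterable, List


def bundle_files(paths: Iterable[Path], archive_path: Path) -> Path:
    """Pack several files into one .tar.gz archive for a single-file upload."""
    with tarfile.open(archive_path, "w:gz") as archive:
        for path in paths:
            path = Path(path)
            # Store each file under its base name, without parent directories.
            archive.add(path, arcname=path.name)
    return Path(archive_path)


def archive_members(archive_path: Path) -> List[str]:
    """List the file names contained in the archive, sorted alphabetically."""
    with tarfile.open(archive_path, "r:gz") as archive:
        return sorted(archive.getnames())
```

A call such as `bundle_files([Path("train.csv"), Path("labels.csv")], Path("dataset.tar.gz"))` would produce one file to select in the upload dialog, with “Extract uploaded file” checked so that both files are restored.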

Delete a Dataset¶

Select the Dataset to be deleted with the left-hand checkbox

Click the “Delete” icon at top right-hand side of screen

Confirm the deletion

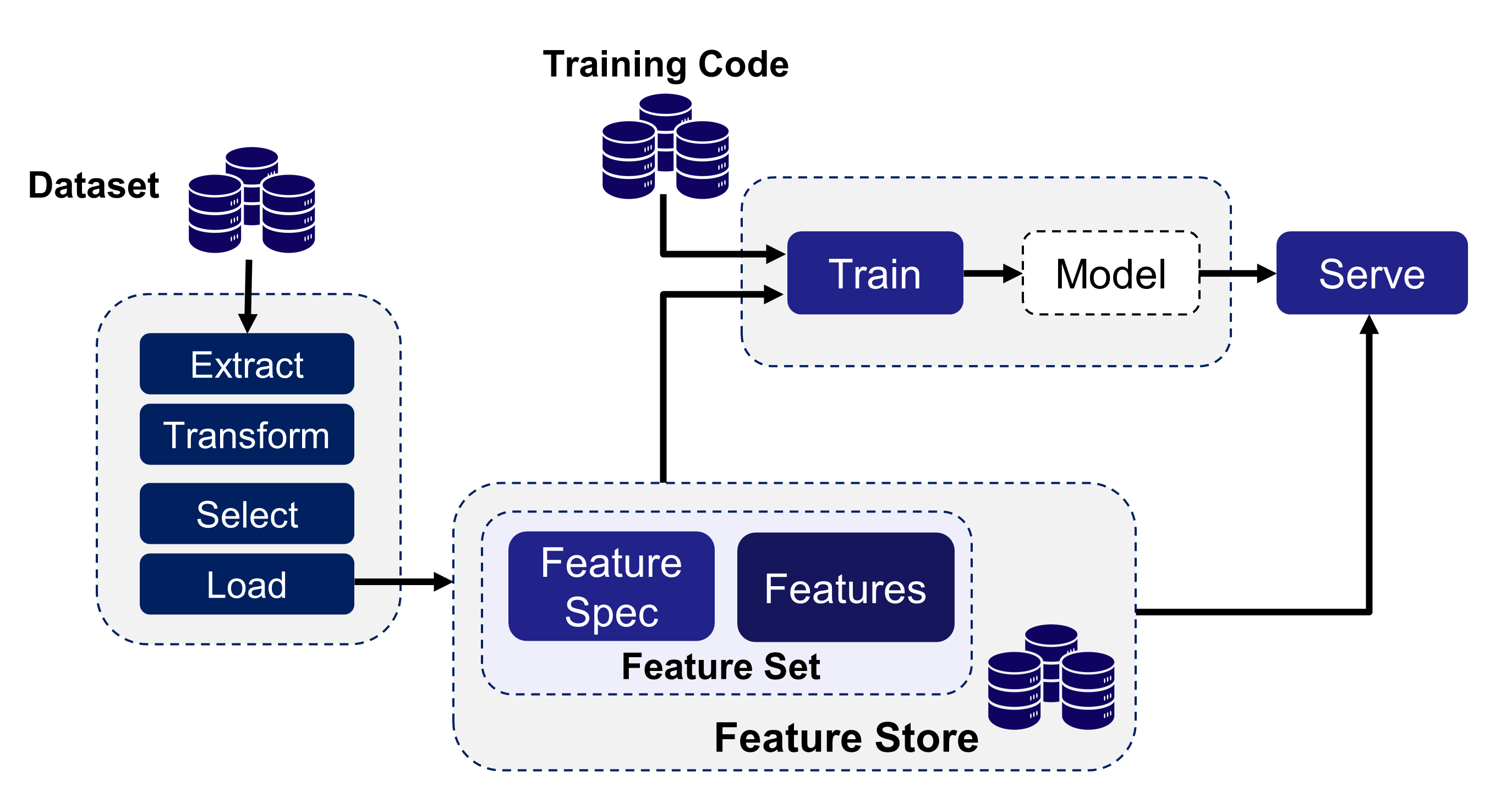

FeatureSets¶

DKube allows an extracted version of a Dataset to be used for training. The extraction identifies the features that are best correlated to an accurate prediction, and leads to a more accurate training run. The workflow for using a Feature Store within DKube is:

Create a FeatureSet from a Dataset by applying a Feature Specification in a Preprocessing Run

Use the FeatureSet in a Training Run rather than the original raw Dataset

FeatureSet Sharing¶

FeatureSets are global resources. Once created, they are available to other users.

Configure the Feature Store¶

Before using the Feature Store capability, the storage area needs to be configured. This is done through the “Configuration” menu in the Operator section. This is described at Configuration

Important

The Feature Store backend configuration should not be changed after being used by DKube

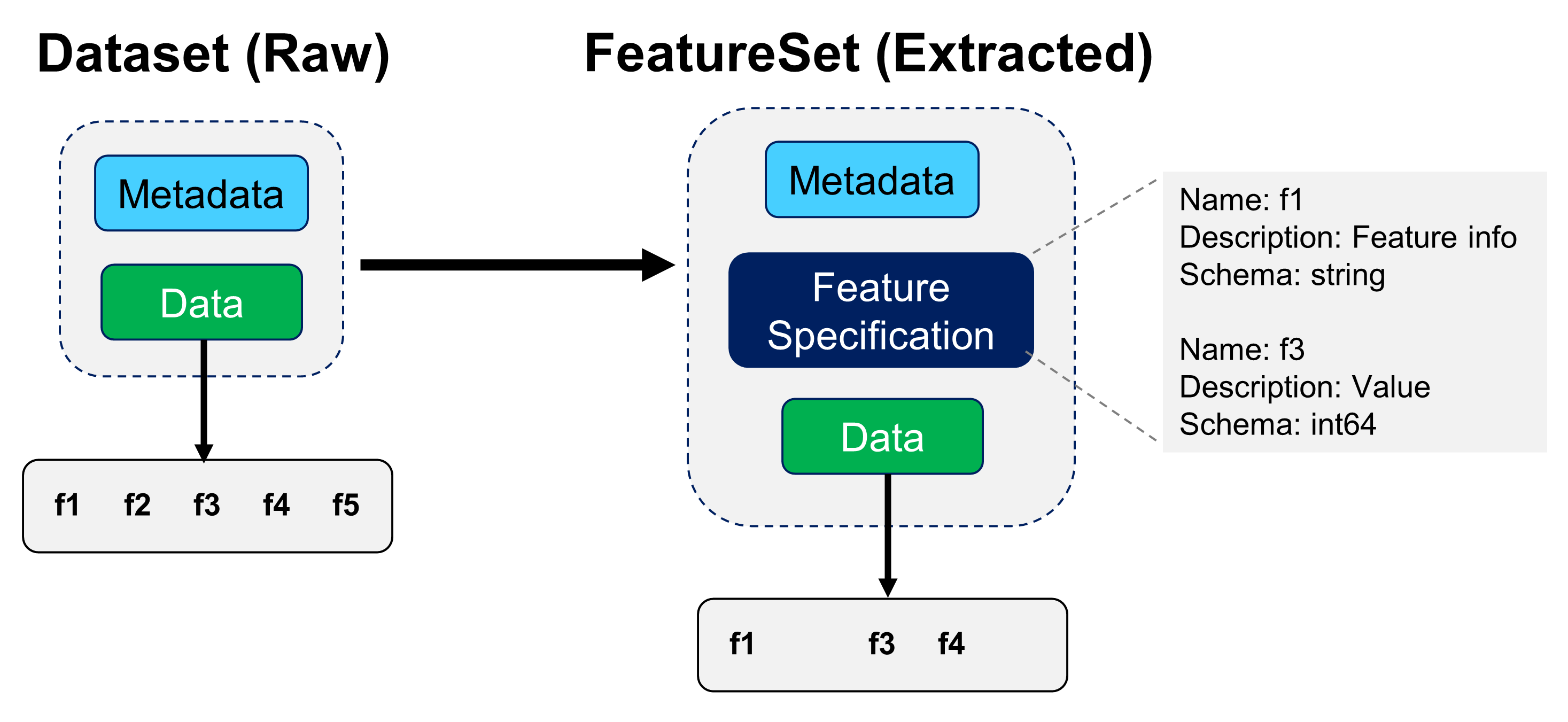

Feature Specification¶

The Feature Specification contains metadata for the features in the FeatureSet. It provides details on each feature, and selects the features that will be used for training. The Feature Specification can also provide the metadata for additional features that are derived from the original Dataset.

The Feature Specification file includes the metadata for the features that will be included in the resulting FeatureSet. The Feature Specification format is as follows:

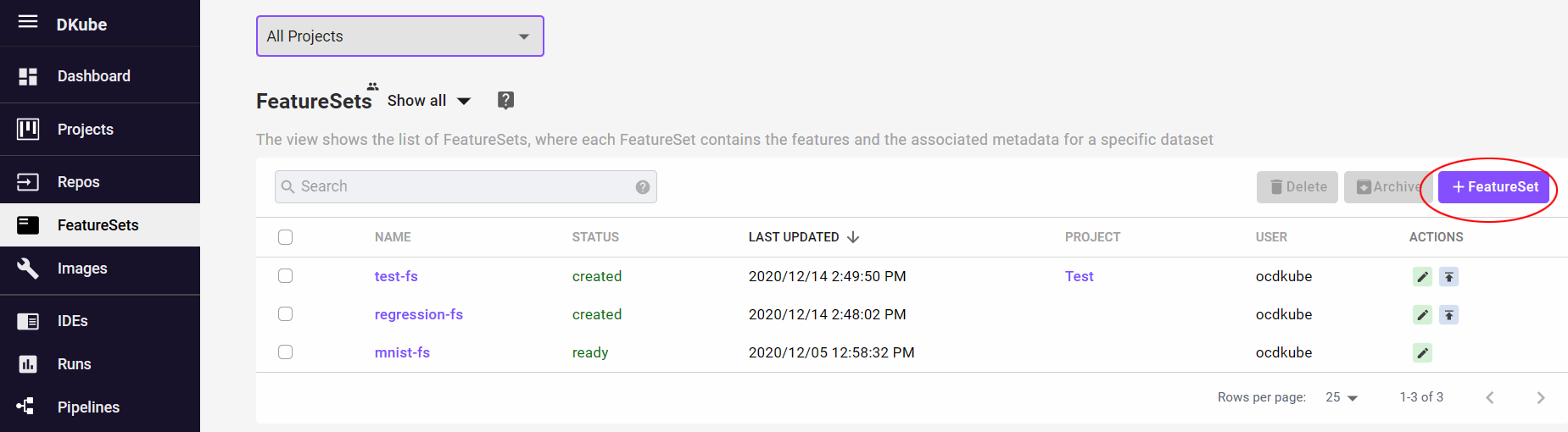

Create a FeatureSet¶

The FeatureSet menu screen shows the FeatureSets that have been created. If a FeatureSet is associated with a Project, it will be identified. FeatureSets that are not associated with a Project will have the column blank.

Creating a new FeatureSet is accomplished by selecting the “+ FeatureSet” button on the far right.

Note

The FeatureSet will be created in the Project that is selected when the “+ FeatureSet” button is selected. If “All Projects” is selected, the FeatureSet will not be associated with any Project.

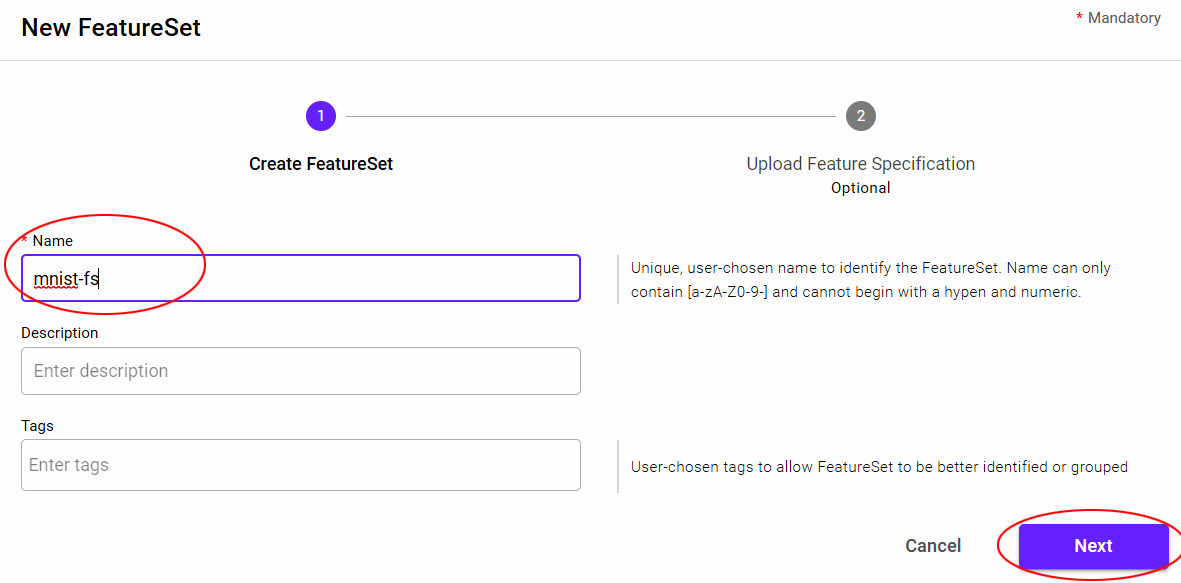

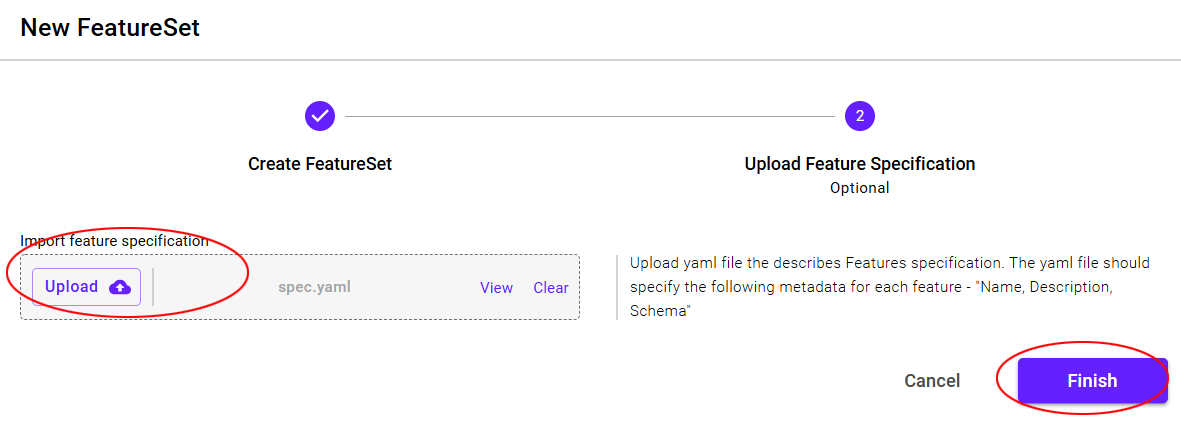

The “+ FeatureSet” button will open a popup to fill in the required information.

After filling in the options, select the “Next” button to optionally upload a Feature Specification.

A Feature Specification can also be uploaded to the FeatureSet after creation using the edit icon on the right.

This creates the first version of the FeatureSet, with the expectation that the Feature Specification will be applied to a Dataset through a Preprocessing run - creating the FeatureSet that will be used in the Training Run.

Create a new FeatureSet Version¶

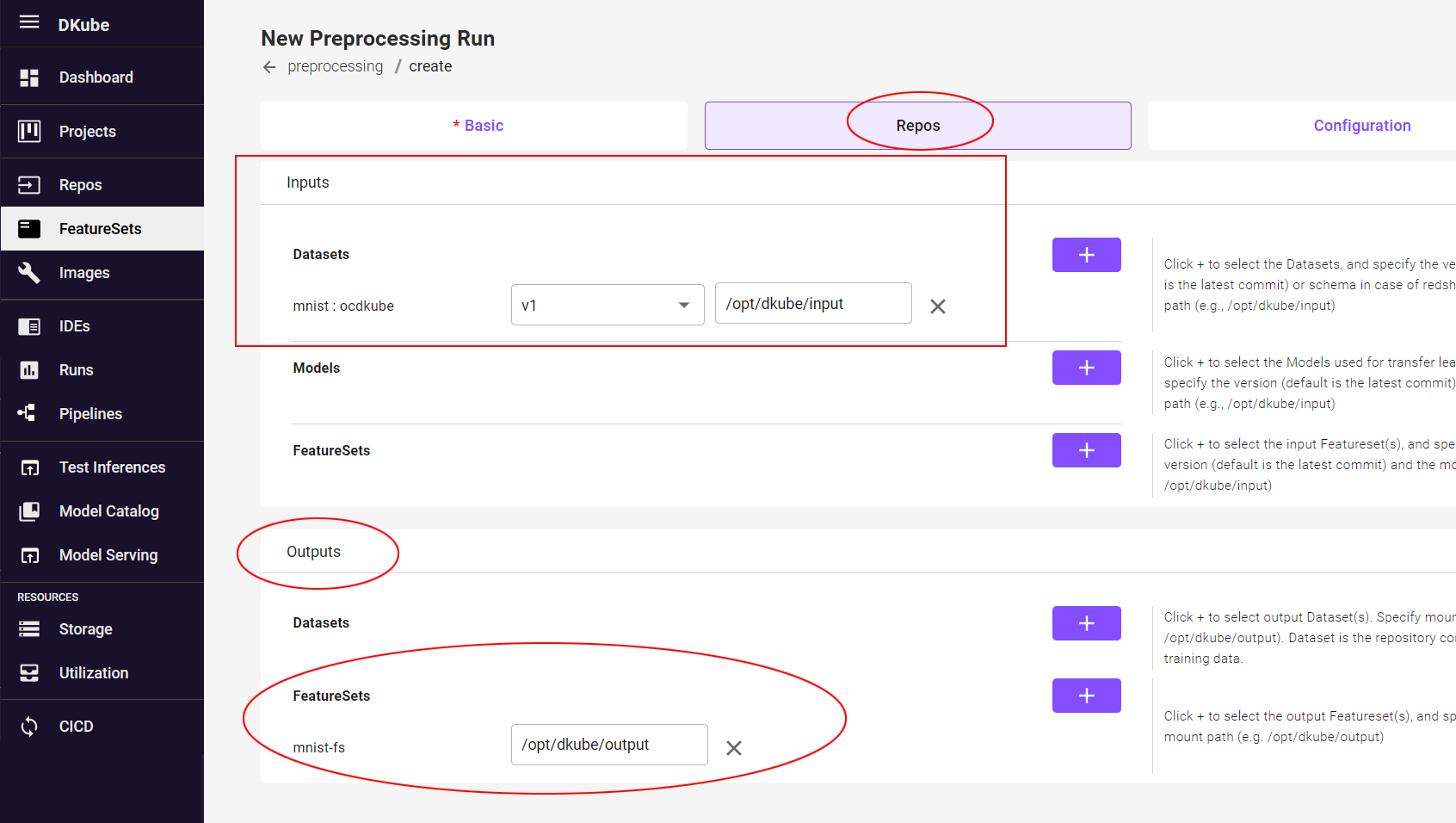

A Preprocessing Run takes the initial FeatureSet and applies the Feature Specification to it. The program then writes the new features and creates a version of the FeatureSet (which is now an extracted Dataset) that will be used by the Training Run.

When creating a Preprocessing Run (explained in Preprocessing Runs), the input is a Dataset, and the output is a FeatureSet. The FeatureSet contains the Feature Spec that will be applied to create a new version of the FeatureSet.

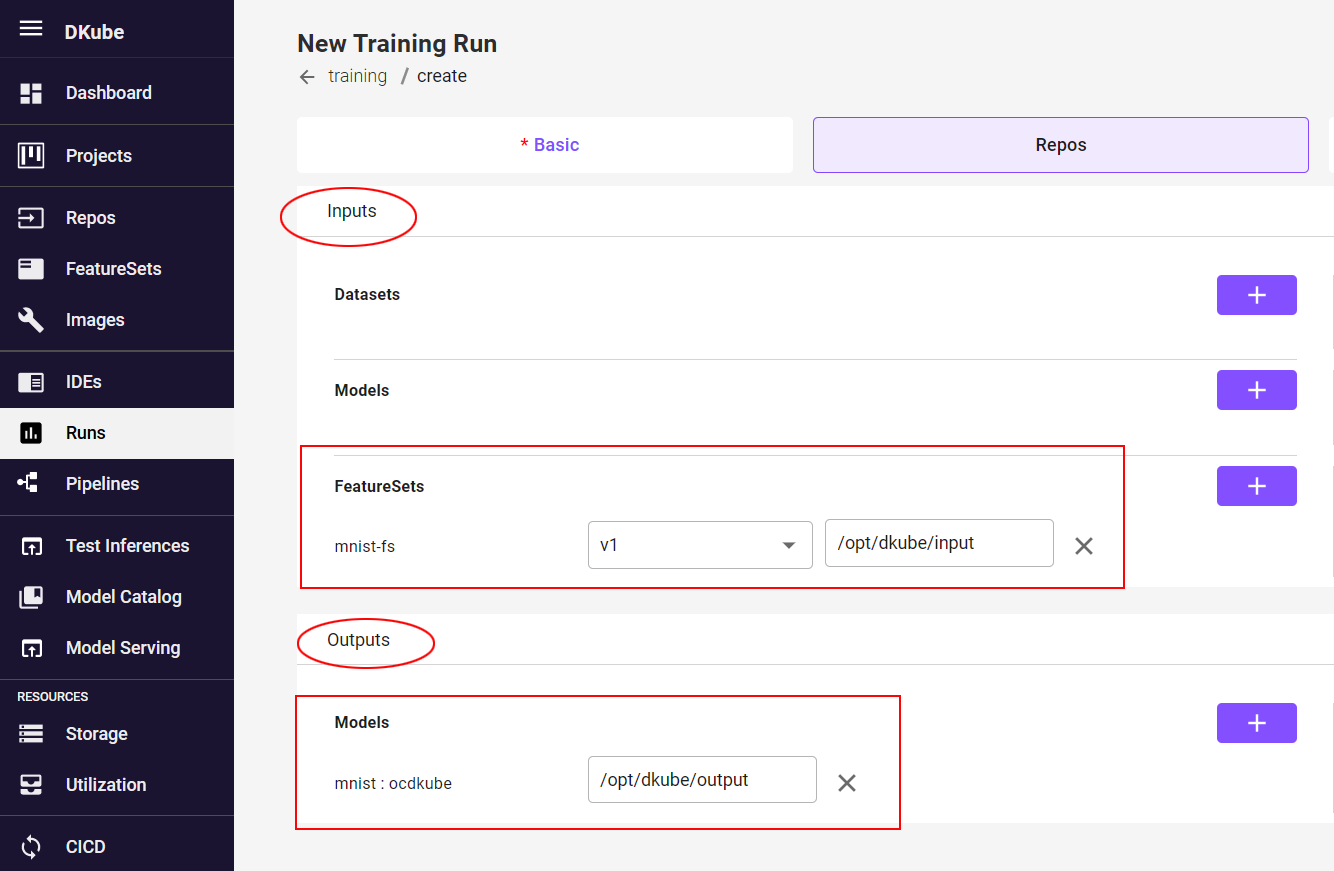

Use the FeatureSet in a Training Run¶

Once a new version of the FeatureSet has been created, based on the Feature Specification, it can be used in a Training Run.

When creating a Training Run (explained in Create Training Run), the input is a FeatureSet, and the output is a Model.

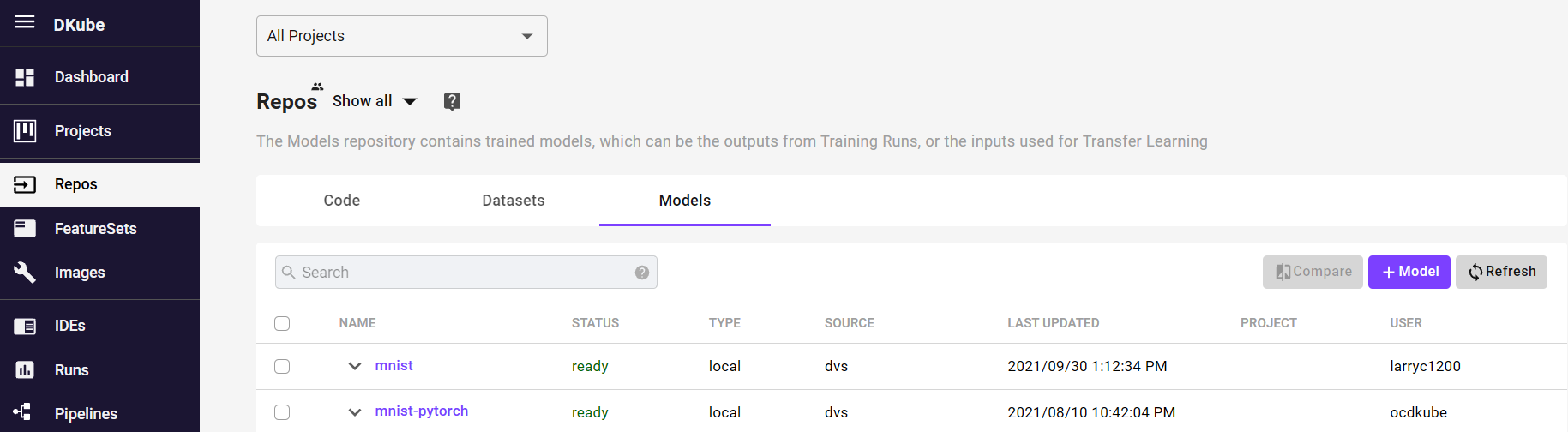

Models¶

The Models Repo contains all of the models that are available within DKube. Models are added to the DKube repo in the following ways:

Manually added for use as an input for a run that includes transfer learning with or without versioning capability, as explained in Versioning

Manually added as a placeholder for use as a versioned output

Created as an output from a training run

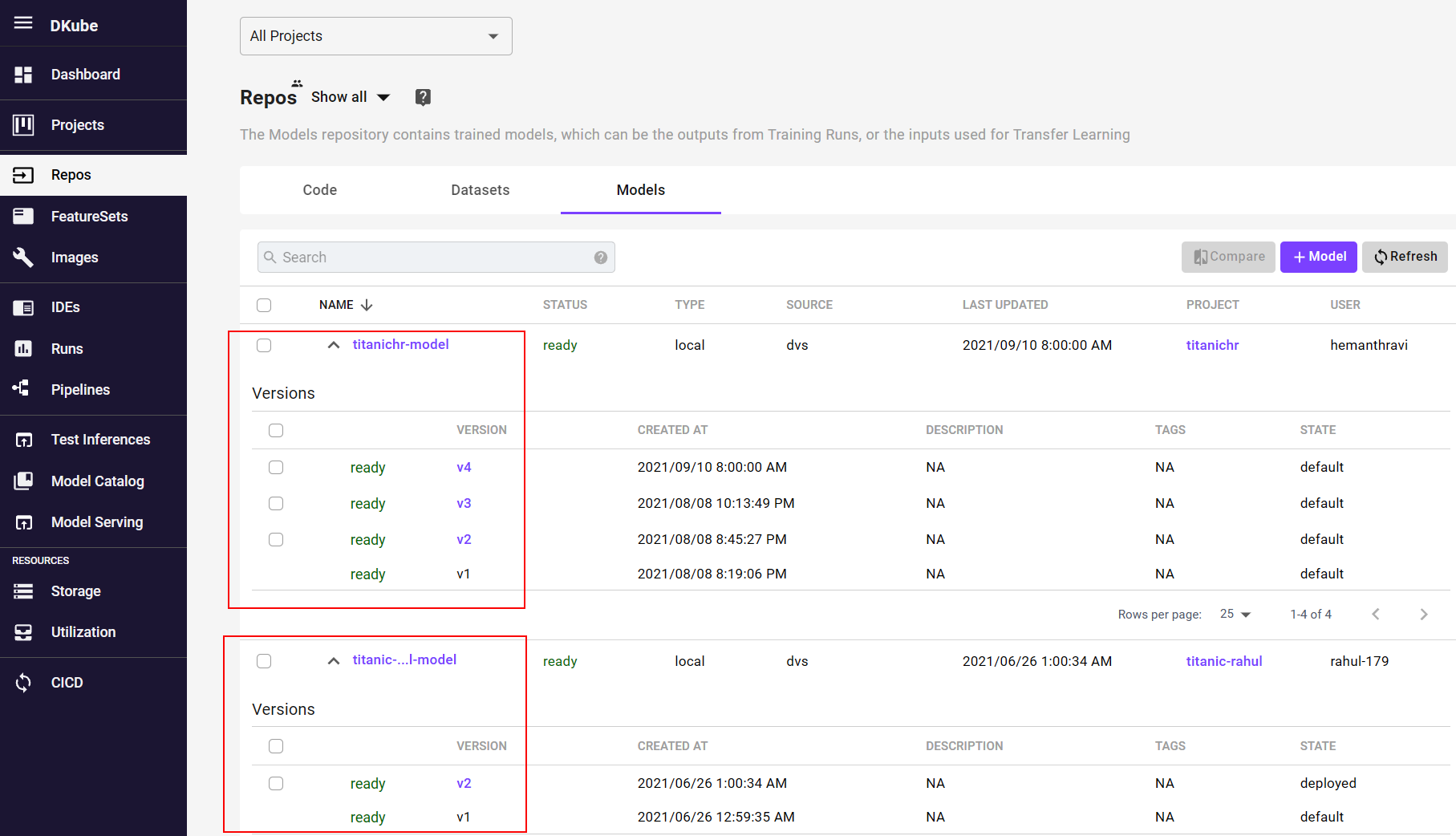

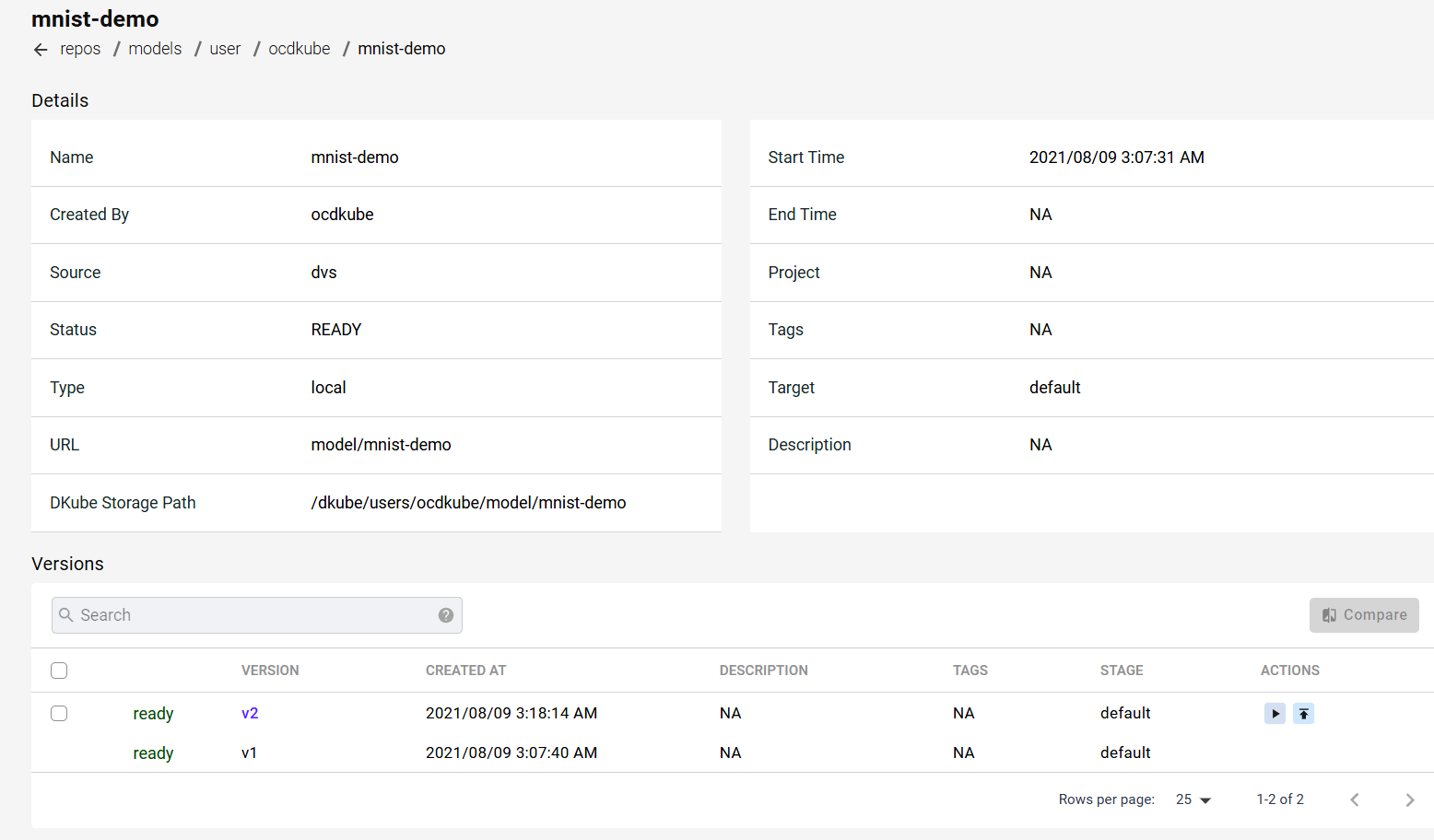

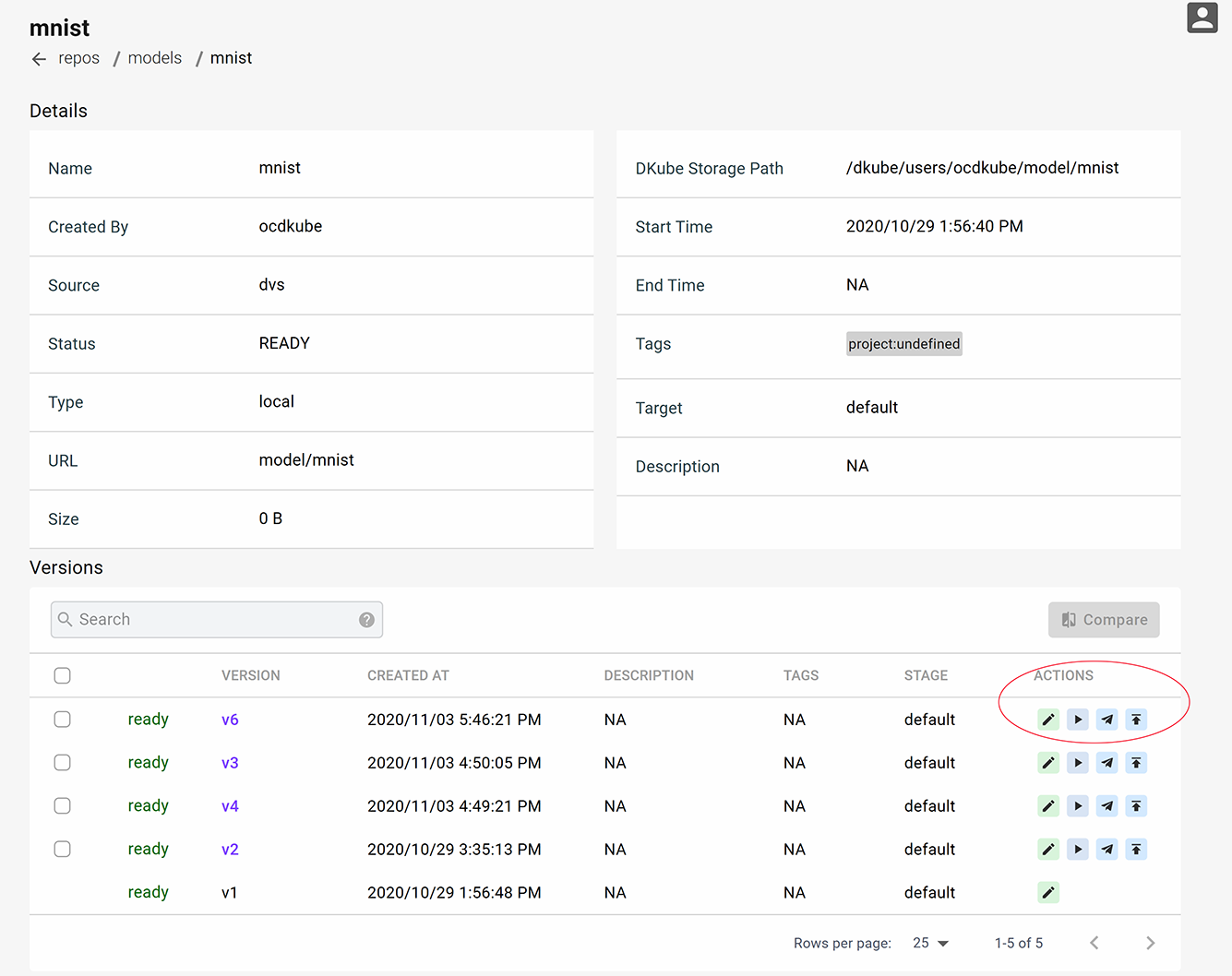

The versions of each model can be viewed by selecting the expansion icon to the left of the Model name. This allows direct access to the version details, and for versions of different models to be compared.

Model Details¶

Selecting the Model will call up a screen which provides more details. This includes the information used to create the model and a list of versions. See section Versioning to understand how Model versions are created.

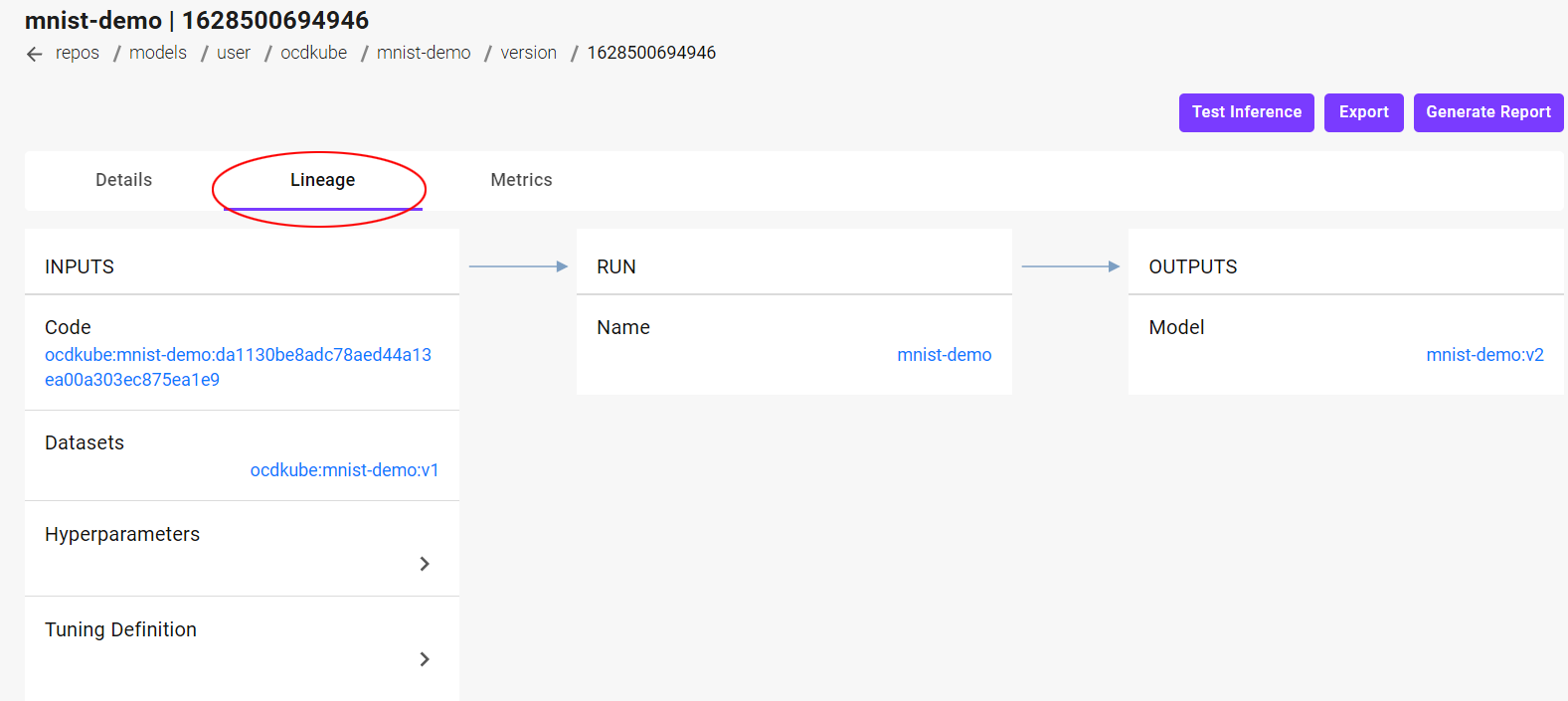

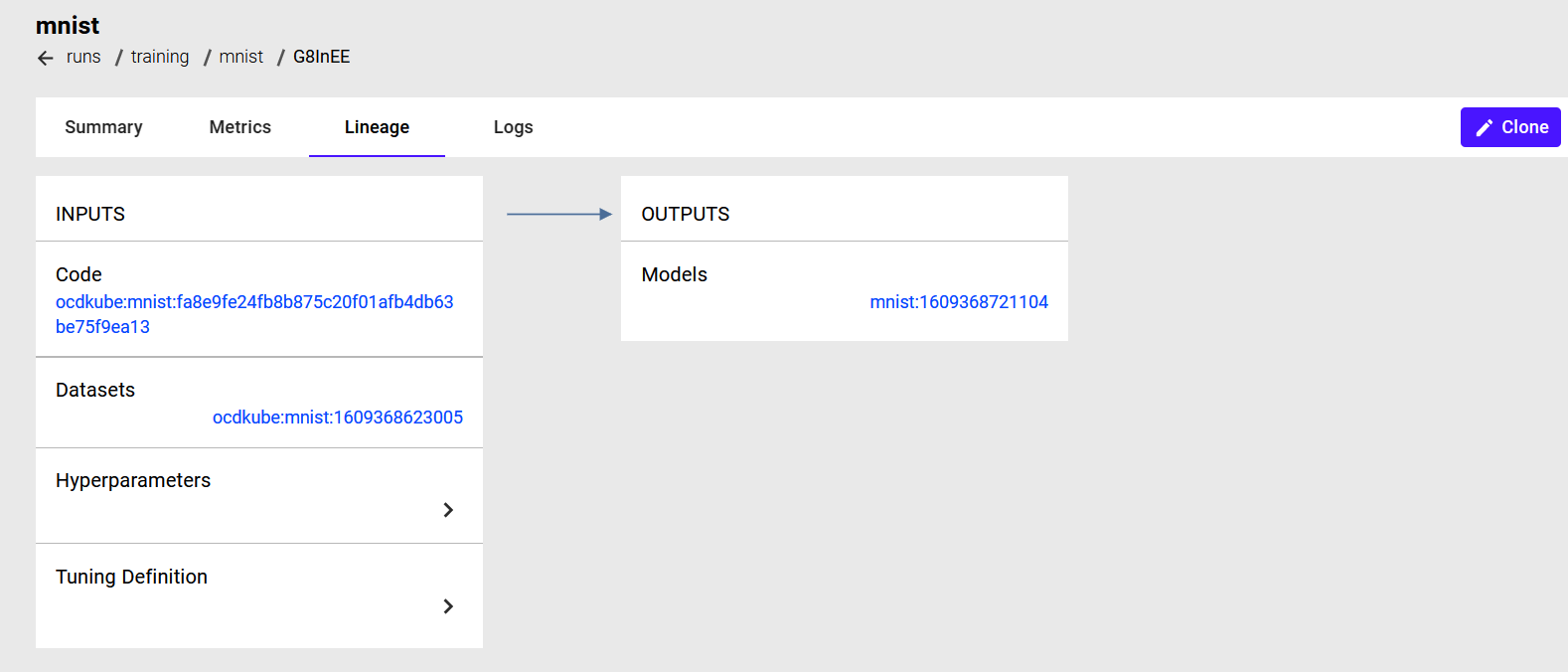

Model Version Lineage¶

More detail on a specific model version can be obtained by selecting that version on the detail page. The complete Model lineage can be viewed by selecting the “Lineage” tab on the Model Version Details screen. This provides the complete set of inputs that were used to create the Model.

Add a Model¶

Models are added manually for 2 different purposes:

The Model is going to be used as an input to a Run for transfer learning, where a partially trained model is further trained

The Model will become an output from a Training Run

The screens and fields for adding a Model are the same as for adding a Dataset, described at Add a Dataset

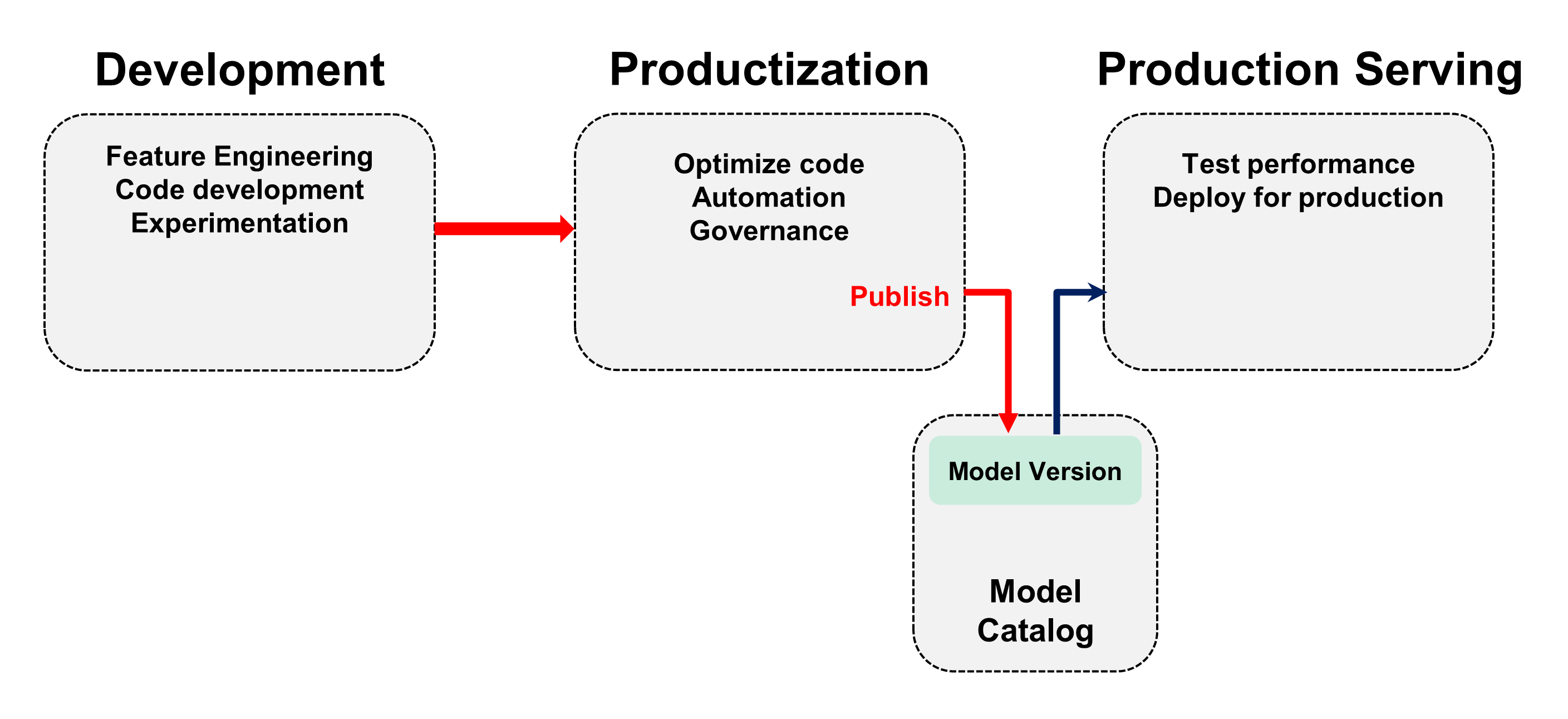

Model Workflow¶

The overall workflow of a Model is described in section MLOps Concepts. A diagram of the flow is shown here. This describes the expected stages that a Model goes through from creation to production. This section provides the details of this workflow within DKube.

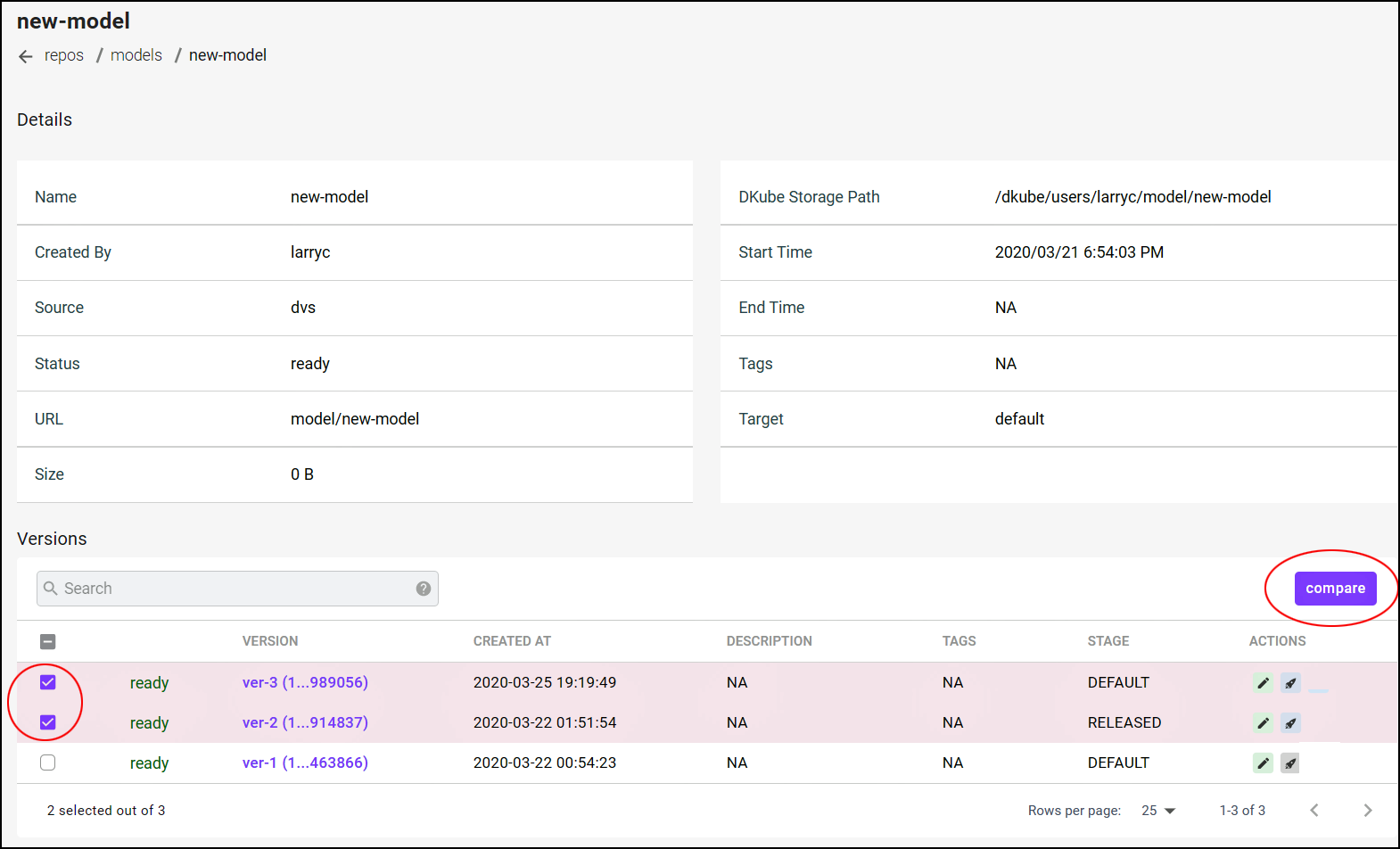

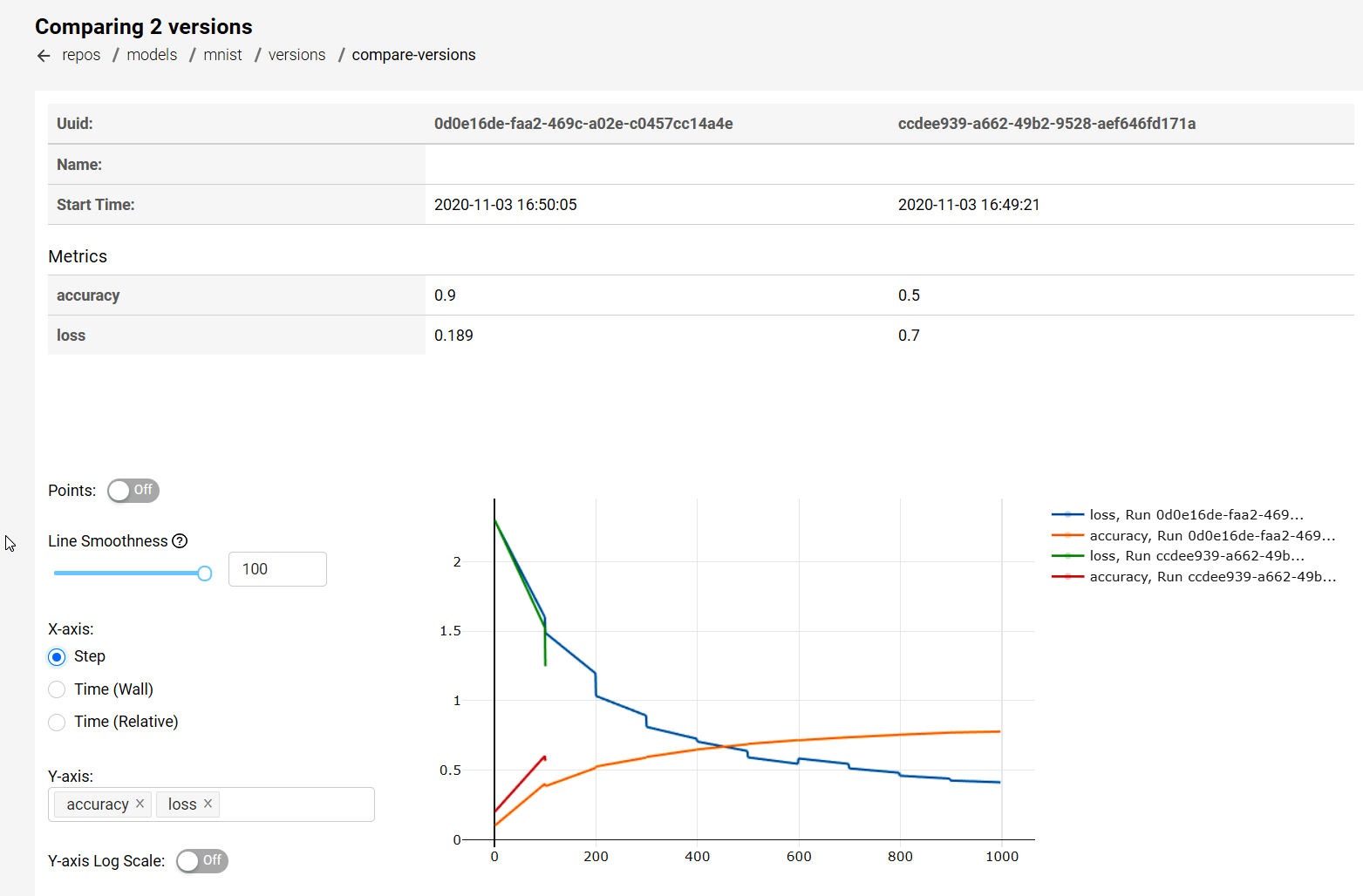

Compare Models¶

Model versions can be compared from:

The main model screen

The detailed model screen

To perform a comparison, the models to be compared should be selected, and the “Compare” button should be used. This will bring up a window with the comparison. The compare function is used by the Data Scientist and ML Engineer during their development.

Model Actions¶

Models can have a variety of different actions performed on them from the details screen. The actions depend upon the User role as described at DKube Roles & Workflow.

Edit Model¶

The description and tag can be modified using the “Edit” button.

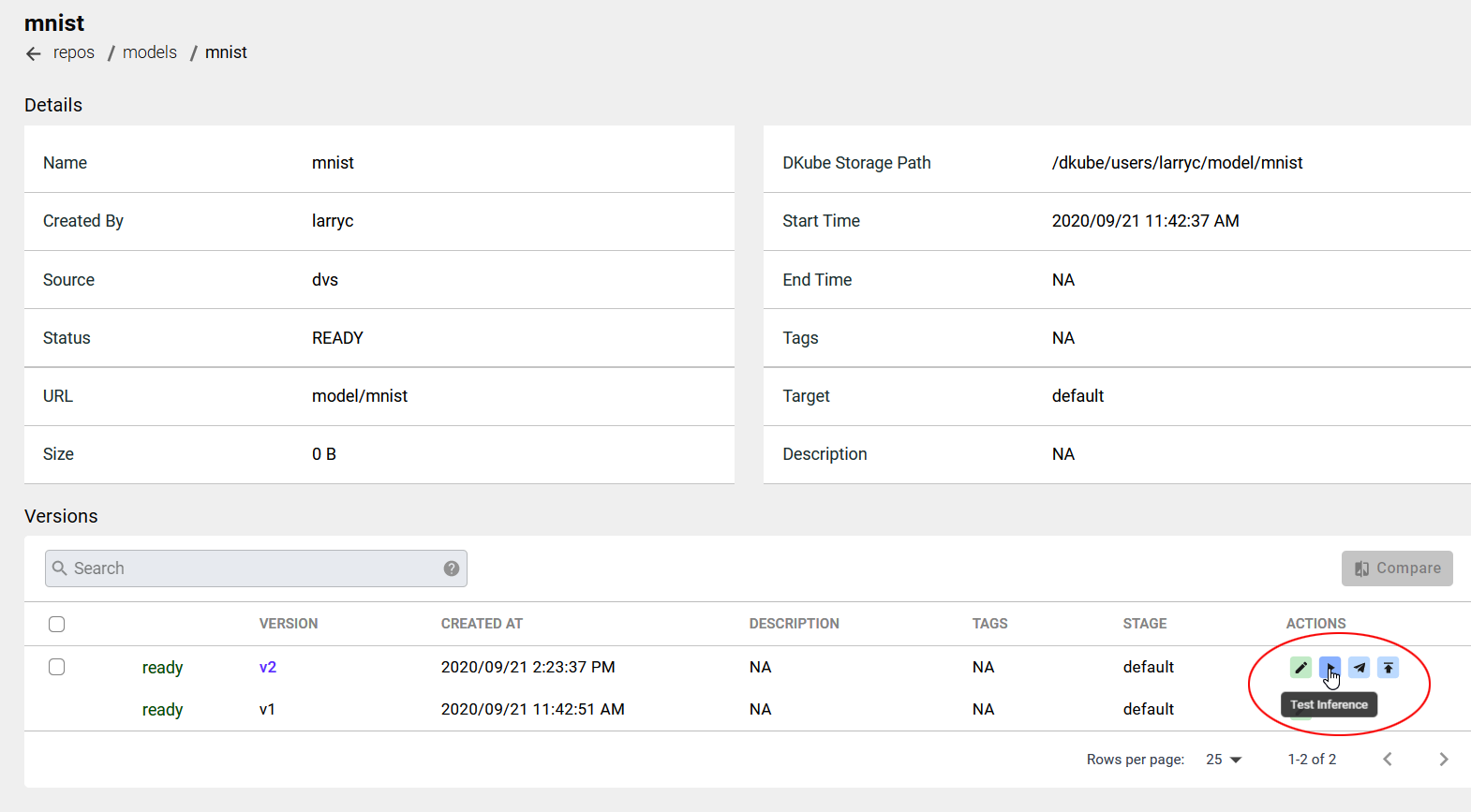

Test Model¶

A model can be deployed on the local training cluster, which will expose an API that can be used for testing the model using inference input. One Convergence provides a test inference application for its example models. For other models, the user would need to create a custom inference application that calls the serving API.

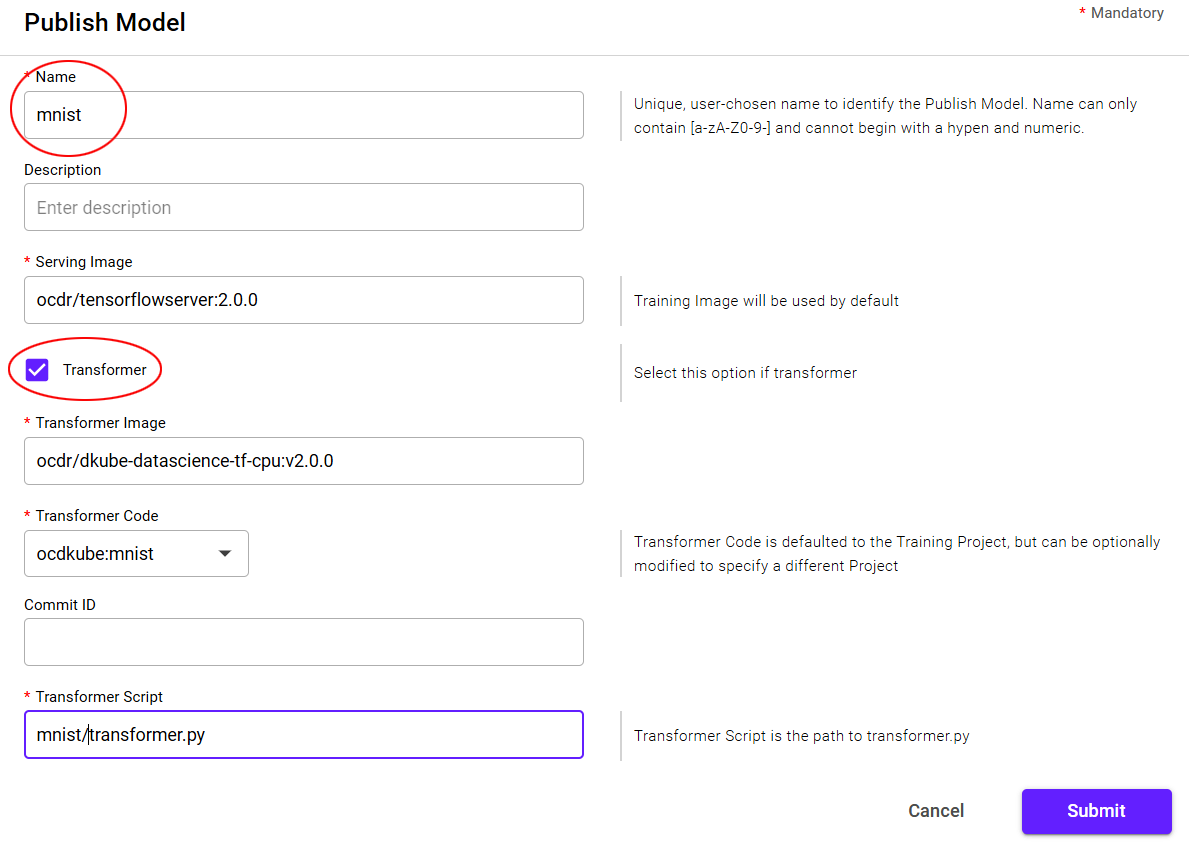

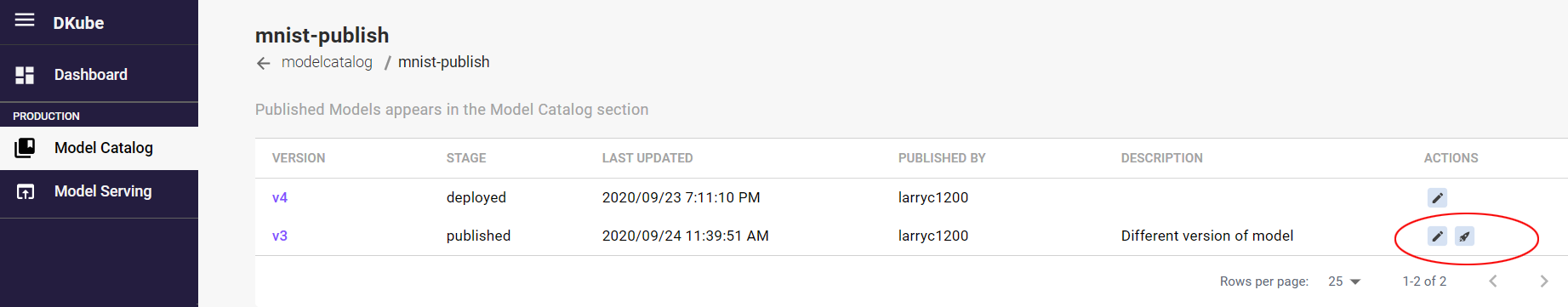

Publish Model¶

The ML Engineer will optimize, automate, and productize the model using larger datasets and other configurations and parameters that are necessary.

The Model that the ML Engineer believes is the best fit to the goal is “Published”.

Publishing a Model copies it to the Model Catalog, which makes it available to the Production Engineer for testing and eventual Deployment to a production server.

A Model version is published from the Model details page by selecting the Publish button on the far right. This will initiate a popup menu to fill in the publishing details.

| Field | Value |
|---|---|
| Name | User-chosen name for the Test Inference |
| Description | Optional user-chosen name to provide more details for the instance |
| Serving Image | Defaults to the training image, but a different image can be used if required |
| Transformer | Select if the inference requires preprocessing or postprocessing |
| Transformer Image | Image used for the transformer code |
| Transformer Code | Defaults to the training code repo, but a different repo can be used if required |
| Transformer Script | Program used for the Transformer |

Deploy Model¶

A Model is Deployed by the Production Engineer from the Model Catalog screen, as described in Production Engineer.

IDEs¶

DKube supports JupyterLab and RStudio. An IDE instance can include the model and dataset information, as well as the hyperparameters for the model run.

The status messages are described in section Status Field of IDEs & Runs

TensorBoard¶

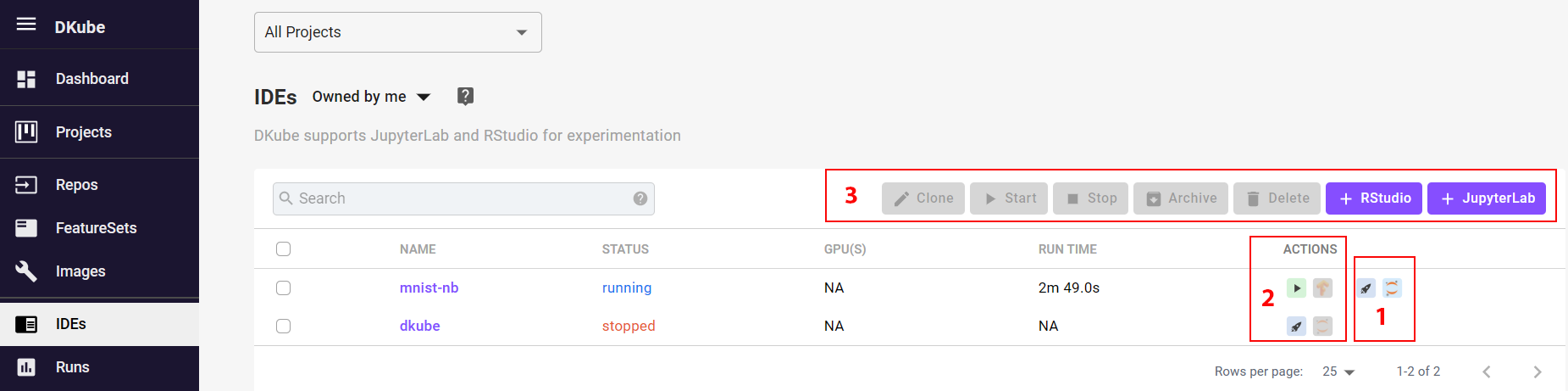

TensorBoard can be accessed from the Notebook screen.

Note

When an IDE instance is created, TensorBoard is in the “stopped” state. In order to use it, TensorBoard must be started by selecting the play icon (item 2 in the screenshot above).

Note

It can take several minutes for TensorBoard to be active after being started

Note

It is good practice to stop TensorBoard instances if they are not going to be used in order to conserve system resources

IDE Actions¶

There are actions that can be performed on an IDE instance (item 1 in the screenshot above).

A JupyterLab or RStudio instance can be started

A Training Run can be submitted based on the current configuration and parameters in the IDE.

In addition, there are actions that can be performed that are selected from the icons above the list of instances (item 3 in the screenshot). For these actions, the instance checkbox is selected, and the action is performed by choosing the appropriate icon.

An instance can be stopped or started

Instances can be deleted or archived (see Delete and Archive)

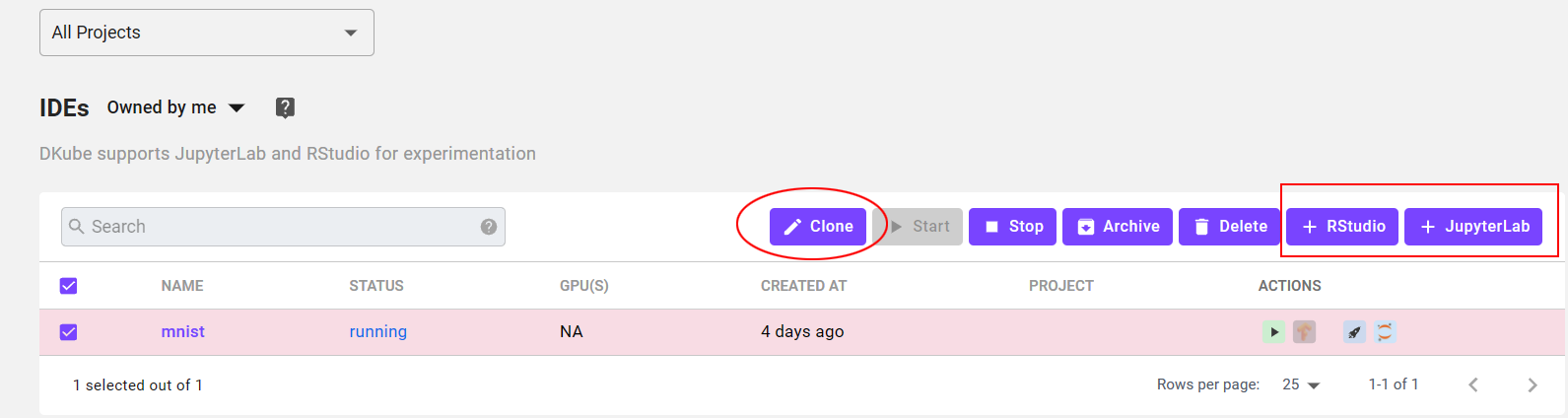

A new instance can be created by either cloning it (clone icon), or starting from a blank configuration (+ RStudio or + JupyterLab icon)

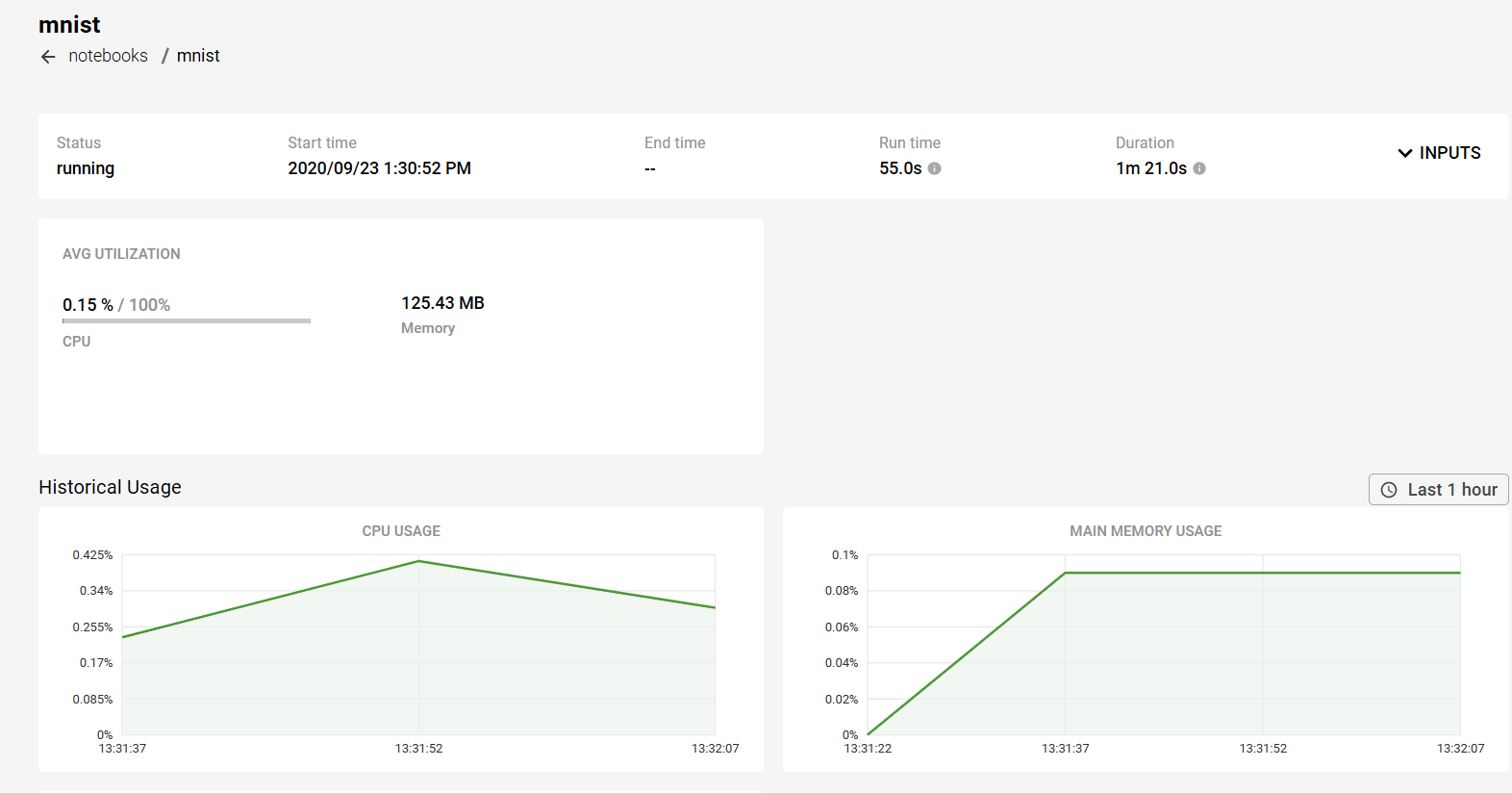

IDE Details¶

More information can be obtained on the Notebook by selecting the name. This will open a detailed window.

Create IDE¶

There are 2 methods to create an IDE instance:

Create a new JupyterLab or RStudio instance by selecting the “+ <Instance Type>” button at the top right-hand side of the screen, and fill in the fields manually

This is typically done for the first instance, since there is nothing to clone

Clone an instance from an existing instance

This will open the same new submission dialog screen, but the fields are pre-loaded from the existing instance. This is convenient when a new instance will have only a few different fields, such as hyperparameters, from an existing one.

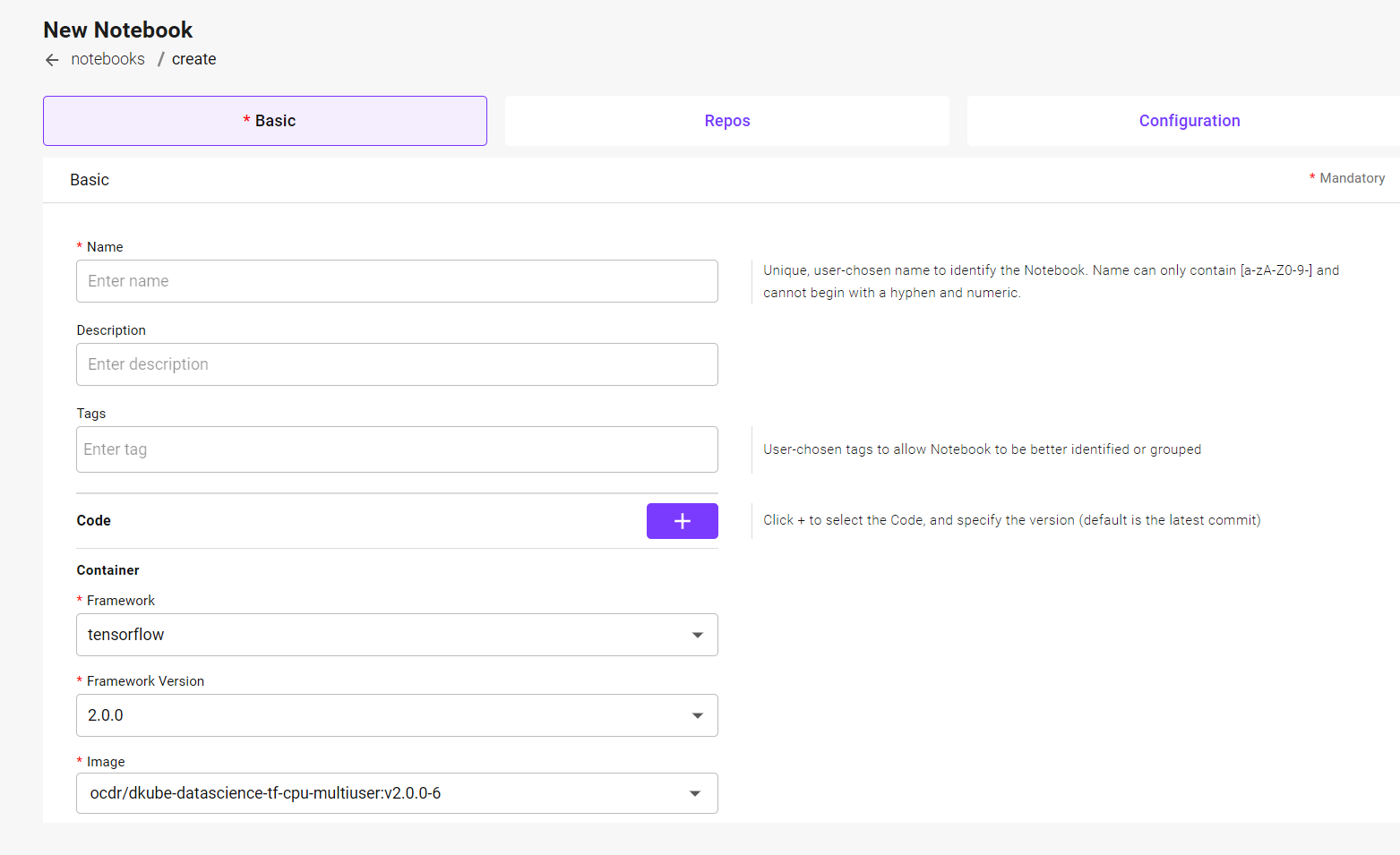

In both cases, the new instance submission screen will appear. Once the fields have been entered or changed, select “Submit”

Note

The IDE will be created in the Project that is selected when the IDE is created. If “All Projects” is selected, it will not be associated with any Project.

There are 3 sections that provide input to the new instance.

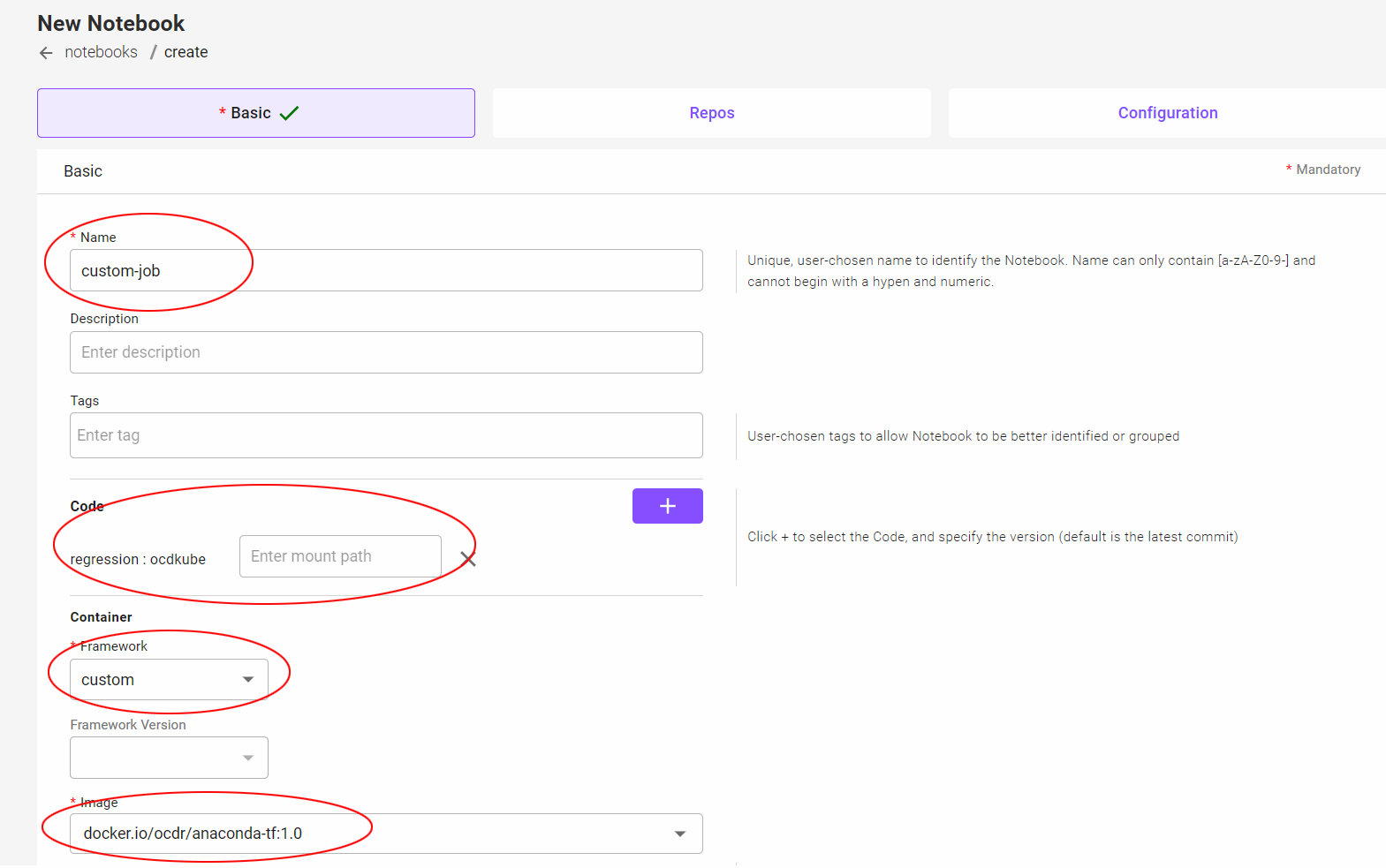

The Basic tab allows the selection of:

The name of the instance and any other pertinent information

The program code

The framework and version

The docker image to use: either the standard DKube image or your own custom image

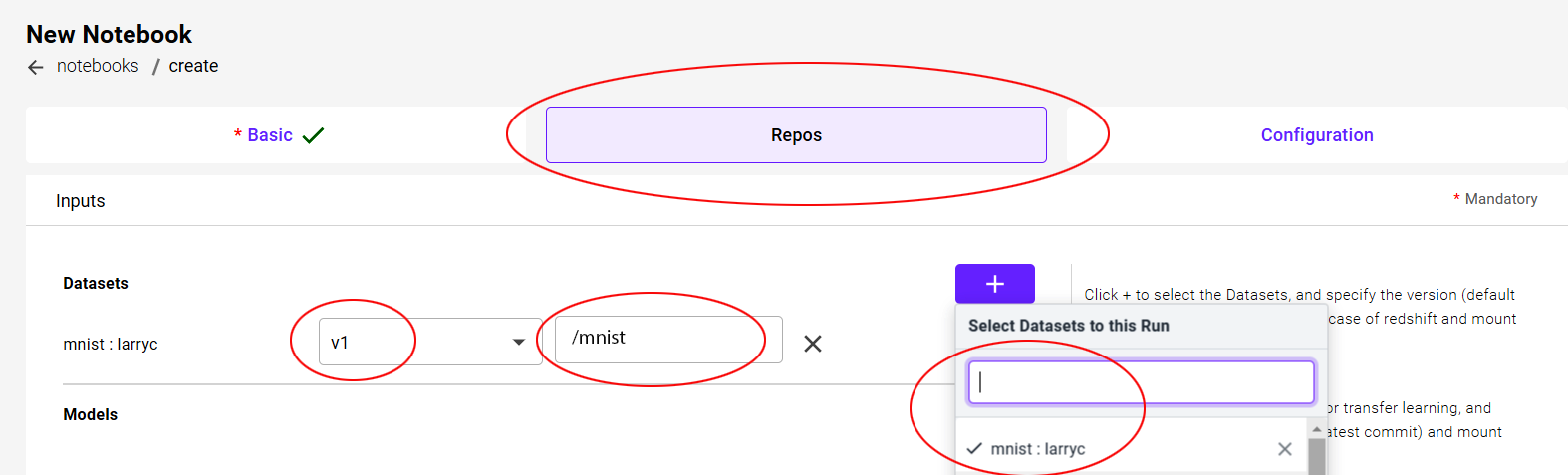

The Repo tab selects the input datafiles required

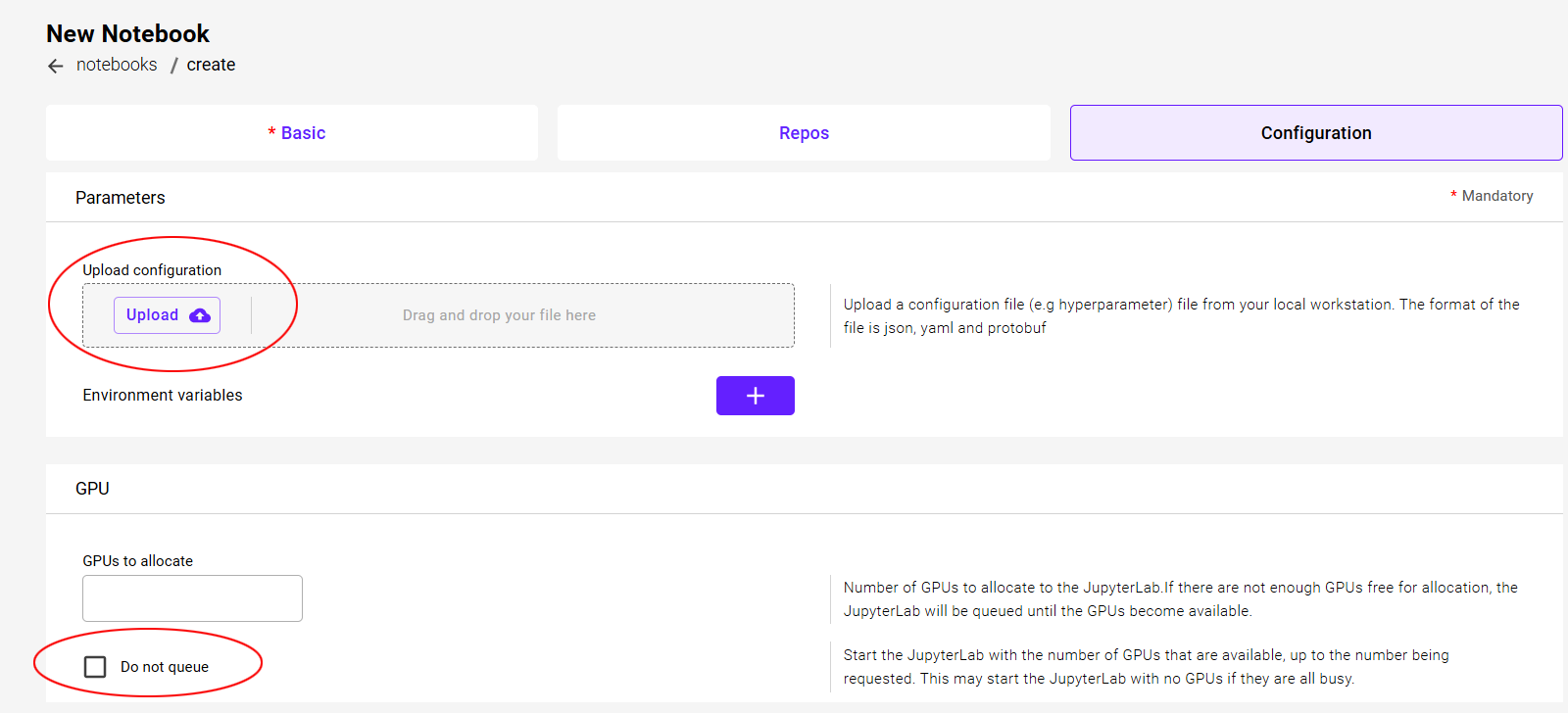

The Configuration tab selects:

Inputs related to configuration files & hyperparameters

GPU requests

Note

It is not required to make changes to all of the tabs. There are some mandatory fields required, which are highlighted on the screen, but once those have been filled in the instance can be created through the Submit button. The tabs can be selected directly, or the user can go back and forth using the navigation buttons at the bottom of the screen.

Note

The first Run or instance load will take extra time to start due to the image being pulled prior to initiating the task. The message might be “Starting” or “Waiting for GPUs”. It will not happen after the first run of a particular framework version.

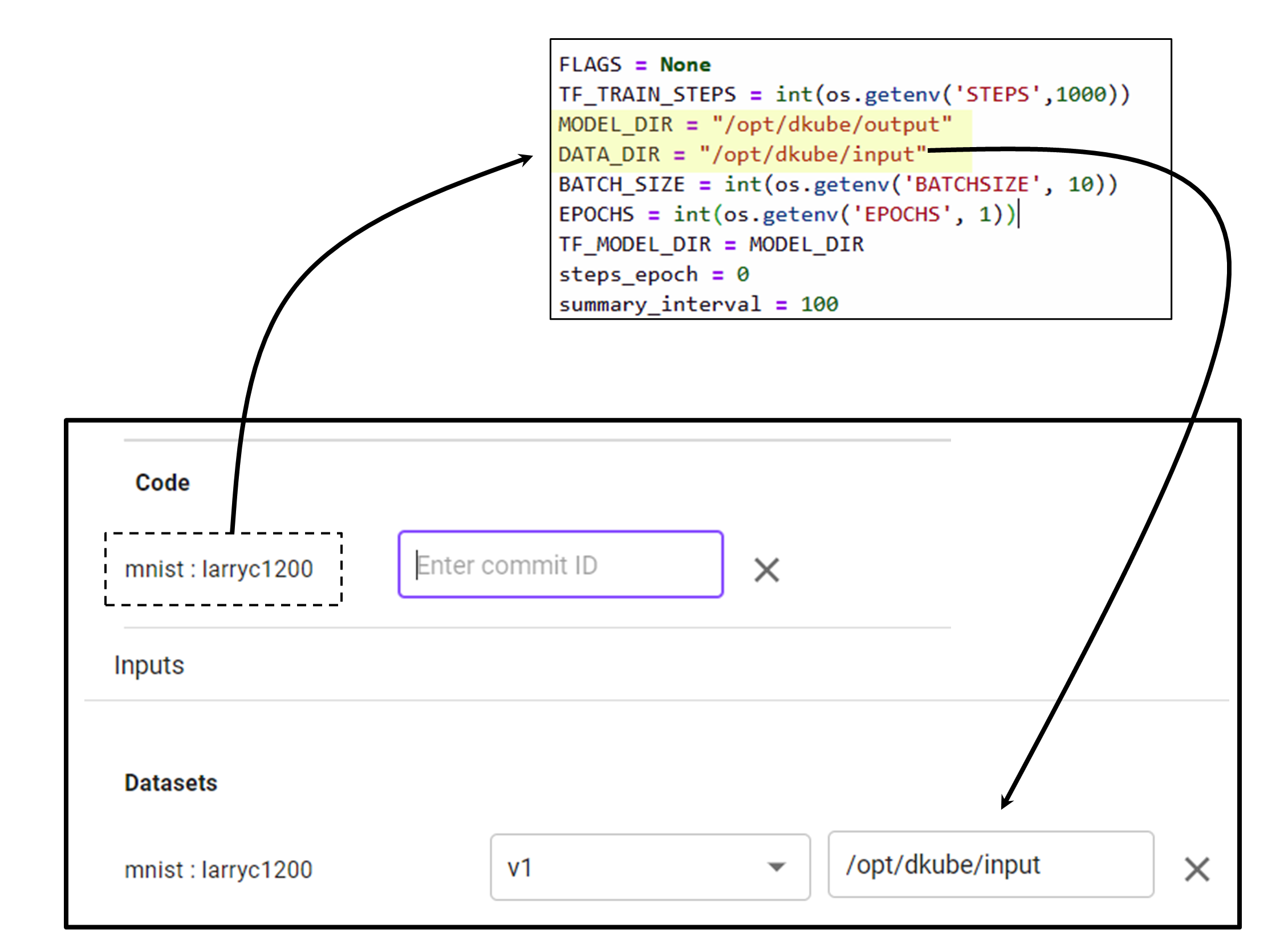

File Paths for Datasets and Models¶

The Dataset & Model repos that are added as part of the submission are saved as described at File Paths

Basic Submission Screen¶

| Field | Value |
|---|---|
| Name | Unique user-chosen identification |
| Description | Free-form user-chosen text to provide details |
| Tags | Optional, user-chosen detailed field to allow grouping or later identification |
| Code | Project code repo |
| Framework | Framework type |
| Framework Version | Framework version |
| Image | Docker image to use - this can be left at the default, or a custom image can be selected |

Code Repo¶

The code is uploaded into the local DKube storage and used for the IDE.

Important

The latest version of the Project code will always be used, even if a specific commit ID is filled in. The commit ID will be ignored.

DKube has built-in support for TensorFlow, PyTorch, and Scikit Learn.

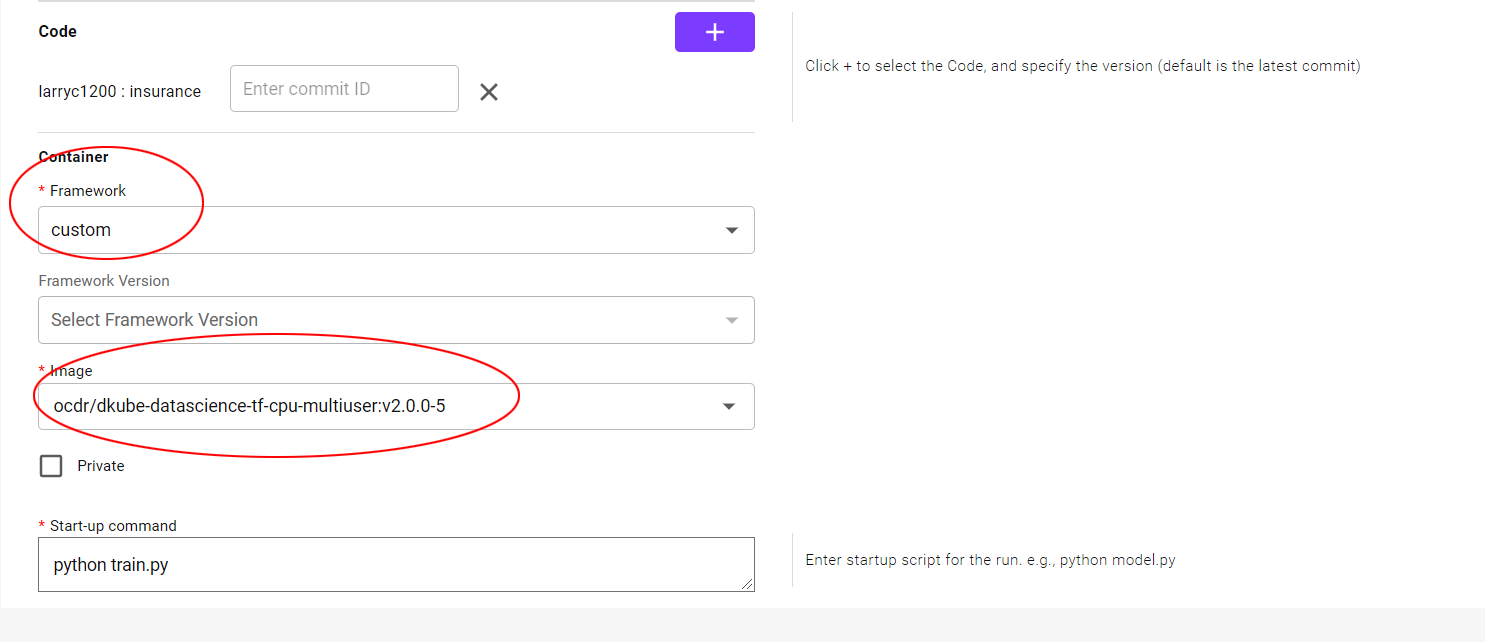

Custom Containers¶

Custom containers are supported to extend the capabilities of DKube. In order to use a custom container within DKube, select “Custom” from the Framework dropdown menu. This will provide more options.

Enter the image location in the field labeled Docker Image URL in the format registry/<repo>/<image>:<tag>

If the image is in a private registry, enable the Private option, and fill in the username and password
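As a rough illustration of the image location format described above, the following Python sketch splits a string of the form registry/<repo>/<image>:<tag> into its parts before it is pasted into the Docker Image URL field. The function name `parse_image_url` and the example image are hypothetical, and the pattern only covers the simple form shown in this section; real registries accept more variations.

```python
import re

# Pattern for the documented form registry/<repo>/<image>:<tag>.
# Illustrative only: digests and nested repo paths are not handled.
IMAGE_PATTERN = re.compile(
    r"^(?P<registry>[^/\s]+)/(?P<repo>[^/\s]+)/(?P<image>[^/:\s]+):(?P<tag>[^/:\s]+)$"
)


def parse_image_url(url: str) -> dict:
    """Split an image location into registry, repo, image and tag."""
    match = IMAGE_PATTERN.match(url)
    if match is None:
        raise ValueError(f"expected registry/<repo>/<image>:<tag>, got {url!r}")
    return match.groupdict()


# Hypothetical image name, used only for demonstration.
parts = parse_image_url("docker.io/myteam/trainer:1.0")
print(parts)
```

A check like this can catch a missing tag or repo segment before the instance is submitted.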

Repo Submission Screen¶

The Repos submission screen selects the repositories required for training or experimentation:

Dataset repo(s)

Model repo(s) for use in transfer learning

FeatureSets repo(s)

A repo is chosen by selecting the “+” beside the repo type, and choosing the repo(s) from the list provided. The repo is required to be made available to DKube through the process described in Repos

The version of the repo can also be chosen

A mount path should be selected for the repo, which should correspond to the expected path in the Project code. This is described in more detail at Mount Path

Mount Path for Datasets and Models¶

The Dataset, FeatureSet, & Model repos that are added as part of the submission contain a field called the “Mount Path”. This is the path that is used by the code to access the repo. This is described in more detail at File Paths
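The following Python sketch shows one way program code might read files from a repo through its mount path. It is only an illustration: the helper `list_repo_files` is hypothetical, and a temporary directory stands in for the mount path that would be entered on the submission screen.

```python
import os
import tempfile


def list_repo_files(mount_path: str) -> list:
    """Return the sorted relative paths of every file under the mount path."""
    found = []
    for root, _dirs, files in os.walk(mount_path):
        for name in files:
            full_path = os.path.join(root, name)
            found.append(os.path.relpath(full_path, mount_path))
    return sorted(found)


# A temporary directory stands in for the mount path chosen at submission.
with tempfile.TemporaryDirectory() as mount_path:
    os.makedirs(os.path.join(mount_path, "train"))
    open(os.path.join(mount_path, "train", "data.csv"), "w").close()
    files = list_repo_files(mount_path)

print(files)
```

The point of the sketch is that the code only needs to know the mount path, which must match the value entered for the repo.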

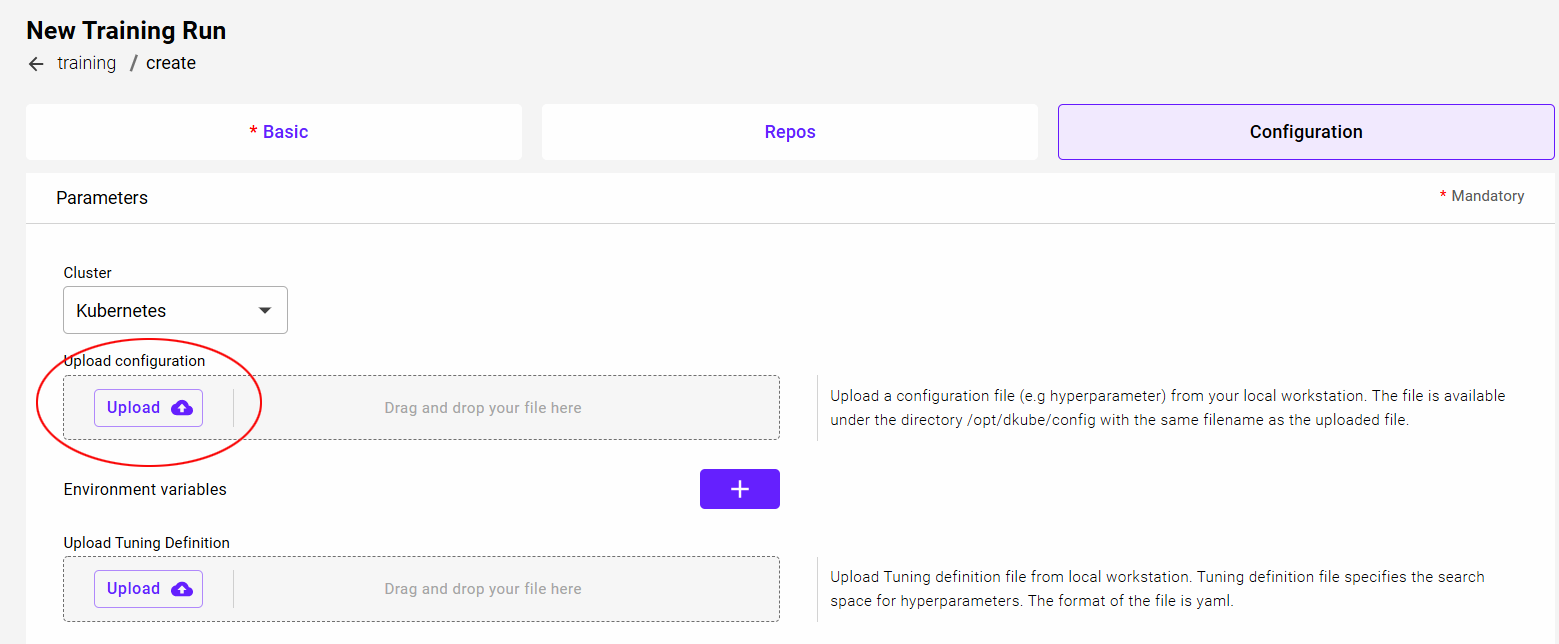

Configuration Screen¶

Configuration File¶

A configuration file can be uploaded and provided to the program. There is no DKube-enforced formatting for this file. It can contain any information that needs to be used during program execution, such as a set of hyperparameters or configuration details. The program needs to be aware of the formatting so that the file can be correctly unpacked during execution.

The file can be used within DKube as described in Configuration File
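Because the file format is left to the program, the unpacking logic lives in the program code. The Python sketch below assumes the author chose JSON. The function `load_config`, the file name, and the keys are all hypothetical, and a temporary file stands in for the uploaded configuration file.

```python
import json
import tempfile
from pathlib import Path


def load_config(path: str) -> dict:
    """Read a JSON configuration file whose layout the program defines."""
    with open(path, "r", encoding="utf-8") as handle:
        return json.load(handle)


# A temporary file stands in for the uploaded configuration file.
with tempfile.TemporaryDirectory() as workdir:
    config_path = Path(workdir) / "config.json"
    config_path.write_text(json.dumps({"epochs": 5, "learning_rate": 0.01}))
    config = load_config(str(config_path))

print(config["epochs"], config["learning_rate"])
```

Any other format (YAML, INI, plain text) would work equally well, as long as the program reads it the same way it was written.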

Hyperparameters¶

The configuration section allows the user to input the hyperparameters for the instance. The use of the hyperparameters is based on the program code. Hyperparameters can be added by selecting the highlighted “+”. This will allow an additional “Key” and “Value”. More parameters can be added by repeated use of this option.
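Since the use of the hyperparameters depends on the program code, the code typically combines the submitted Key and Value pairs with its own defaults. The Python sketch below assumes the pairs reach the program as a dictionary of strings. That delivery mechanism, and the names `coerce` and `merge_hyperparameters`, are assumptions made for illustration.

```python
def coerce(value: str):
    """Convert a string to int or float when possible, else keep the string."""
    for cast in (int, float):
        try:
            return cast(value)
        except ValueError:
            continue
    return value


def merge_hyperparameters(defaults: dict, submitted: dict) -> dict:
    """Overlay submitted Key/Value pairs on the program's default values."""
    merged = dict(defaults)
    for key, value in submitted.items():
        merged[key] = coerce(value)
    return merged


# Hypothetical defaults and submitted values.
defaults = {"epochs": 10, "learning_rate": 0.001, "optimizer": "adam"}
submitted = {"epochs": "20", "learning_rate": "0.01"}
params = merge_hyperparameters(defaults, submitted)
print(params)
```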

GPUs¶

The number of GPUs can be selected for the instance. The GPUs in a Group are shared among all Users in that Group. If there are currently not enough GPUs to satisfy the request, the instance will be queued until enough GPUs are available.

The GPU selection area shows how many GPUs are available in the group. Selecting more GPUs than are available in the group will cause an error.

Note

The screen shows how many GPUs are available in the Group, but these are shared with other instances and users. The actual number of GPUs available when the instance is submitted may be fewer than what is shown.

Just below the GPU selection is a checkbox that allows the instance to start with the number of GPUs that are available upon submission, including none, if all of the GPUs are currently in use. This will guarantee that the instance does not queue.
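The GPU rules in this section can be summarized as a small decision function. The Python sketch below is a simplified model of the behavior described here, not DKube's scheduler; the function name, parameter names, and return values are hypothetical.

```python
def resolve_gpu_request(requested: int, group_total: int, free_now: int,
                        start_with_available: bool = False) -> tuple:
    """Model the outcome of a GPU request as (state, gpus_granted_or_awaited)."""
    if requested > group_total:
        # Selecting more GPUs than the Group has is an error.
        raise ValueError("requested more GPUs than are available in the Group")
    if requested <= free_now:
        return ("start", requested)
    if start_with_available:
        # Checkbox selected: start with whatever is free, possibly zero GPUs.
        return ("start", free_now)
    # Otherwise the instance waits until enough GPUs are free.
    return ("queued", requested)


print(resolve_gpu_request(2, group_total=8, free_now=4))
print(resolve_gpu_request(4, group_total=8, free_now=1))
print(resolve_gpu_request(4, group_total=8, free_now=0, start_with_available=True))
```

The third call shows why the checkbox prevents queuing: the instance starts even when no GPUs are free.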

Delete IDE¶

Select the instance name from the left-hand checkbox

Click the “Delete” icon at the top right-hand side of the screen

Confirm the deletion

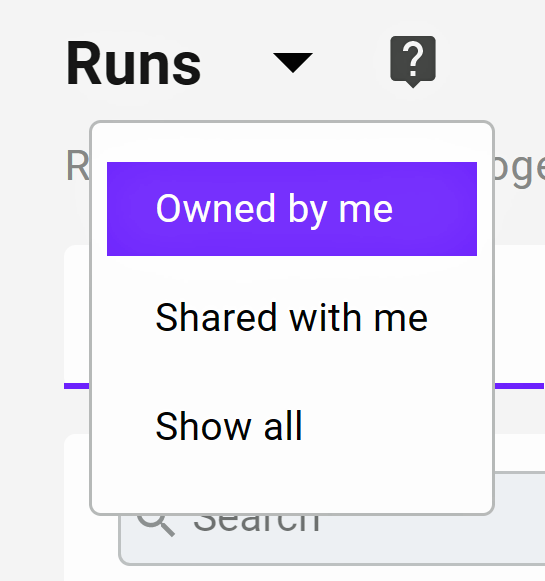

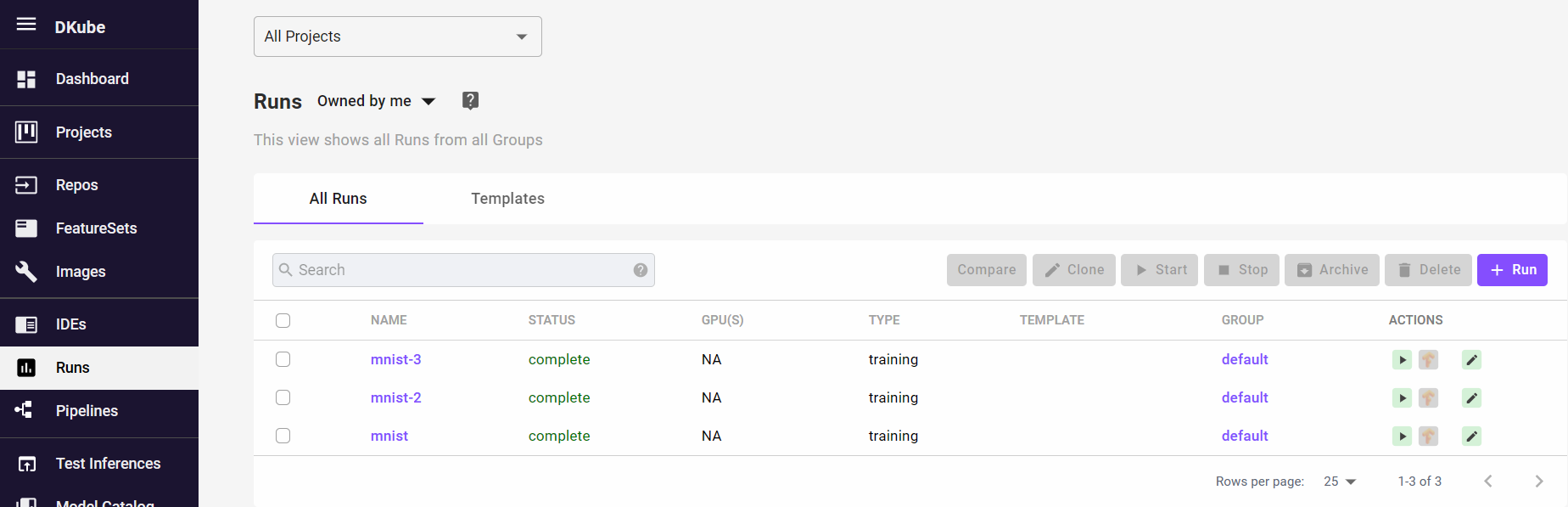

Runs¶

A Run is the execution of code using:

Datasets

Optional FeatureSets (extracted Datasets)

Optional pre-trained models

Hyperparameters

Resources

The status messages are described in section Status Field of IDEs & Runs

The Run screen allows the user to manage training and preprocessing based on the inputs selected. The primary differences between the functions are:

Once the Training Run is complete, it creates a trained Model

When a Preprocessing Run is complete, it creates a new Dataset or FeatureSet

Templates¶

Run Templates are a way to simplify the submission of Runs. They allow many of the fields to be pre-filled, similar to cloning a run from another run. For example, the user may want to do a number of runs with different hyperparameters or resources. A Template can be used to fill in the fields, then the updated hyperparameters or resources can be selected before submitting the new Run.

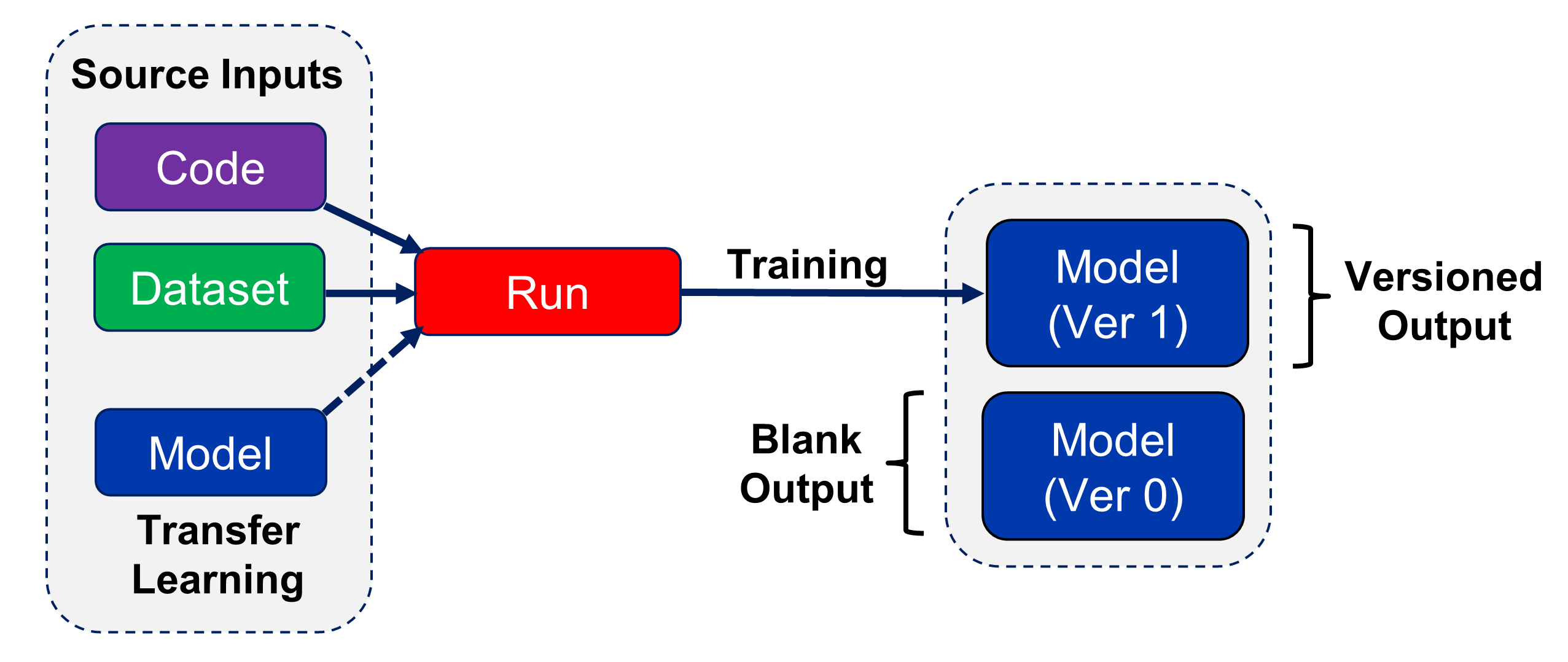

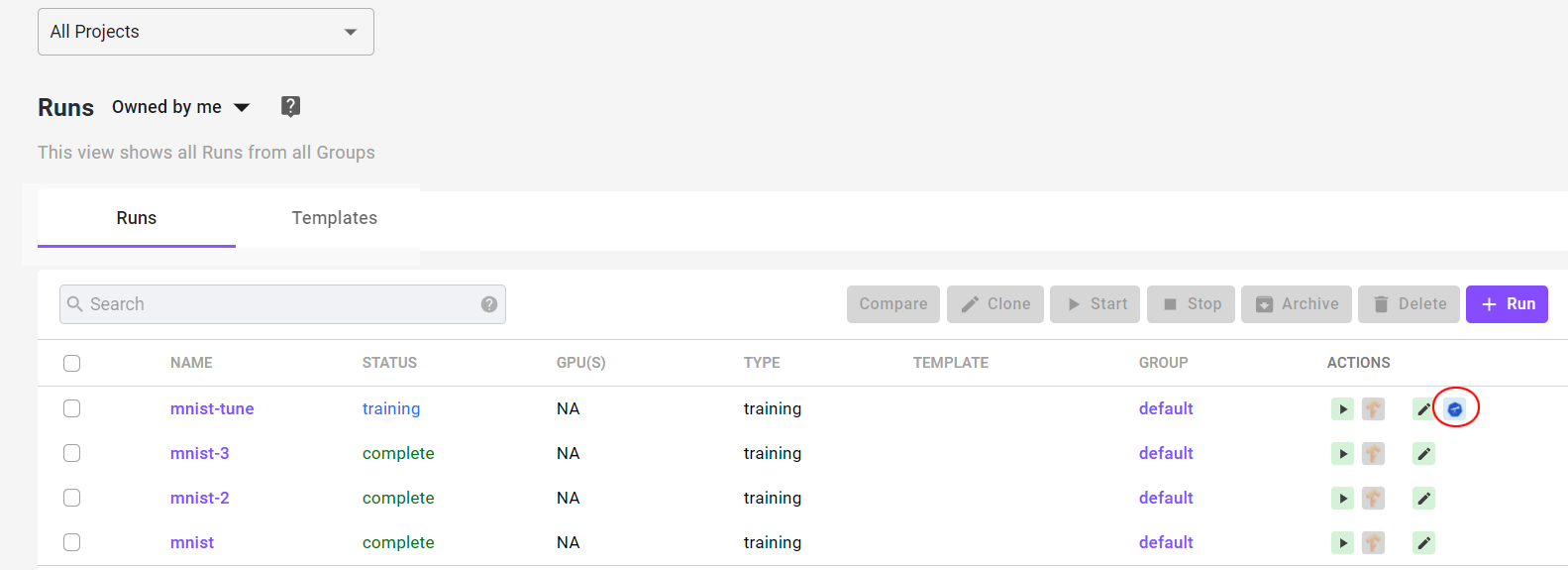

Training Runs¶

Training runs create Models as their output.

TensorBoard¶

TensorBoard can be accessed from the Runs screen.

When a Run instance is created, TensorBoard is in the “stopped” state. In order to use it, TensorBoard must be started by selecting the play icon.

Note

It can take several minutes for TensorBoard to be active after being started

Note

It is good practice to stop TensorBoard instances if they are not going to be used in order to conserve system resources

Training Run Actions¶

There are actions that can be performed on a Training Run instance. For these actions, the Run instance checkbox is selected, and the action is performed by choosing the appropriate icon.

Hyperparameter Optimization¶

DKube supports Katib-based hyperparameter optimization. This enables automated tuning of hyperparameters for a program and dataset, based upon target objectives. An optimization study is initiated by uploading a configuration file during the Training Run submission as described in Configuration Submission Screen

The study initiates a set of trials, which run through the parameters in order to achieve the objectives, as provided in the configuration file. After all of the trial Runs have completed, DKube provides a graph of the trial results, and lists the best hyperparameter combinations for the required objectives.

The optimization study is ready for viewing when the status is shown as “complete”. That indicates that the trials associated with the study are all complete. The output results of the study can be viewed and downloaded by selecting the Katib icon at the far right hand side of the Run line.

As described in section Configuration Submission Screen , an optimization Run is initiated by providing a YAML configuration file in the Hyperparameter Optimization field when submitting a Run.

A study that has been initiated using Hyperparameter Optimization is identified by the Katib icon on the far right.

Selecting the icon opens up a window that shows a graph of the trials, and lists the best trials based on the objectives.

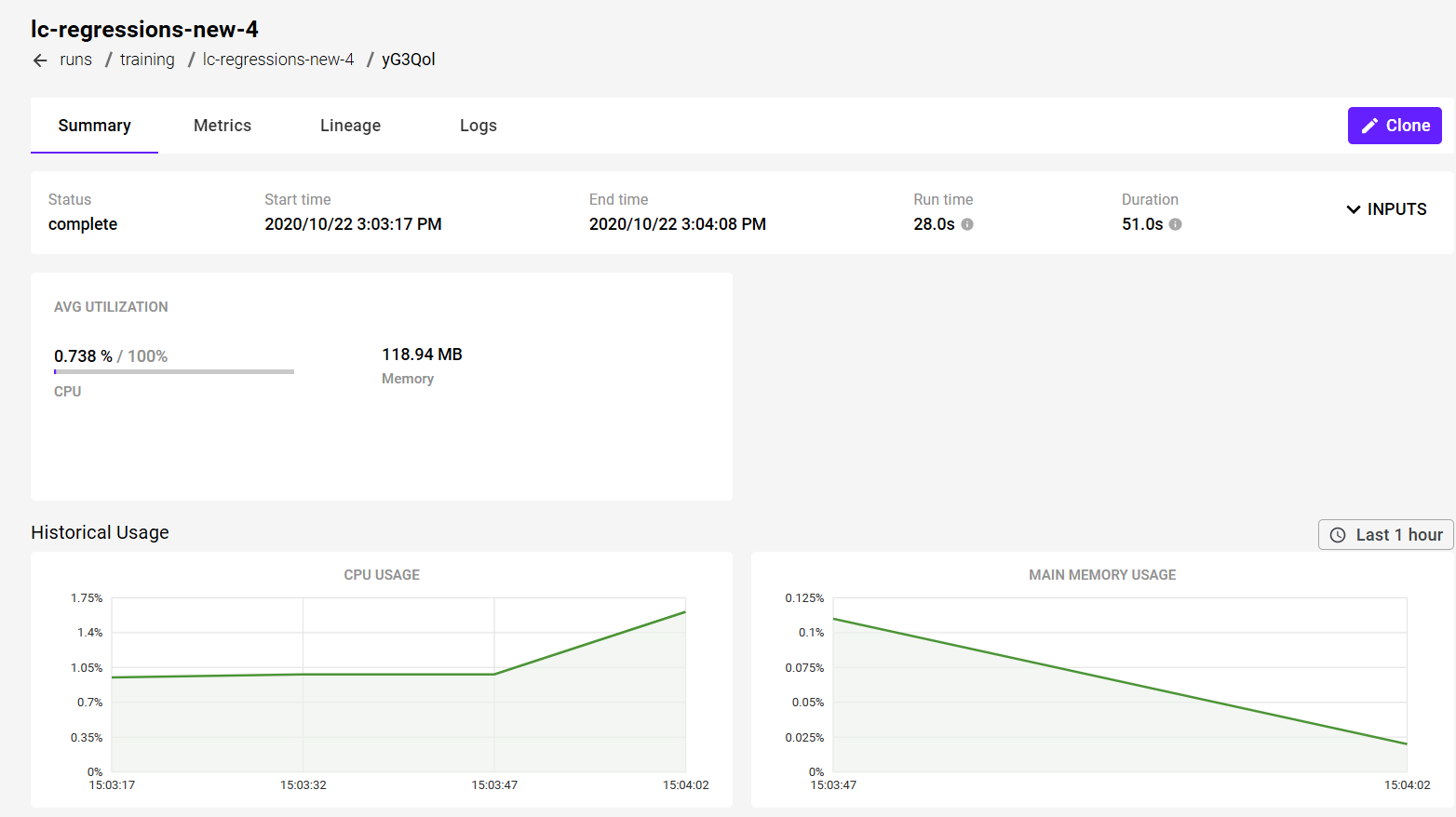

Training Run Details¶

More information can be obtained on the Run by selecting the name. This will open a detailed window.

Lineage¶

DKube provides the complete set of inputs that are used to create a Model from a Training Run. The overall concept is described in section Tracking and Lineage . The lineage is accessed from the details screen for a Run.

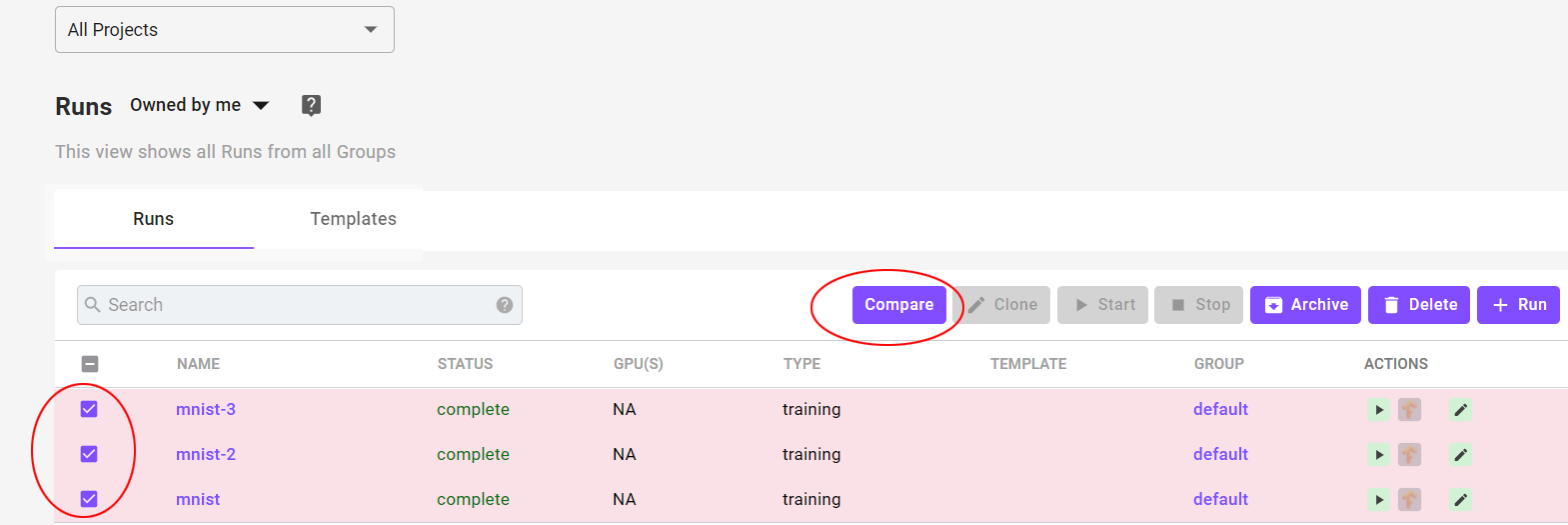

Compare Runs¶

Training runs can be compared in a similar manner as Model versions. This is accomplished by selecting the Runs to compare.

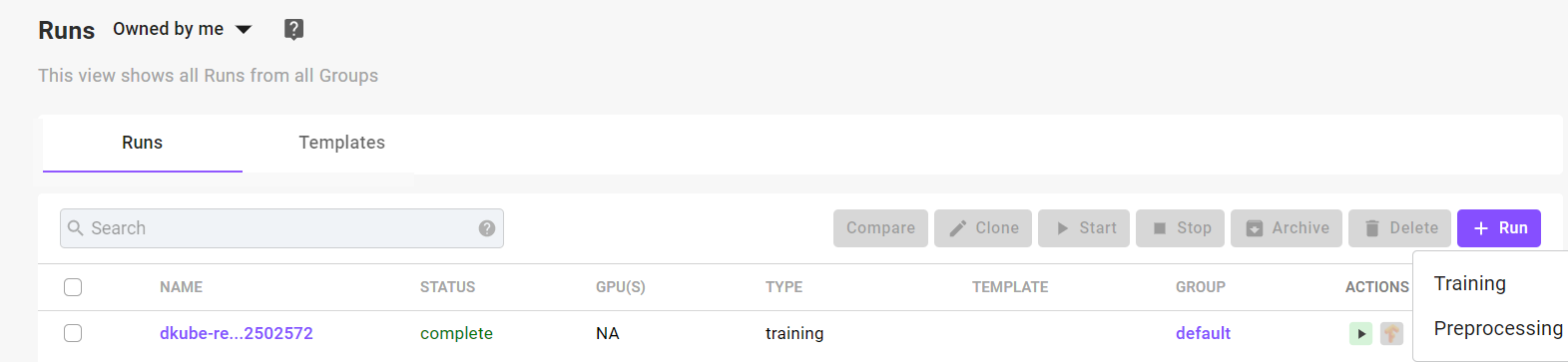

Create Training Run¶

A Training Run can be created in the following ways:

Create a Run from a JupyterLab or RStudio instance, using the “Create Run” icon on the right-hand side of the selected instance

This will pre-load the parameters from the instance

Create a new Training Run by selecting the “+ Run” button at the top right-hand side of the screen, and fill in the fields manually

The type of run, training or preprocessing, is chosen

A Run can be created from a Template, described at Templates, which pre-fills many of the fields

Clone a Training Run from an existing instance

This will open the same new Training Run dialog screen, but most of the fields are pre-loaded from the existing Run. This is convenient when a new Run will differ from the existing Run in only a few fields, such as hyperparameters.

A Run is automatically created as part of a Pipeline

Note

The Run will be created in the Project that is selected when the Run is created. If “All Projects” is selected, it will not be associated with any Project.

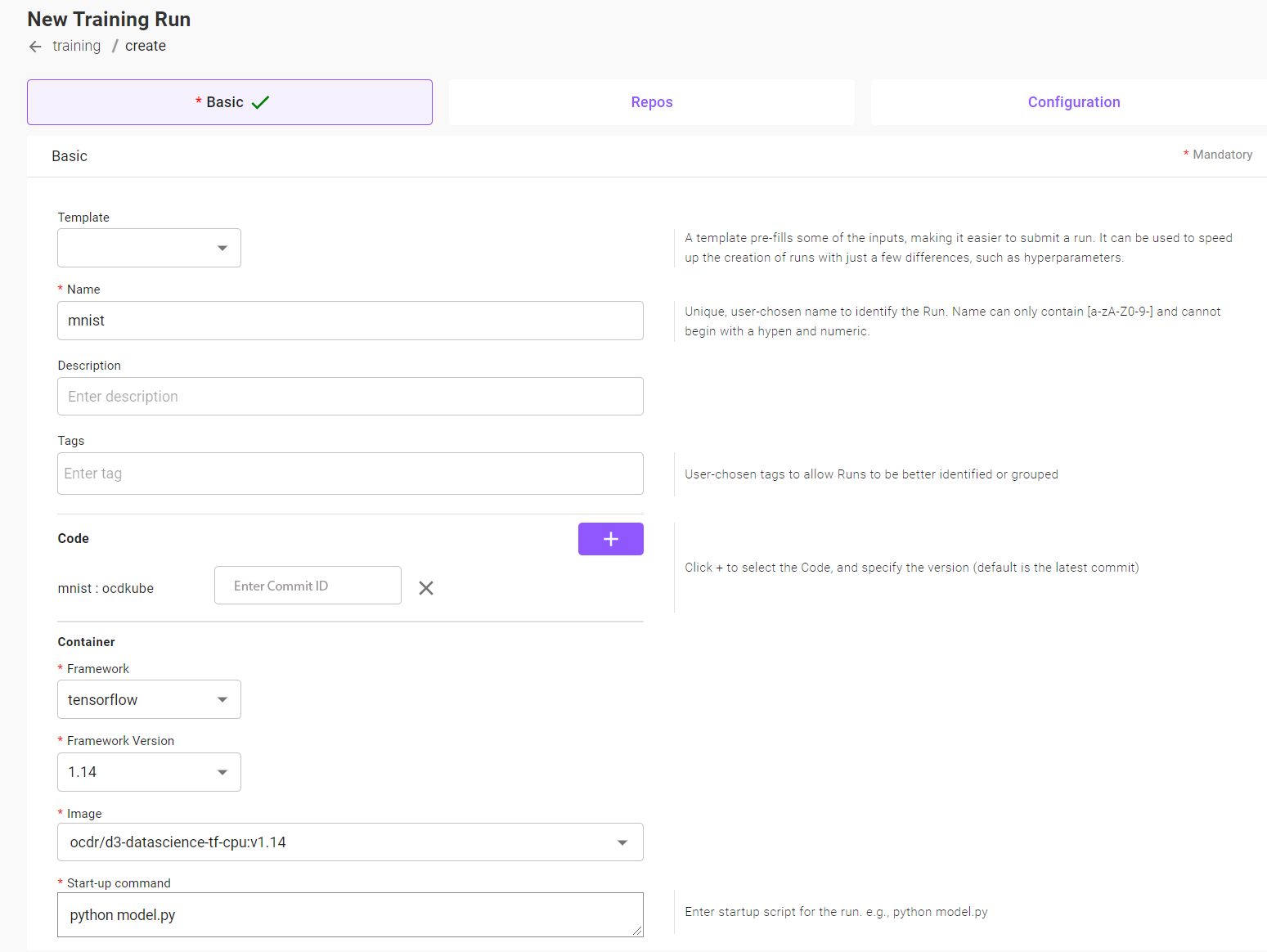

For the cases where a Run is created by the User, the “New Training Run” screen will appear. Once the fields have been filled in, select “Submit”.

Note

The first Run will take additional time to start due to the image being pulled prior to initiating the task. The message might be “Starting” or “Waiting for GPUs”. Each time a new version of the framework is run for the first time, the delay will occur. It will not happen after the first run.

File Paths for Datasets and Models¶

The Dataset & Model repos that are added as part of the submission are saved as described at File Paths

Basic Submission Screen¶

| Field | Value |
|---|---|
| Name | Unique user-chosen identification |
| Description | Free-form user-chosen text to provide details |
| Tags | Optional, user-chosen detailed field to allow grouping or later identification |
| Code | Program code repo |
| Framework | Framework type |
| Framework Version | Framework version |
| Image | Docker image to use - this can be left at the default, or a custom image can be selected |
| Start-up Command | Program and options that need to run in order to initiate training |

DKube has built-in support for TensorFlow, PyTorch, and Scikit Learn.

Code Repo¶

The code is uploaded into the local DKube storage and used for the Run.

The program code will be used based on the Commit ID field.

| Commit ID | Code version used |
|---|---|
| Blank | The latest version of the code will be used |
| Value | The version of the code with that value will be used |

Custom Containers¶

Custom containers are supported to extend the capabilities of DKube. In order to use a custom container within DKube, select “Custom” from the Framework dropdown menu. This will provide more options.

Enter the image location in the field labeled Docker Image URL in the format registry/<repo>/<image>:<tag>

If the image is in a private registry, enable the Private option, and fill in the username and password

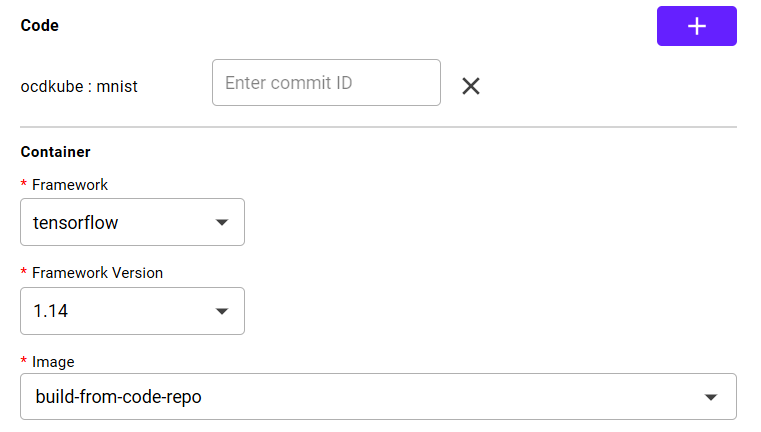

Build From Code Repo¶

By default, DKube will choose a standard image when creating a new training Run. If a different image is required, it can be selected from the dropdown menu in the Image field. In addition, the image can be created from the Code and then used for the Run.

In order to use this capability, the GitHub folder is required to have a .dkube-ci.yml file as described at CI/CD Image Creation

Selecting the “build-from-code-repo” Image option will cause the image to first be built and saved, then used for the Run execution.

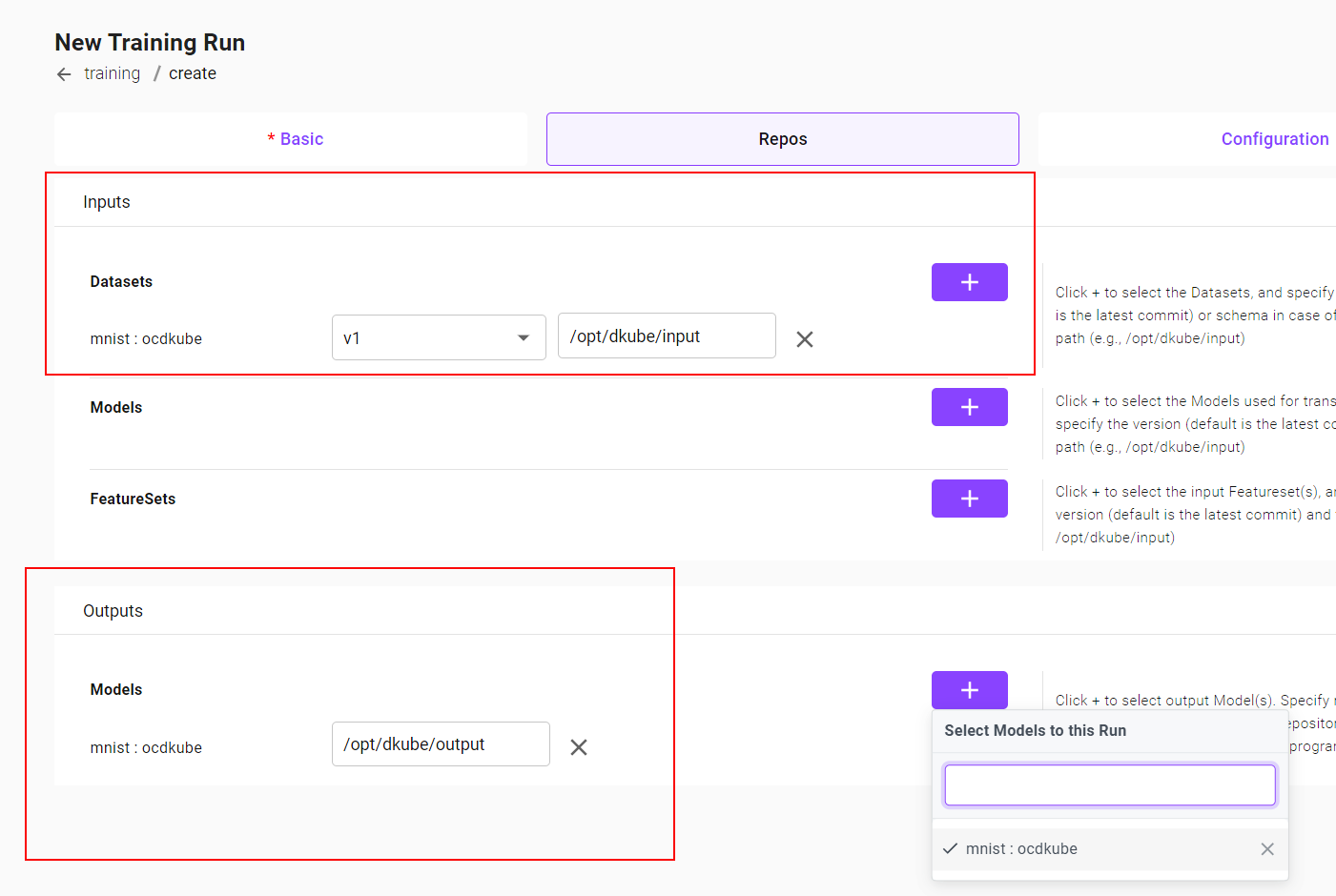

Repo Submission Screen¶

Output Model Repo¶

The Repos screen has an output Model section for the trained model in addition to the input Model section (for transfer learning).

The format for the output Model is similar to the input Model. Even though the field is for an output trained model, there still needs to be an entry in the Models repo so that the model can be properly tracked and versioned. The new trained model will become the next version of the model that is added to the submission.

In order to create a completely new model - with Ver 1 - a new DVS model should be created as explained in the section Models

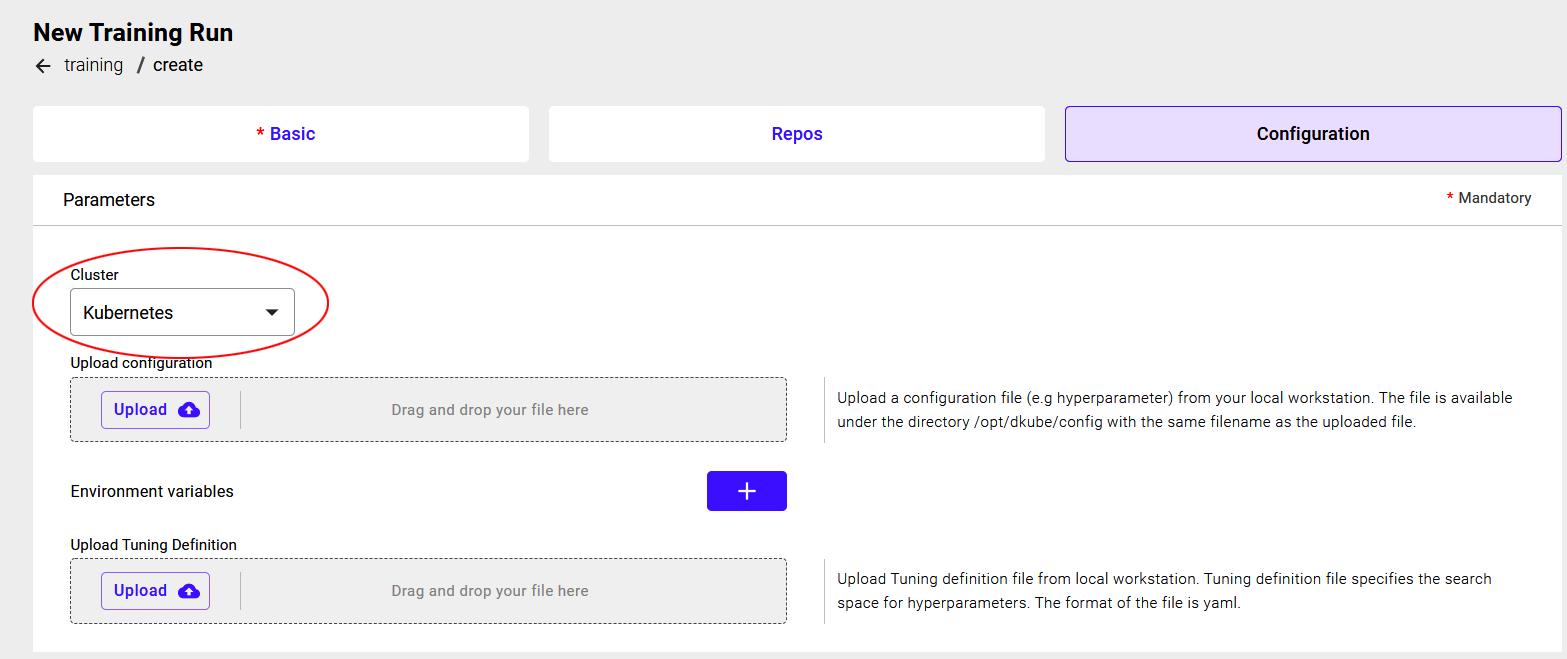

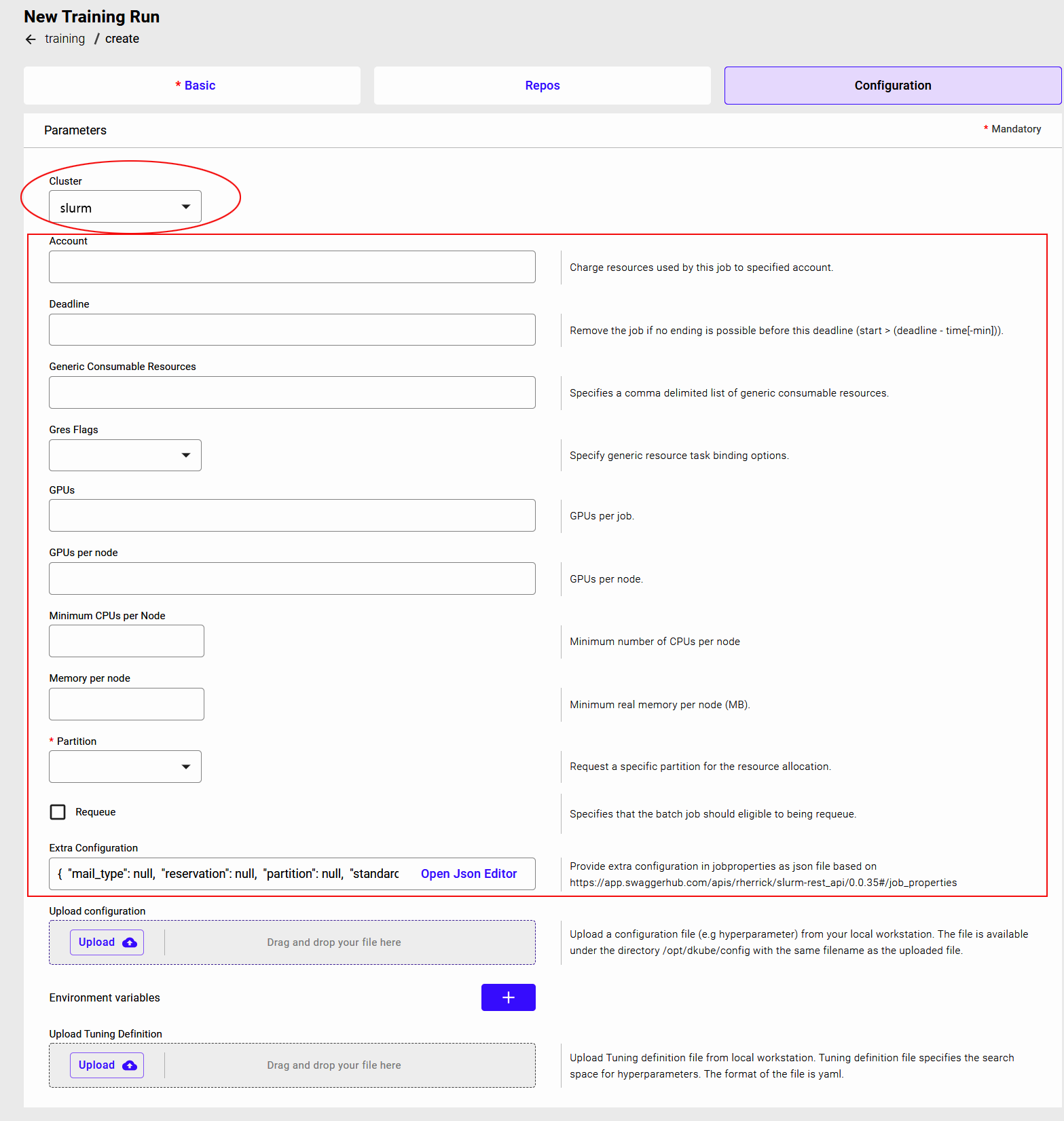

Configuration Submission Screen¶

Target Cluster¶

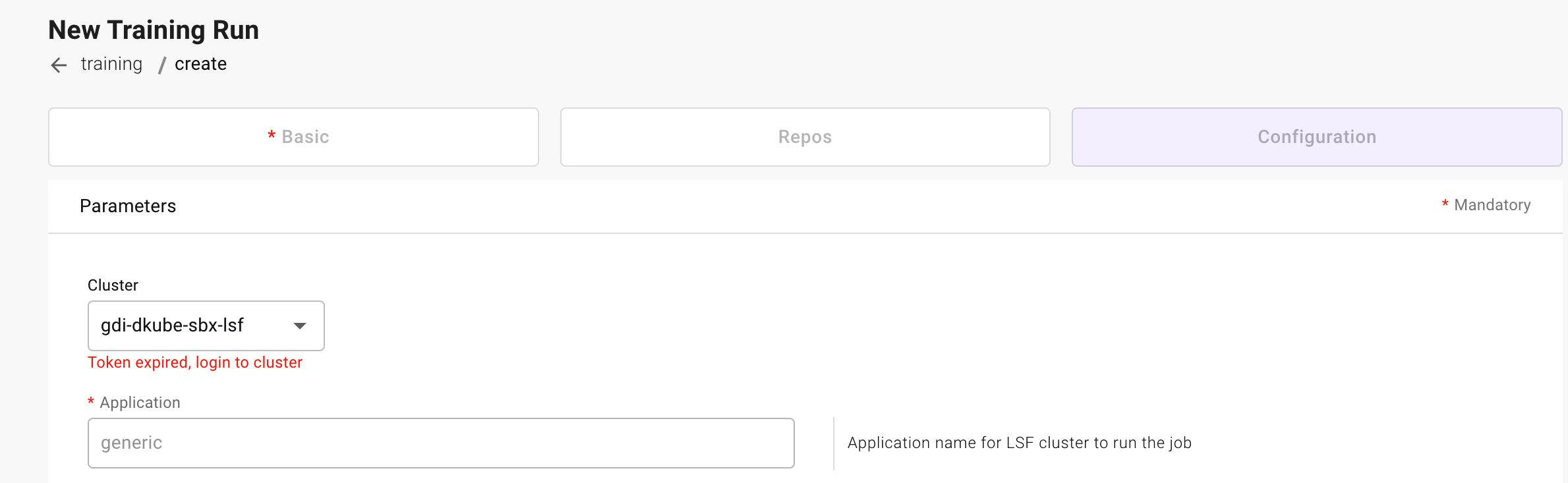

A Run can be executed on the primary Kubernetes cluster, or executed on a remote cluster. Available execution clusters will appear in a dropdown in the Cluster field. Based on the cluster type, the remaining fields will be different.

If the cluster choice is an external cluster, there are additional fields that are required prior to the common fields explained below. The field definitions are available at:

| Cluster Type | Field Definitions |
|---|---|
| Slurm | |
| LSF | |

If the cluster requires login, it will be indicated under the name of the cluster. If required, the login credentials are provided by following the steps at External Cluster Access

Configuration File¶

A configuration file can be uploaded and provided to the program. There is no DKube-enforced formatting for this file. It can contain any information that needs to be used during program execution, such as a set of hyperparameters or configuration details. The program needs to be aware of the formatting so that the file can be correctly unpacked during execution.

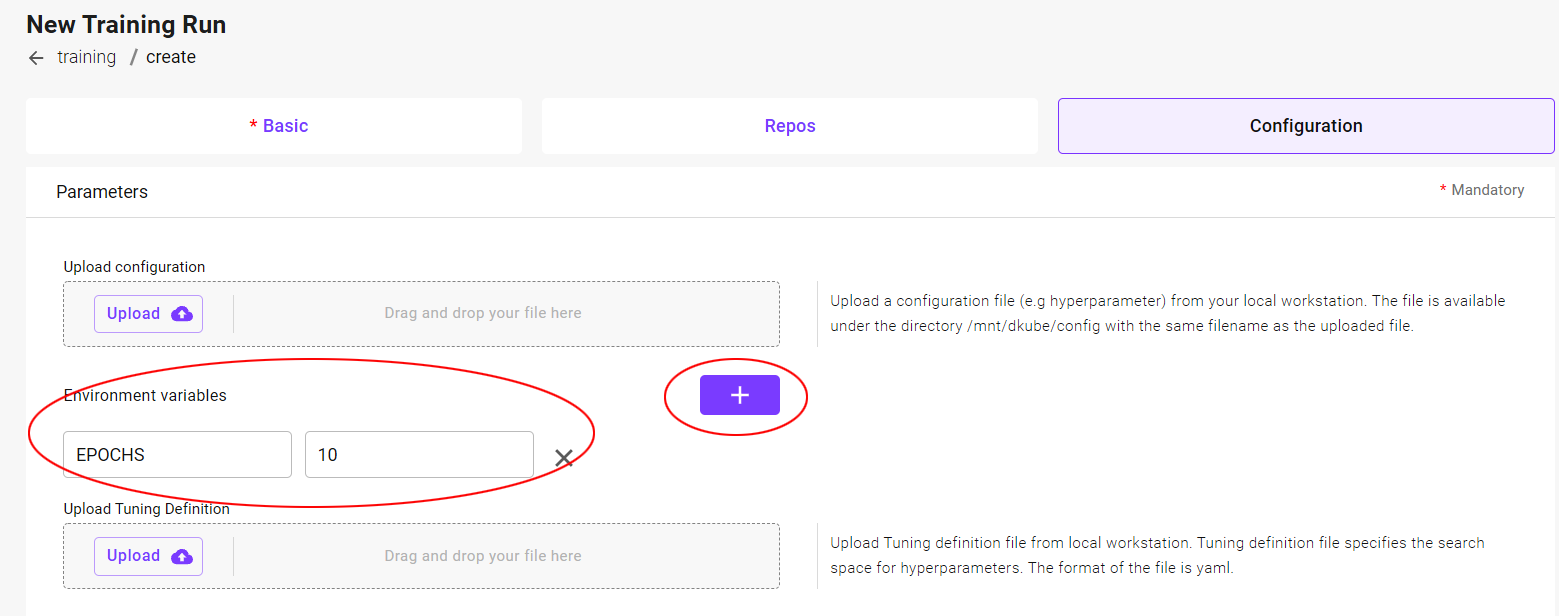

Hyperparameters¶

The configuration section allows the user to input the hyperparameters for the instance. The use of the hyperparameters is based on the program code. Hyperparameters can be added by selecting the highlighted “+”. This will allow an additional “Key” and “Value”. More parameters can be added by repeated use of this option.

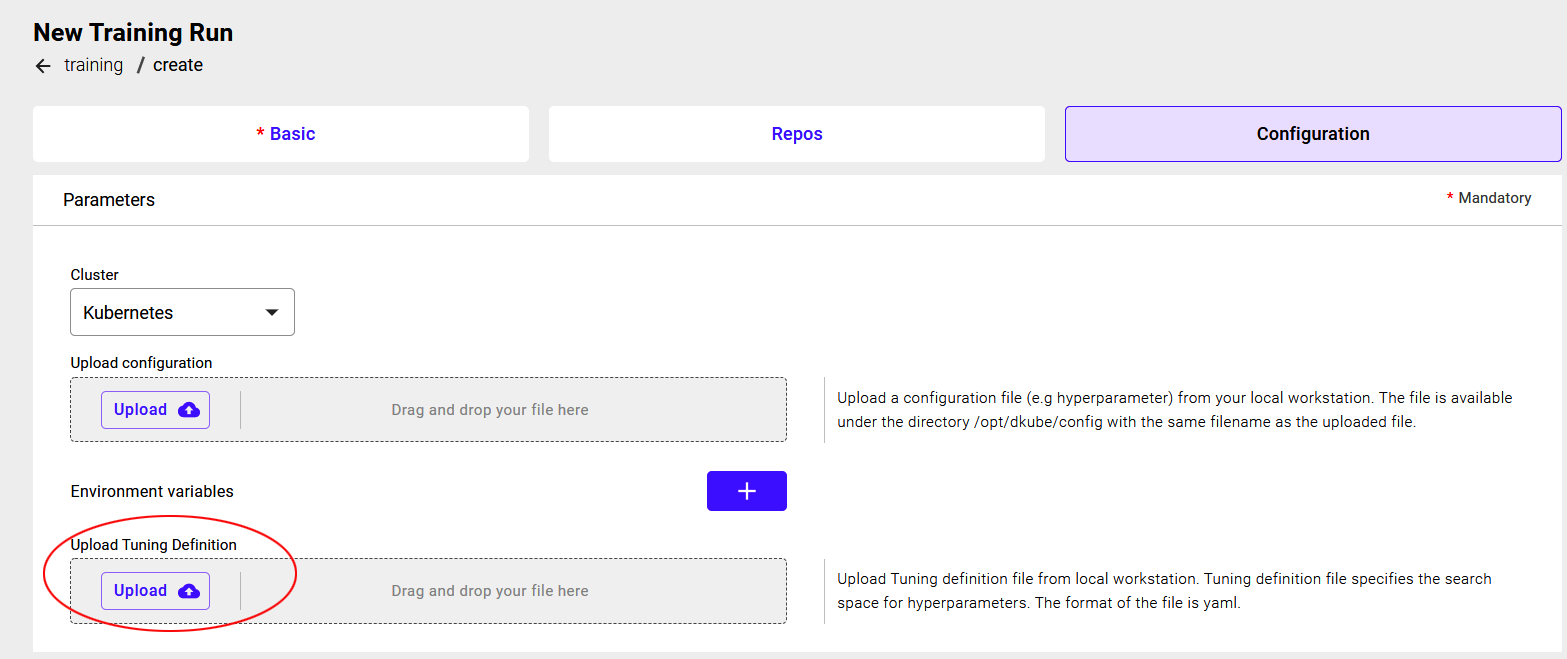

Hyperparameter Tuning¶

The Configuration screen has additional fields that allow more actions for the Run beyond what is possible with an IDE.

In addition to the ability to add or upload hyperparameters, the Training Run can also initiate a Hyperparameter Optimization run.

In order to specify that the Run should be managed as a Hyperparameter Optimization study, a YAML file must be uploaded that includes the configuration for the experiment.

The YAML files for the examples that are included with DKube are available at the following links.

| Example | Hyperparameter File |
|---|---|
| mnist | |
| catsdogs | |

The YAML file should be downloaded locally, and used when submitting the Run. The YAML file can be edited to have different configurations.

Leaving this field blank (no file uploaded) will indicate to DKube that this is a standard (non-hyperparameter optimization) Run.

The format of the configuration file is explained at Katib Introduction.

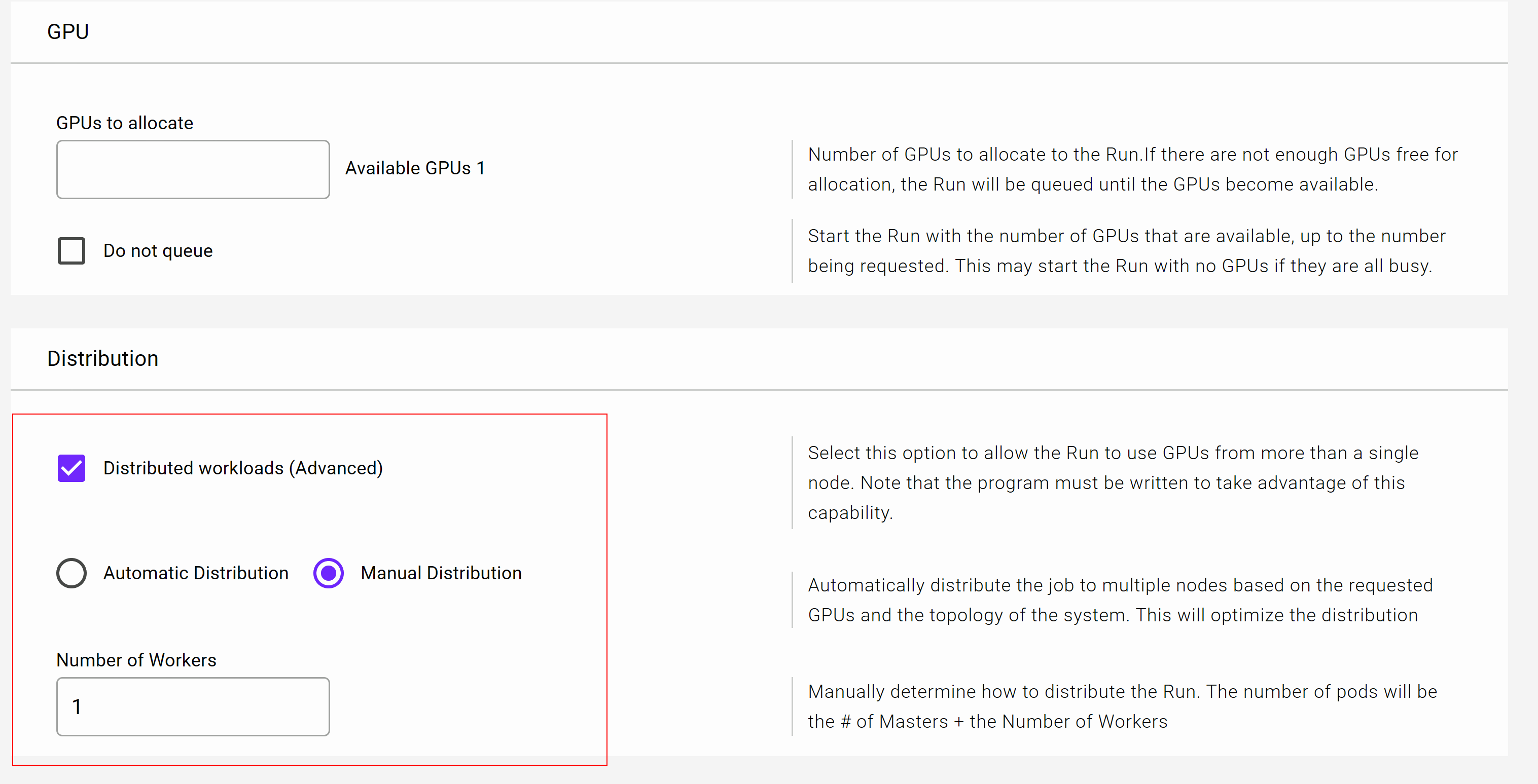

GPU Distribution¶

Runs can be submitted with GPUs distributed across the cluster. The Project code needs to be written to take advantage of this option. In order to enable this, the “Distributed workloads” option needs to be selected.

The distribution can be accomplished automatically or manually.

If the automatic distribution option is selected, DKube will determine the most effective way to use the GPUs across the cluster.

If the manual distribution option is selected, the user needs to tell DKube how the GPUs should be distributed. For this option, the user needs to understand the topology of the cluster, and know where the GPUs are located.

When distributing the workload manually across nodes in the cluster, the number of workers needs to be specified. DKube takes the number of GPUs specified in the GPU field, and requests that number of GPUs for each worker.

So, for example, if the number of GPUs is 4, and the number of workers is 1, then 8 GPUs will be requested, spread across 2 nodes.
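The arithmetic in the example above can be written out as a short Python sketch. It assumes that the GPU count is requested for the primary node as well as for each worker, which is inferred from the example (4 GPUs and 1 worker giving 8 GPUs on 2 nodes) rather than stated explicitly. The function name is hypothetical.

```python
def total_gpus_requested(gpus: int, workers: int) -> int:
    """Total GPUs for a manually distributed Run under the stated assumption."""
    # Assumption inferred from the example: one primary node plus the workers.
    nodes = workers + 1
    return gpus * nodes


# The example from the text: 4 GPUs and 1 worker.
print(total_gpus_requested(gpus=4, workers=1))
```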

Stop Run¶

Select the Run to be stopped with the left-hand checkbox

Click the “Stop” icon at the top right-hand side of the screen

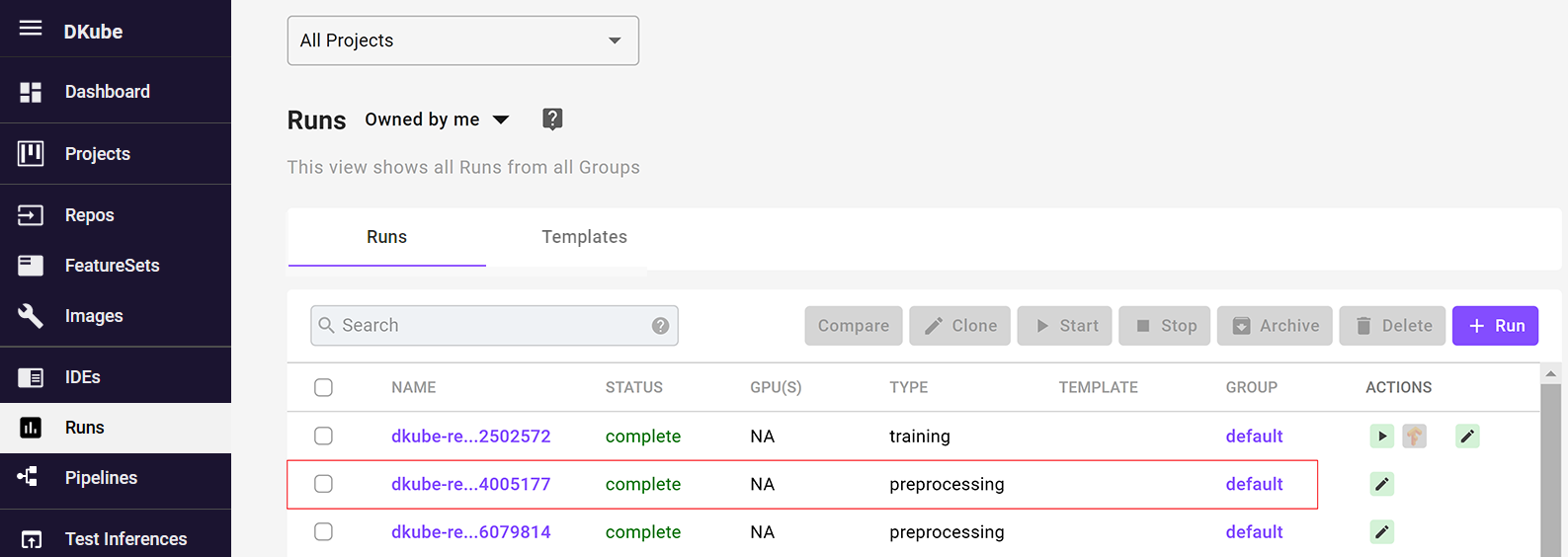

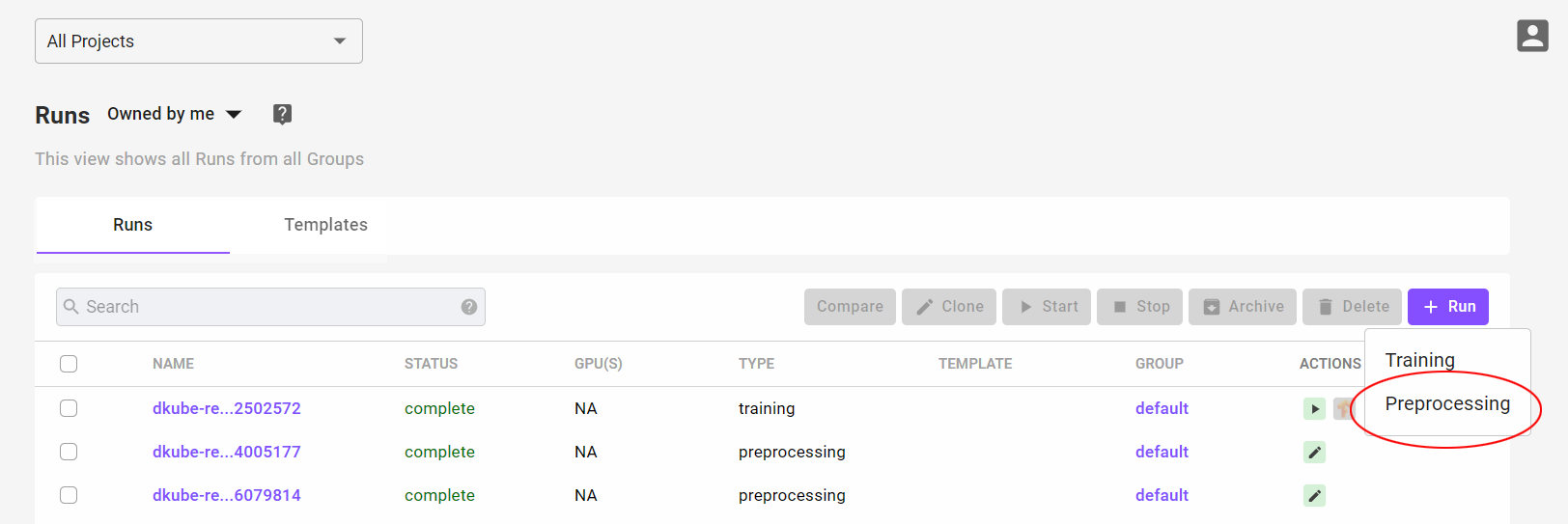

Preprocessing Runs¶

A Preprocessing Run outputs a Dataset entry when it is complete. This is typically done in order to modify a raw dataset such that it can be used for Training.

Create Preprocessing Run¶

A Preprocessing Run can be created in the following ways:

Create a new Preprocessing Run by selecting the “+ Run” button at the top right-hand side of the screen, and selecting “Preprocessing”.

A Run is automatically created as part of a Pipeline

Note

The Run will be created in the Project that is selected when the Run is created. If “All Projects” is selected, it will not be associated with any Project.

For the cases where a Preprocessing Run is created by the User, the “New Preprocessing Run” screen will appear. Once the fields have been filled in, select “Submit”

Note

The first Run will take additional time to start due to the image being pulled prior to initiating the task. Each time a new version of a framework is run for the first time, the delay will occur. It will not happen after the first run.

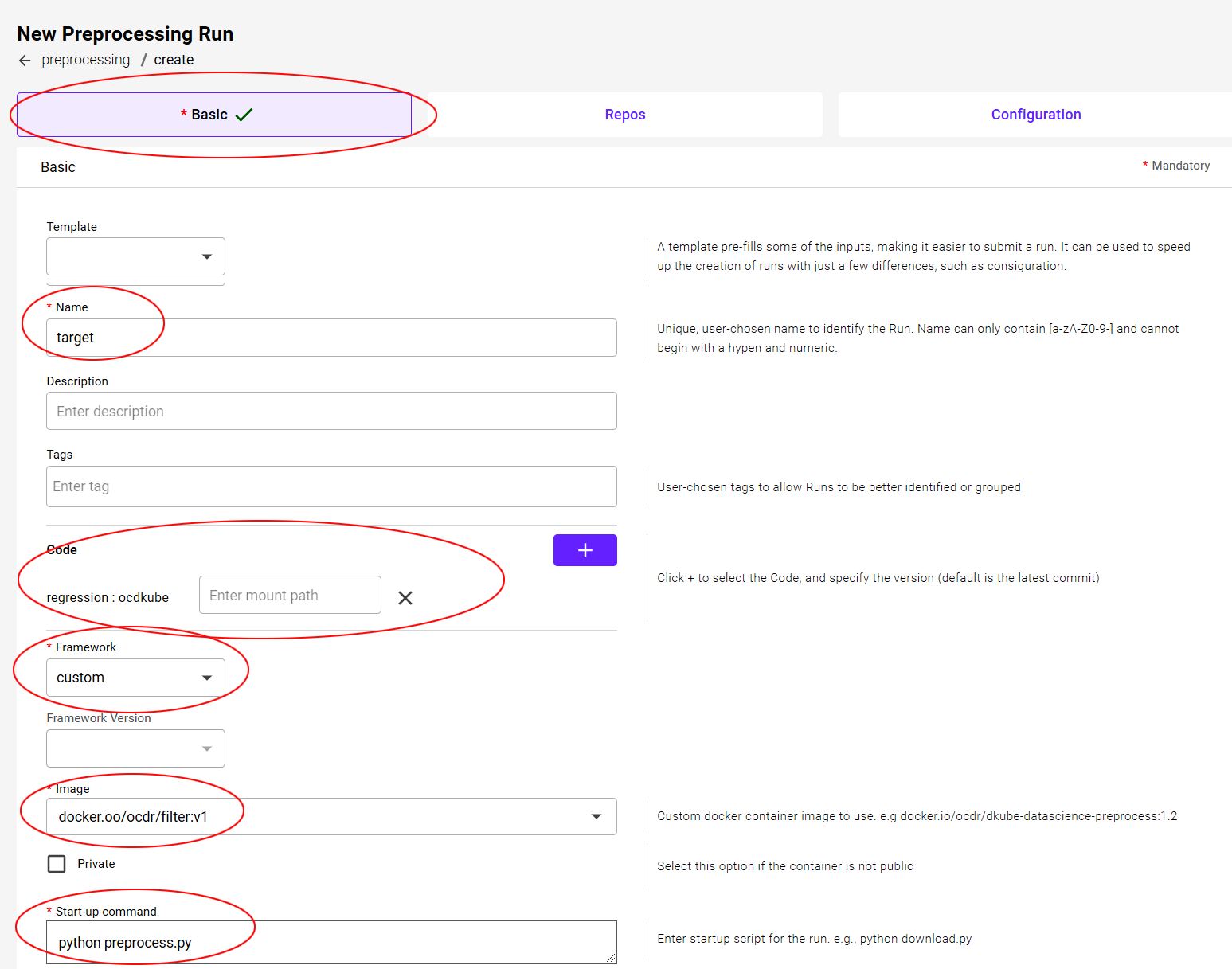

Basic Submission Screen¶

In addition to the standard fields, including the name of the run, tags, and start-up command, the Preprocessing Basic screen includes a docker image field that points to the image created by the user.

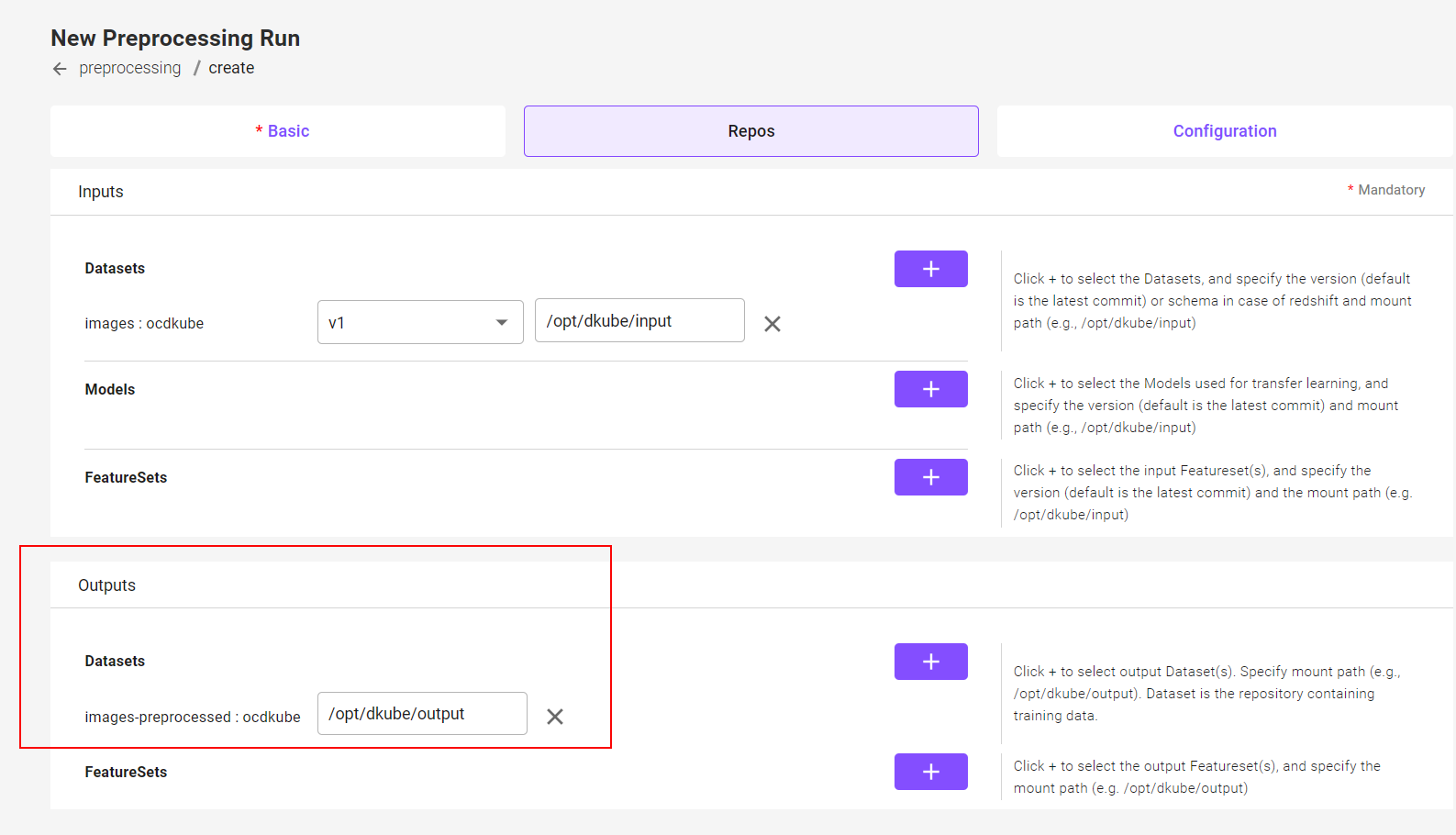

Repo Submission Screen¶

The Repos screen is filled in similarly to the Training Run, but instead of a Model output, there is a Dataset or FeatureSet output.

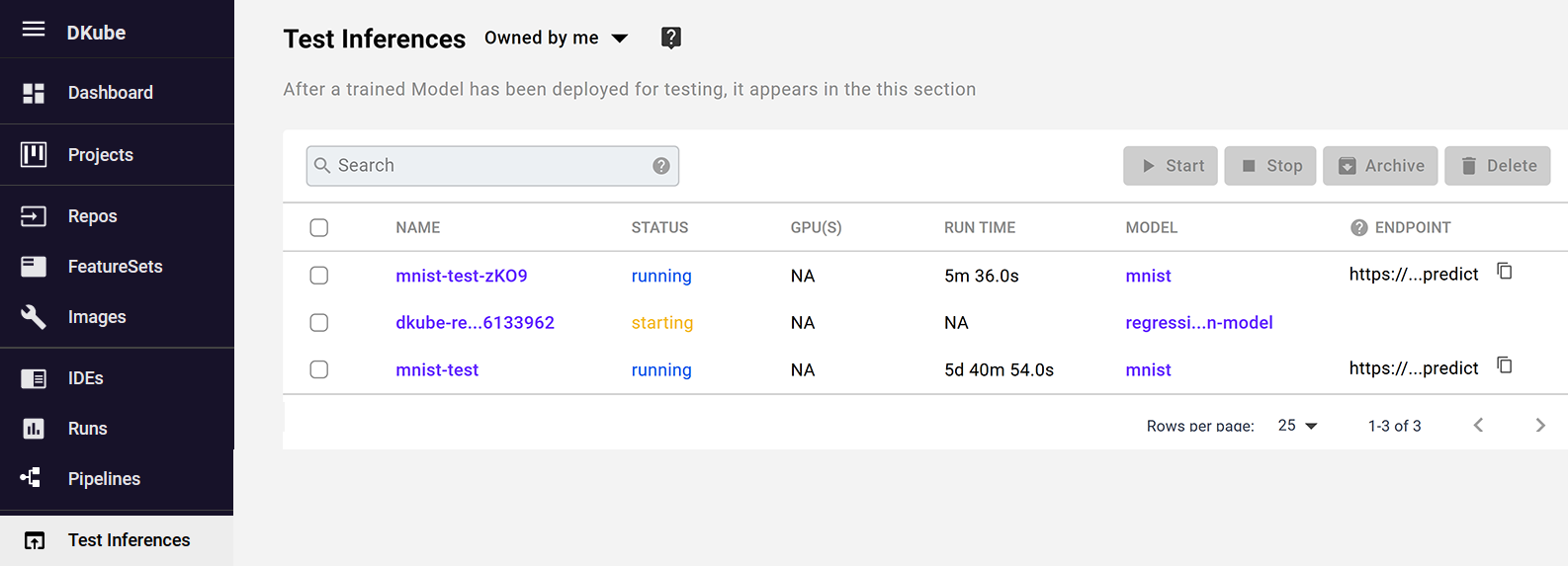

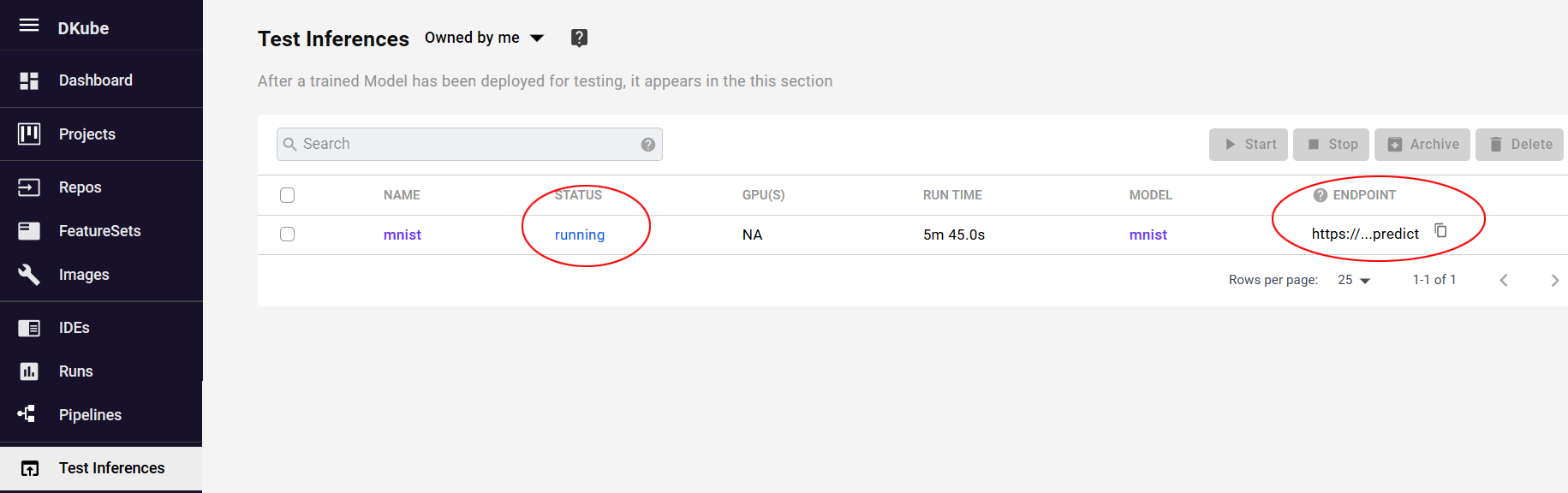

Test Inferences¶

DKube allows the user to test the quality of the trained model without deploying it for production serving. This is managed from the “Test Inferences” screen. A Model can be deployed for testing, which will run the model on a local endpoint and expose the inference for testing with an external application.

The status messages are described in section Status Field of IDEs & Runs

Testing the Inference¶

Creating the Test Inference¶

DKube includes a mechanism to deploy a serving instance locally and expose the APIs so that a Model can be tested before publishing it as a deployment candidate.

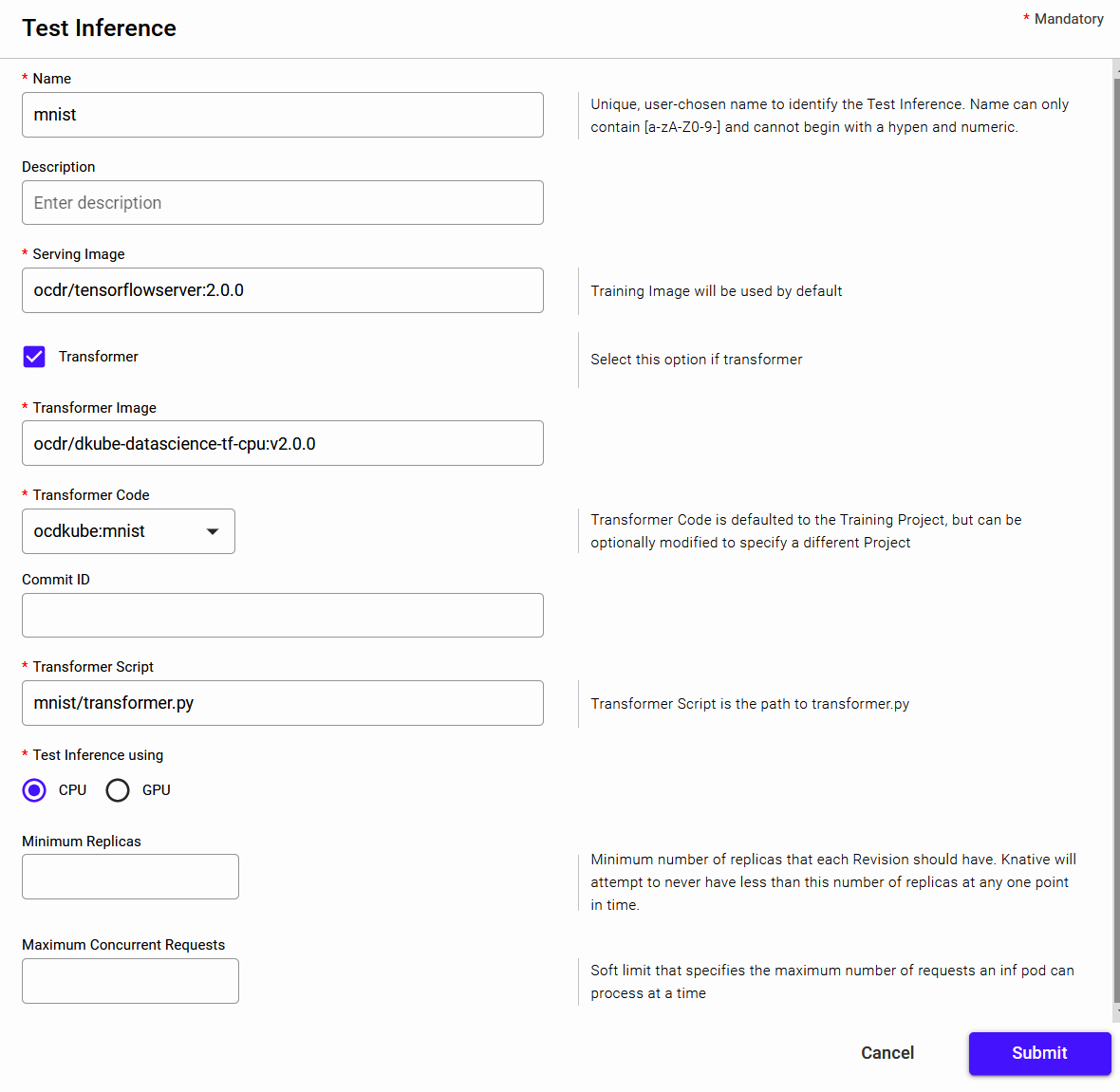

This will show a pop-up window, where you can enter the fields as explained here.

| Field | Value |
|---|---|
| Name | User-chosen name for the inference |
| Description | Optional user-chosen text to provide more details for the inference |
| Serving Image | Defaults to the training image, but a different image can be used if required |
| Transformer | Select if the inference requires preprocessing or postprocessing |
| Transformer Image | Image used for the transformer code |
| Transformer Code | Defaults to the training code repo, but a different repo can be used if required |
| Commit ID | Commit ID for the Transformer Code - if left blank it will choose the latest |
| Transformer Script | Program used for the Transformer - referenced from the top level of the GitHub Code repository |
| CPU/GPU | Type of inference |
| Minimum Replicas | Minimum number of inference pods that will run in the idle state with no inference requests, described at Configuring Scale Bounds |
| Maximum Concurrent Requests | Soft target for the number of concurrent requests that a single inference pod can serve for the Model, described at Configuring Concurrency |

The test inference is viewed from the “Test Inferences” menu. Once the status of the test inference shows “Running”, the “Endpoint” column provides the API that is serving the model.
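An external application can send test data to the URL shown in the “Endpoint” column. The Python sketch below only builds such a request and does not send it. The endpoint URL, the `{"instances": ...}` payload layout, and the bearer token header are assumptions for illustration; the actual request format depends on the serving image and the model.

```python
import json
import urllib.request


def build_inference_request(endpoint: str, instances: list,
                            token: str = "") -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for a test inference."""
    body = json.dumps({"instances": instances}).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if token:
        # Hypothetical authentication scheme; adjust to the cluster's setup.
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(endpoint, data=body, headers=headers, method="POST")


# Placeholder URL standing in for the value copied from the Endpoint column.
request = build_inference_request(
    "https://dkube.example.com/hypothetical/endpoint", [[0.1, 0.2, 0.3]]
)
print(request.get_method(), request.full_url)
# To send the request: urllib.request.urlopen(request)
```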

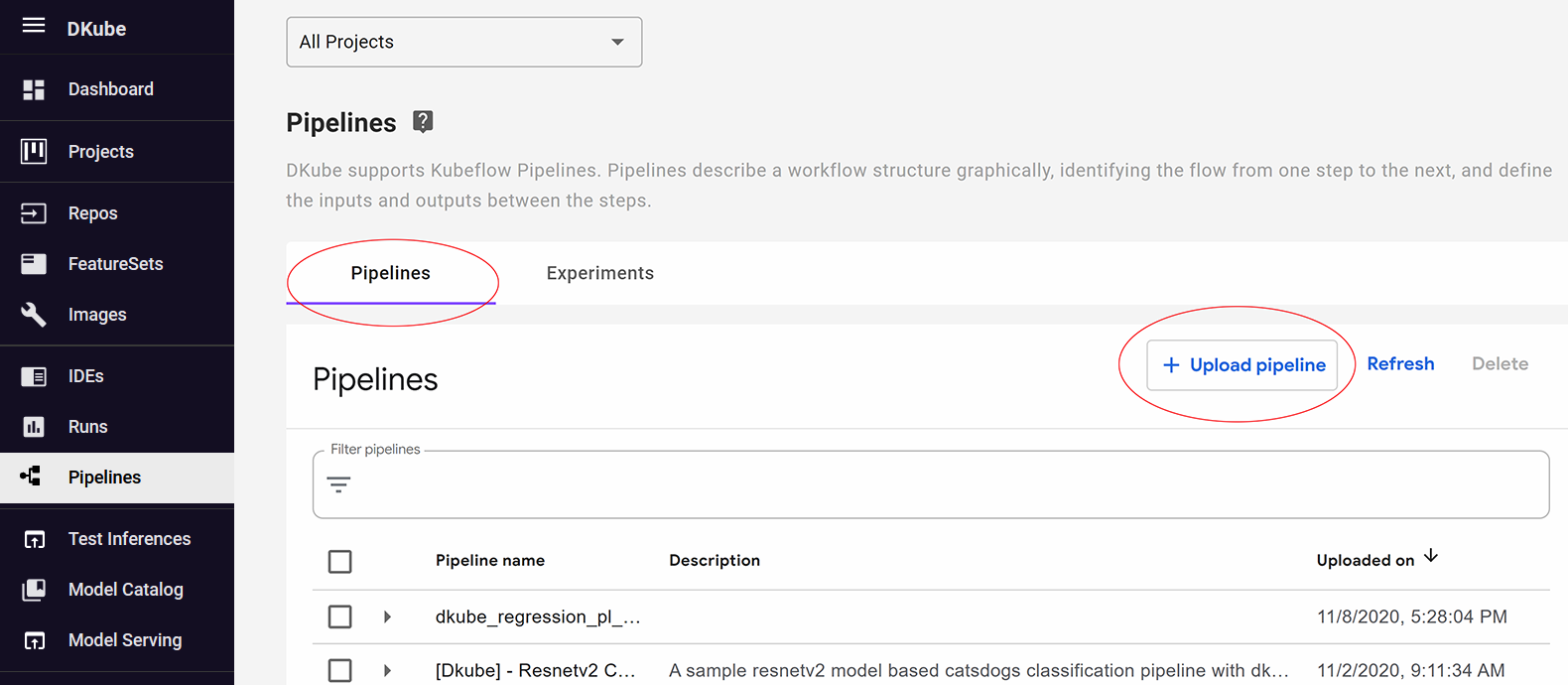

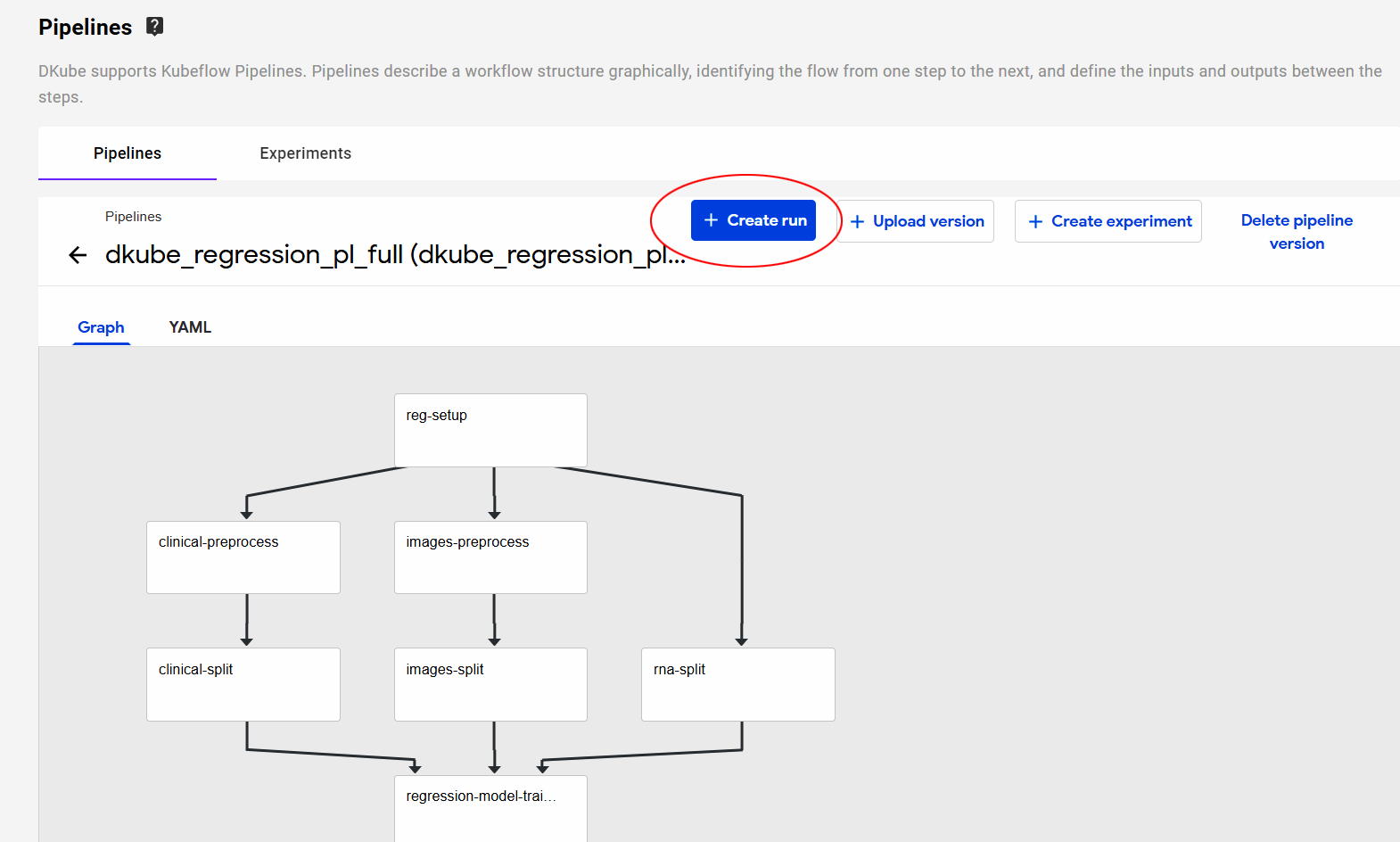

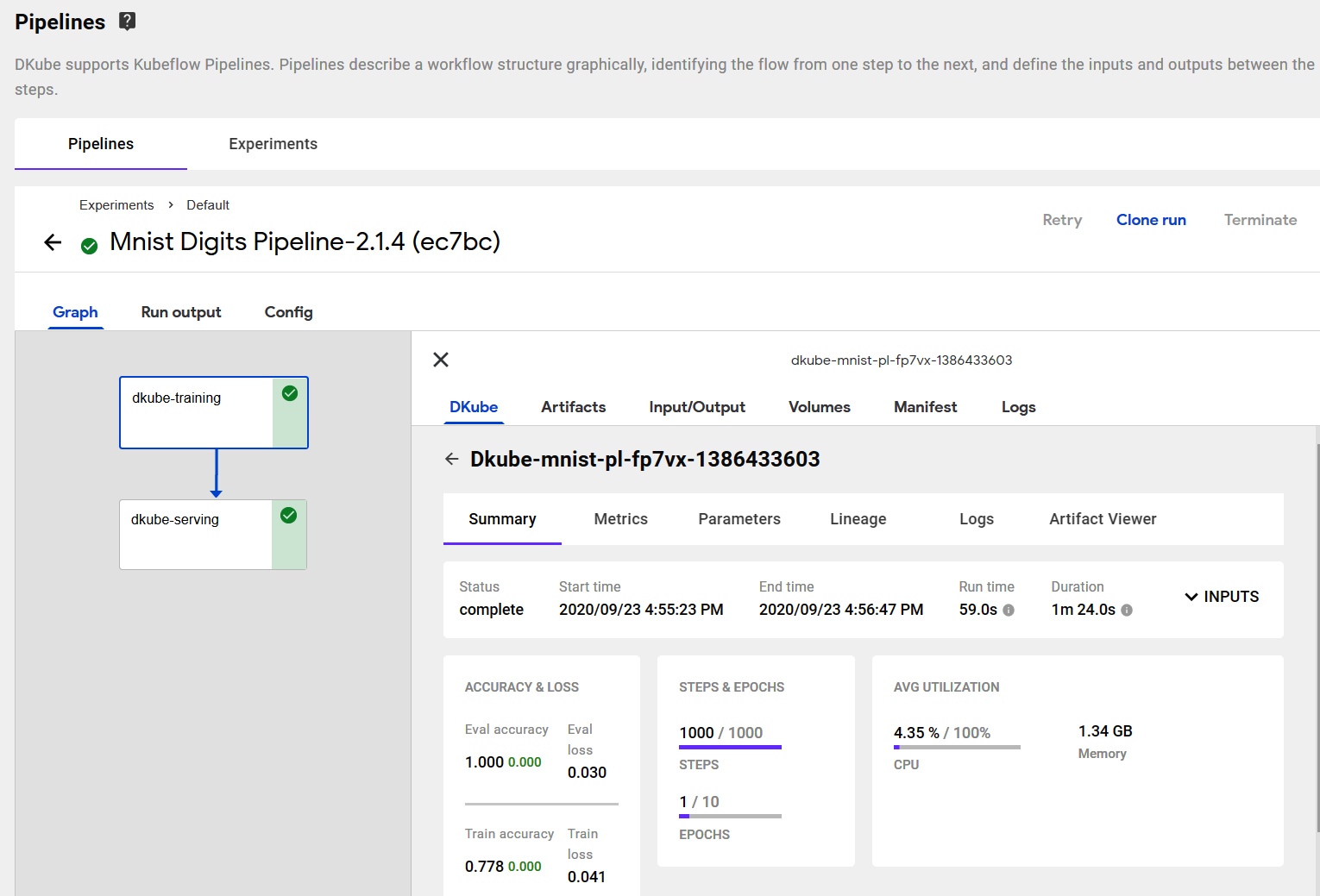

Kubeflow Pipelines¶

DKube supports Kubeflow Pipelines. Pipelines describe a workflow structure graphically, identifying the flow from one step to the next, and define the inputs and outputs between the steps.

An introduction to Kubeflow Pipelines can be found at Kubeflow Pipelines

One Convergence provides templates and examples for pipeline creation described at Kubeflow Pipelines Template

The steps of the pipeline use the underlying components of DKube in order to perform the required actions.

The following sections describe what is necessary to create and execute a Pipeline within DKube.

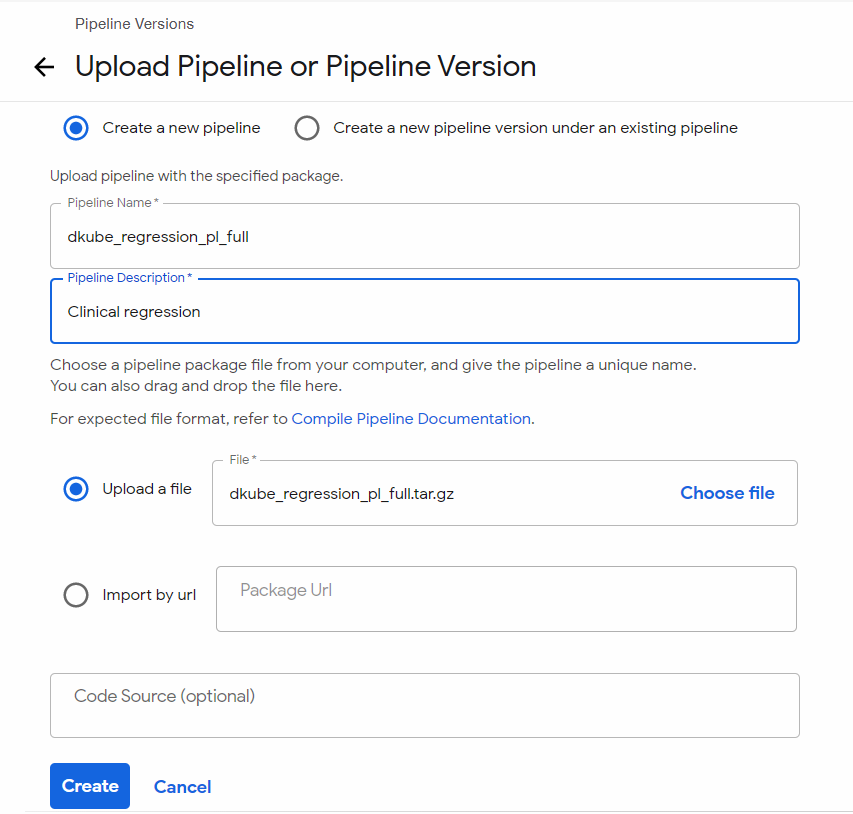

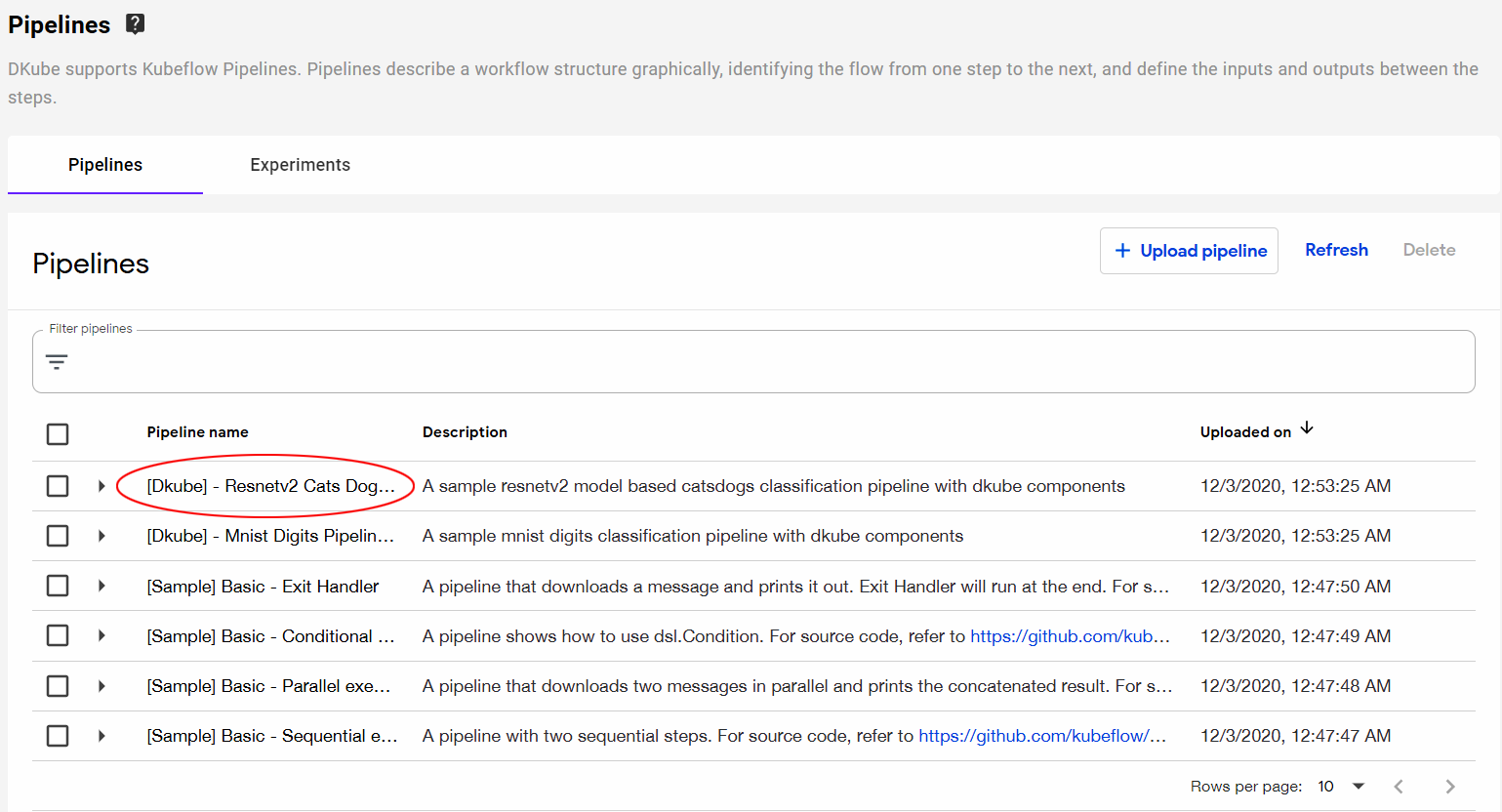

Upload a New Pipeline¶

The pipelines that have been uploaded or created within DKube are available from the “Pipelines” menu. There are a number of pipelines that come with a standard DKube installation. New pipelines can be uploaded to DKube by selecting the “+ Upload Pipeline” button and entering the access information.

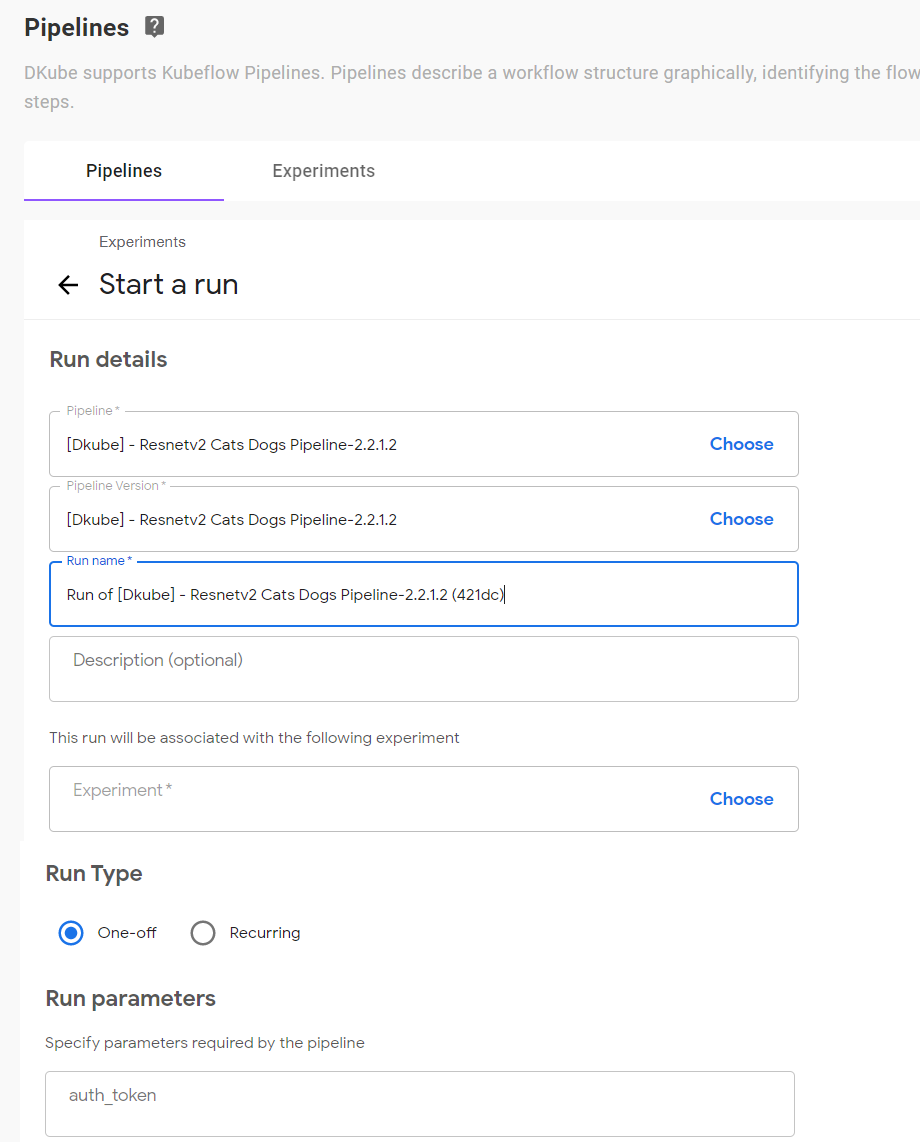

Create a Pipeline Run¶

A new Run can be created from a Pipeline by selecting the Pipeline name and choosing either a Run or an Experiment. The Experiment choice will put the Run into that Experiment.

The top input fields are common to any pipeline. The Run Parameters are specific to the Pipeline.

| Field | Description |
|---|---|
| Pipeline | Name of the Pipeline - defaults to Pipeline selected in previous step |
| Pipeline Version | Version of the Pipeline - defaults to Pipeline selected in previous step |
| Run name | User-selected name for use in tracking |
| Description | Optional, user-selected field for providing more details |
| Experiment | Experiment to use for this Run |
| Run Type | Choose One-off or Recurring |
| Run Parameters | Input fields specific to the Pipeline |

Important

If the Run Type is selected as Recurring, the Run name is limited to a maximum of 9 characters
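When Pipeline Runs are prepared by a script, the length limit above can be checked before submission. The Python sketch below is a hypothetical helper, not part of DKube; the Run Type strings mirror the choices in the table.

```python
MAX_RECURRING_NAME_LENGTH = 9  # limit stated for Recurring Runs


def validate_run_name(name: str, run_type: str) -> None:
    """Raise ValueError if a Recurring Run name exceeds the stated limit."""
    if run_type == "Recurring" and len(name) > MAX_RECURRING_NAME_LENGTH:
        raise ValueError(
            f"Recurring Run name {name!r} has {len(name)} characters; "
            f"the maximum is {MAX_RECURRING_NAME_LENGTH}"
        )


validate_run_name("nightly01", "Recurring")        # 9 characters, accepted
validate_run_name("a-much-longer-name", "One-off")  # no limit applied here
```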

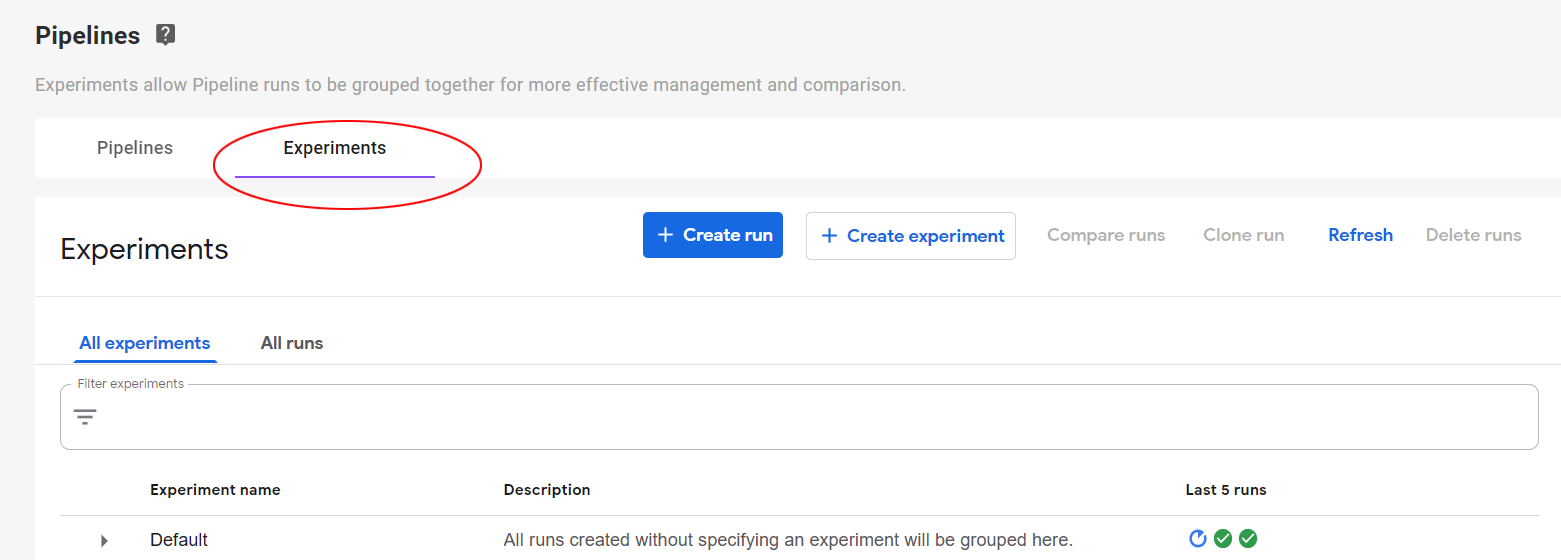

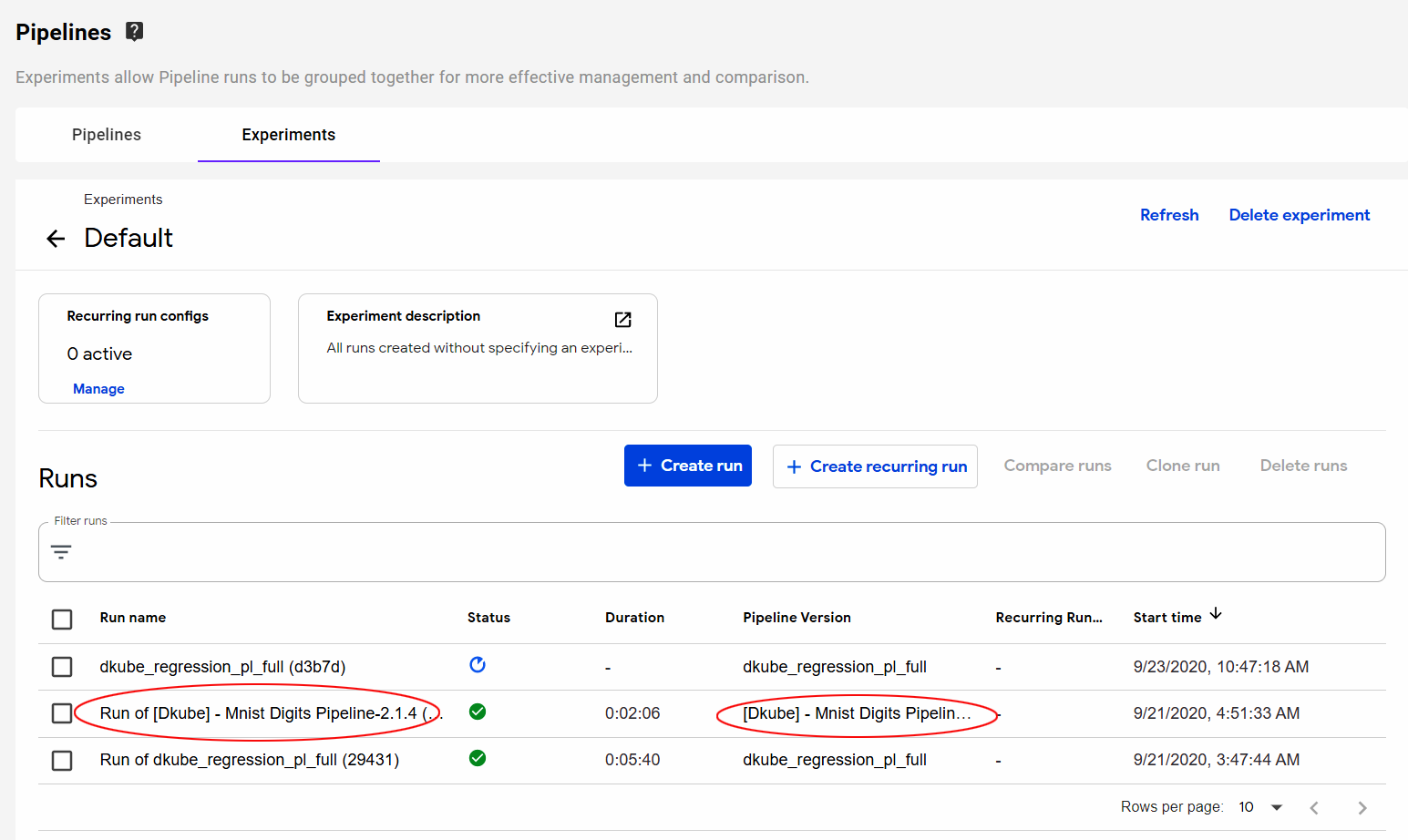

Manage Experiments and Runs¶

Experiments that have been created from pipelines are managed from the Experiments screen.

Within each experiment, the Runs that are part of that Pipeline are visible by selecting the Experiment name.

Selecting the name of the Run brings up the current state of the Pipeline. Selecting a Pipeline box within the “current state” screen brings up a window that provides more details on the configuration and status of the Run.

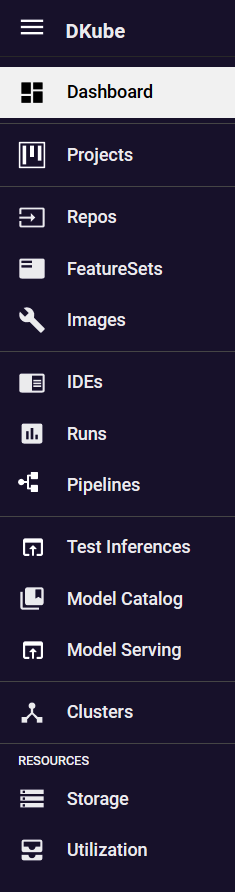

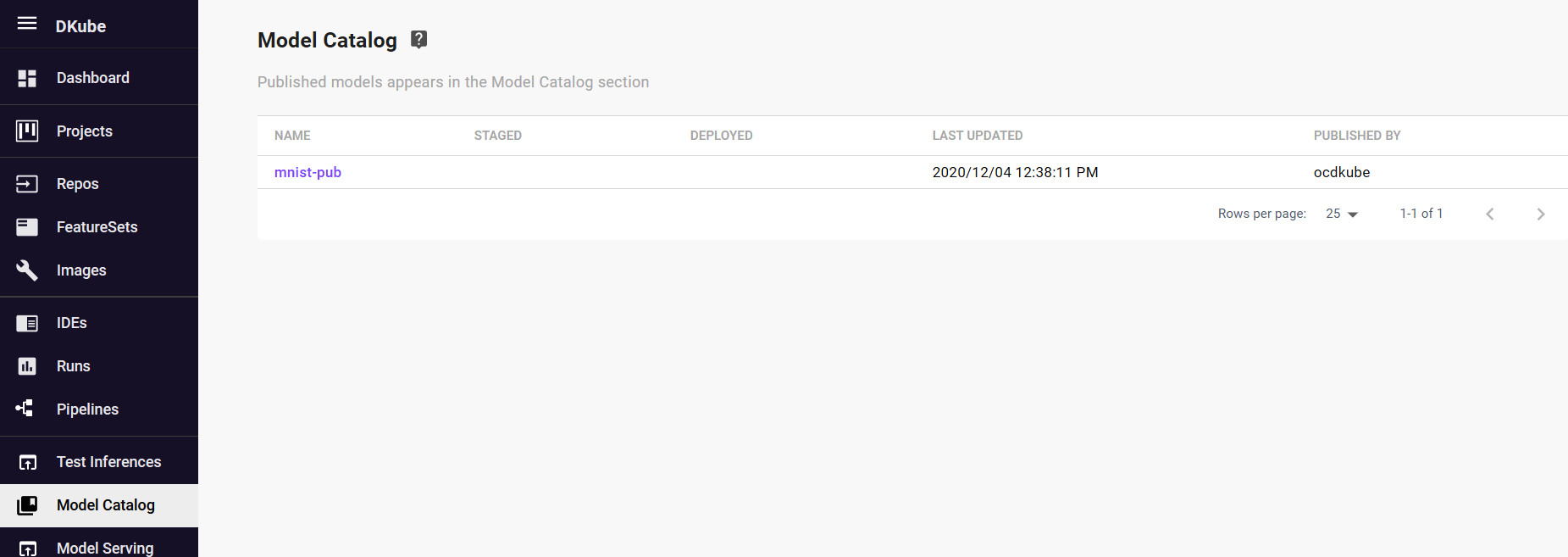

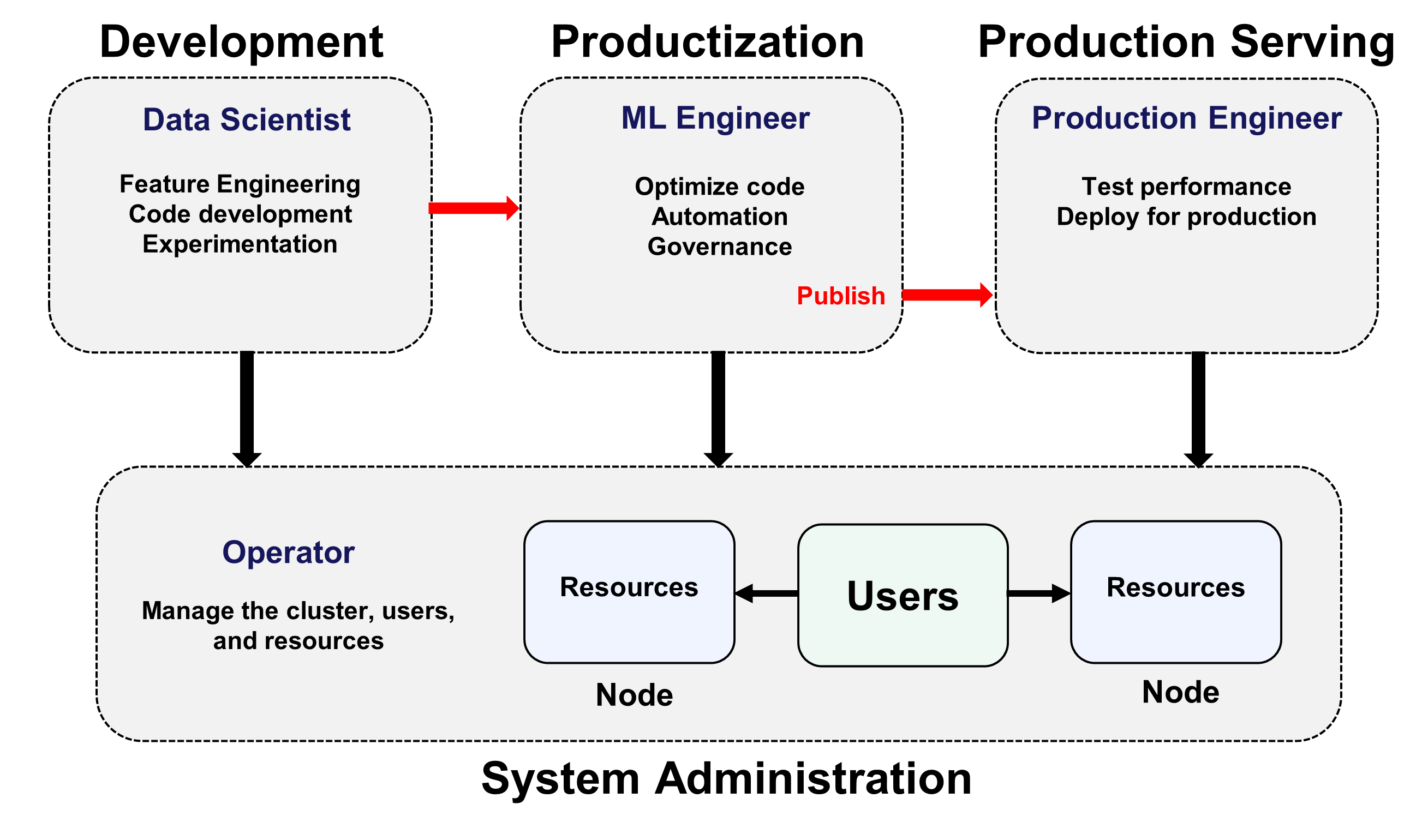

Production Engineer¶

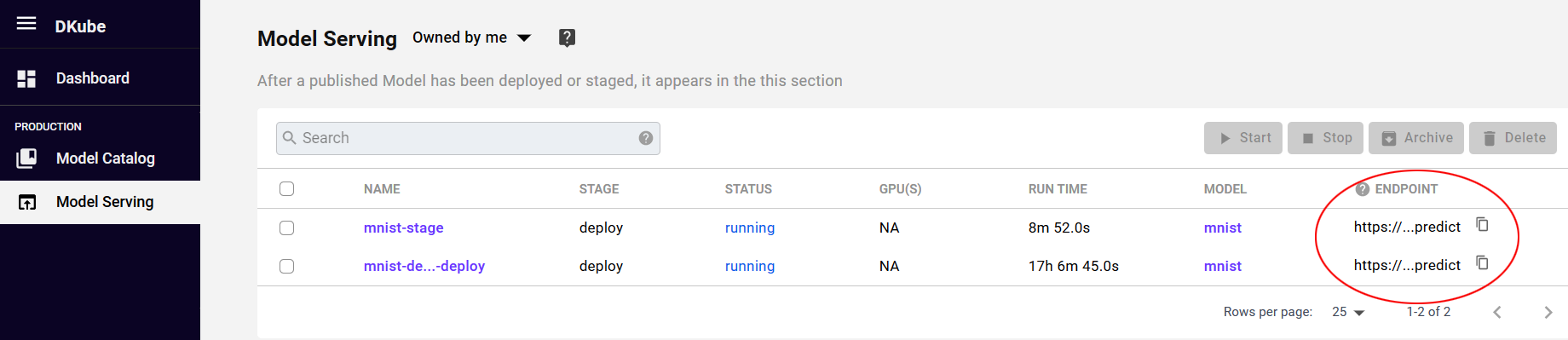

The Production Engineer (PE) takes the models that are optimized and published by the ML Engineer and deploys them for live inference after validating them and comparing them to the existing serving models.

| Menu Item | Function |
|---|---|
| Model Catalog | Optimized models, ready for deployment |
| Model Serving | Deployed models for inference |

The Model Catalog is the catalog described in the section Publish Model

Deployment Workflow¶

After a Model has been published by the ML Engineer, the PE can deploy the model through the icon on the far right side of the screen under “Actions”.

Deploying the model will create a serving endpoint. This endpoint can be used to:

Test the model using inference data to ensure that it meets the goals

Compare the results to the existing live inference model

Provide the endpoint for live serving if it meets the goals

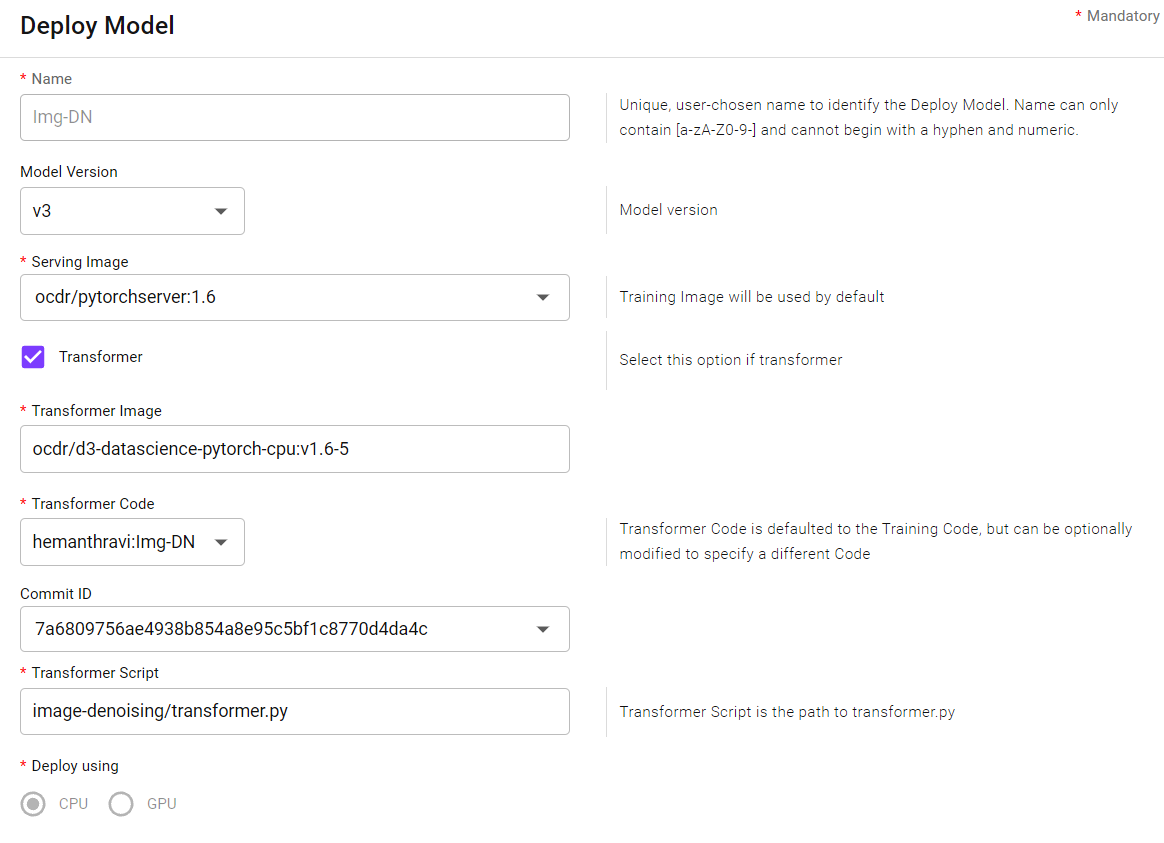

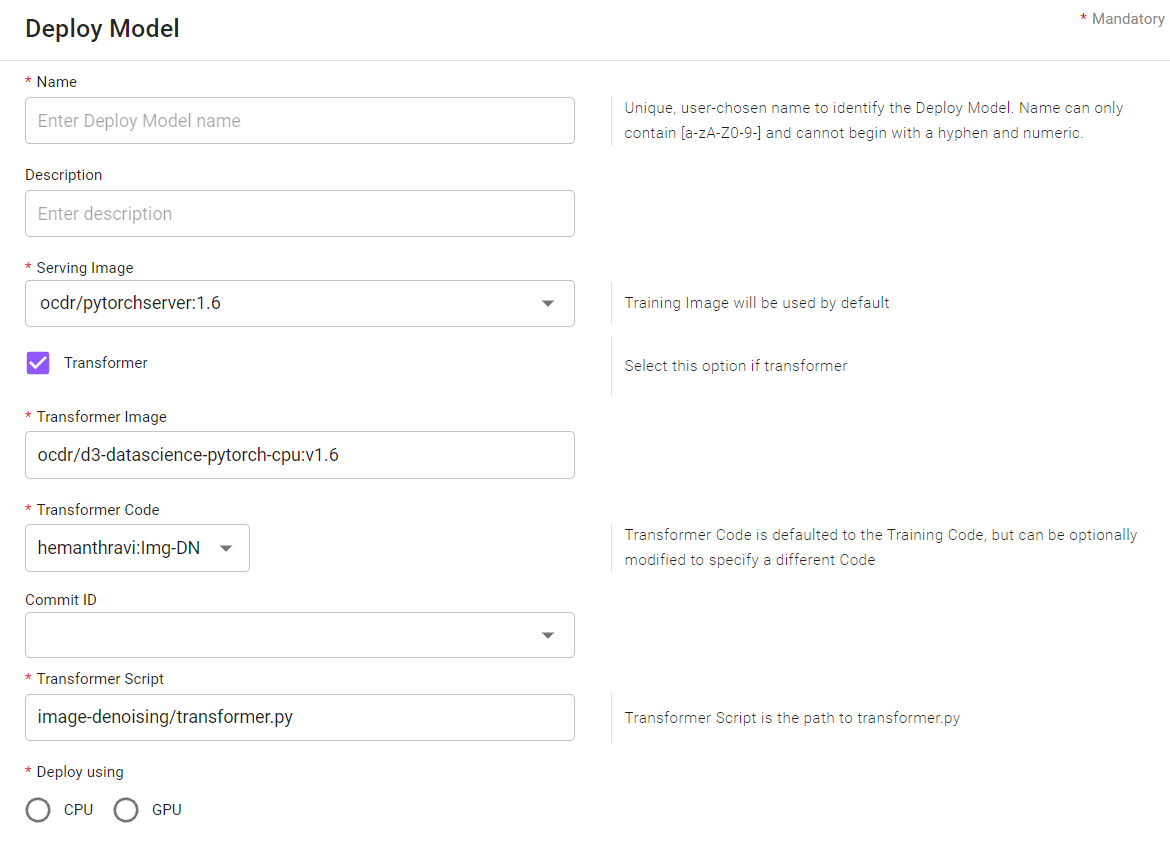

Selecting “Deploy Model” from the Action buttons on the right will cause a popup to appear where the deployment options can be provided.

| Field | Value |
|---|---|
| Name | User-chosen name for the Deployment |
| Description | Optional user-chosen text to provide more details for the Deployment |
| Serving Image | Defaults to the training image, but a different image can be used if required |
| Transformer | Select if the deployment requires preprocessing or postprocessing |
| Transformer Image | Image used for the transformer code |
| Transformer Code | Defaults to the training code repo, but a different repo can be used if required |
| Commit ID | Commit ID for the Transformer Code - if left blank it will choose the latest |
| Transformer Script | Program used for the Transformer - referenced from the top level of the GitHub Code repository |
| CPU/GPU | Type of inference |
| Minimum Replicas | Minimum number of inference pods that will run in the idle state with no inference requests, described at Configuring Scale Bounds |
| Maximum Concurrent Requests | Soft target for the number of concurrent requests that a single inference pod can serve for the Model, described at Configuring Concurrency |

A deployed Model will appear in the Model Serving menu.
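To give a feel for how the Minimum Replicas and Maximum Concurrent Requests fields interact, the Python sketch below models concurrency-based scaling in a deliberately simplified way. It is not DKube's or the underlying autoscaler's actual algorithm, which also smooths load over time; the function and parameter names are hypothetical.

```python
import math


def desired_pods(concurrent_requests: int, target_per_pod: int,
                 min_replicas: int) -> int:
    """Estimate pod count from in-flight requests and a per-pod soft target."""
    if target_per_pod <= 0:
        raise ValueError("target_per_pod must be positive")
    needed = math.ceil(concurrent_requests / target_per_pod)
    # Never drop below the configured minimum, even when idle.
    return max(min_replicas, needed)


print(desired_pods(0, target_per_pod=10, min_replicas=1))
print(desired_pods(25, target_per_pod=10, min_replicas=1))
```

In this model, a minimum of 1 keeps a pod ready while idle, and a lower concurrency target leads to more pods for the same load.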

Change Model Deployment¶

After a Model has been deployed, it is associated with an endpoint URL. The Model associated with that endpoint can be changed from the Model Serving screen. Select the Edit Action button to the right of the Model name. This opens a popup that allows the Model version and other associated information to be changed for that endpoint.