Using DKube¶

This section provides an overview of DKube, and allows you to get started immediately.

DKube Roles & Workflow¶

DKube is partitioned into 2 major screen views: Operator & Data Science. The workflow for each type is described in this section.

| Role | Screen View | Function |
|---|---|---|
| Operator (OP) | Operator | Manage the cluster, users, and resources |
| Data Scientist (DS) & ML Engineer (ML) | Data Science | Create and optimize models based on specific goals and metrics |
| Production Engineer (PE) | Data Science | Deploy models for live inference serving |

Each role has access to different screens, menus, and capabilities, based on the expected workflow described at MLOps Concepts.

The following are the rules for access and capability based on the role:

| Role | Capability |
|---|---|
| Operator (OP) | Full access to every screen, menu, and capability |
| Data Scientist (DS) | Can develop models, but cannot publish them or view the Model Catalog |
| ML Engineer (ML) | Same capabilities as DS, but can publish models for possible deployment |
| Production Engineer (PE) | Cannot develop or modify models, but can test and deploy them |

The Operator can modify roles after on-boarding, as explained at Add (On-Board) User.

Note

A user can have multiple roles. In this case, the user's access to screens and capabilities is the union of the access granted by each assigned role.
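The way multiple roles combine can be sketched as a set union. This is only an illustration: the capability names below are hypothetical labels derived from the role table above, not DKube identifiers, and the sketch does not reflect how DKube stores or enforces permissions.

```python
# Hypothetical capability labels, loosely derived from the role table.
# They are illustrative only and are not DKube identifiers.
ROLE_CAPABILITIES = {
    "DS": {"develop_models"},
    "ML": {"develop_models", "publish_models"},
    "PE": {"test_models", "deploy_models"},
}
# The Operator has full access, modeled here as every capability plus
# cluster management.
ROLE_CAPABILITIES["OP"] = {"manage_cluster"}.union(*ROLE_CAPABILITIES.values())


def effective_capabilities(roles):
    """Return the union of the capabilities granted by each assigned role."""
    capabilities = set()
    for role in roles:
        capabilities |= ROLE_CAPABILITIES[role]
    return capabilities


# A user who is both a Data Scientist and a Production Engineer can
# develop models as well as test and deploy them.
combined = effective_capabilities(["DS", "PE"])
```

A user holding every non-Operator role still lacks `manage_cluster` in this sketch, which mirrors the table: only the Operator manages the cluster.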

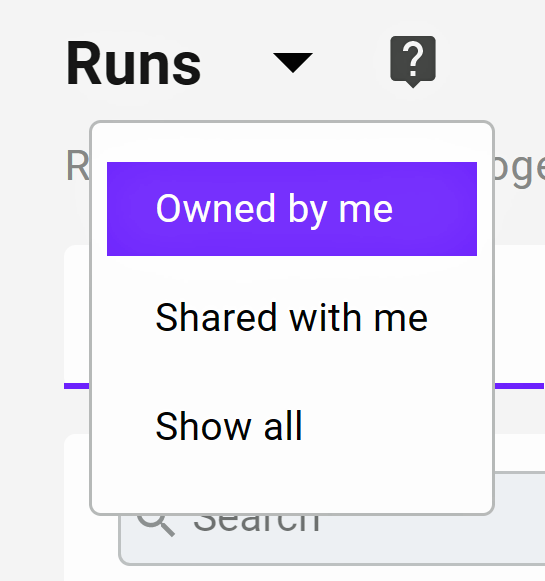

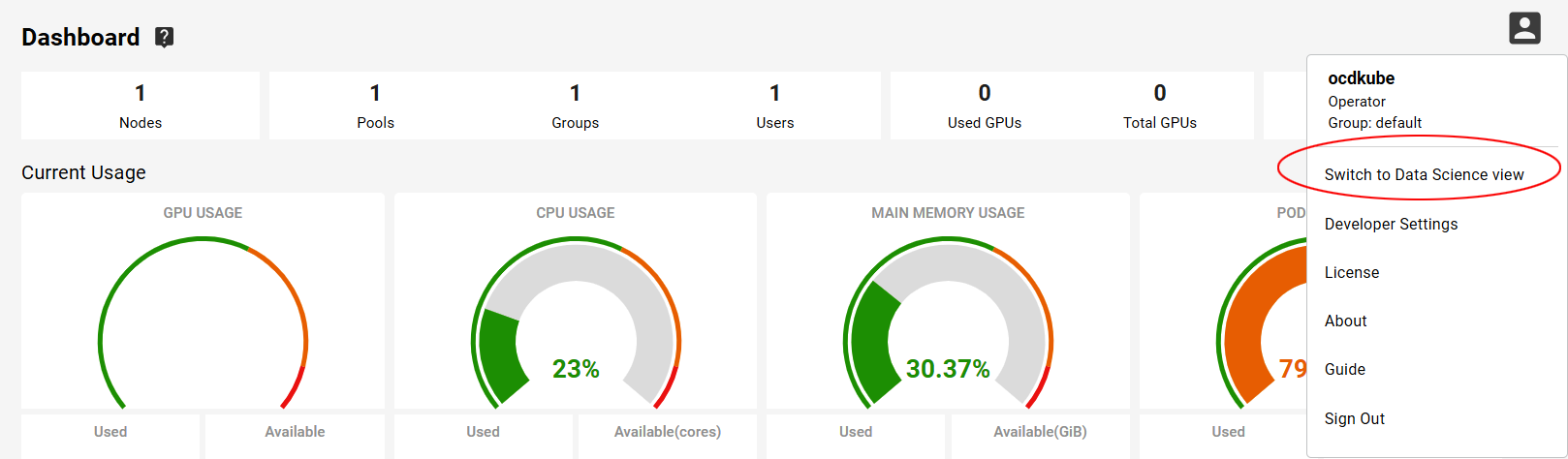

The screen view (Operator or Data Science) is selected at the top right-hand side of the screen. Once selected, the screens toggle between the views. The details of the screens are provided in the following sections.

First Time Users¶

If you want to jump directly to a guided example, go to the Data Science Tutorial. It steps you through the Data Science workflow using a simple example.

If you want to start with your own program and dataset, follow these steps:

- Create a Notebook (see Create IDE)

Otherwise, the following sections provide the concepts for the roles.

Operator Concepts¶

The Operator manages the cluster, users, and resources. By default, DKube can be used without any setup by the Operator. The Operator User is on-boarded and authenticated during the installation process.

| Concept | Definition |
|---|---|
| User | Operator or Data Science Engineer |
| Group | Aggregation of Users that share data |
| GPU | GPU devices connected to the Node, on the same server, or on another server in the cluster |
| Node | Execution entity on a physical host or a VM |
| Pool | Aggregation of GPUs from anywhere in the cluster |

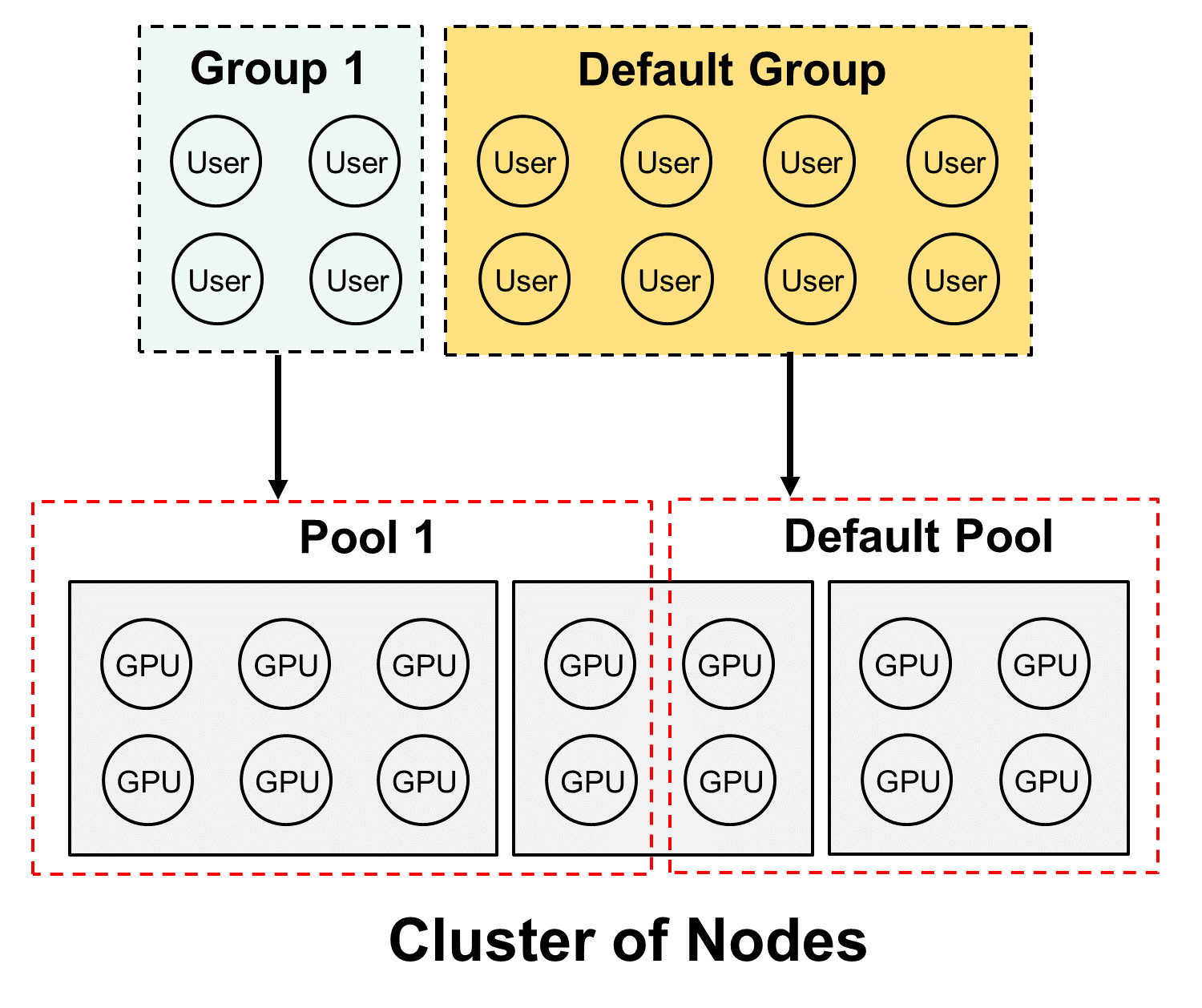

Operation of Groups¶

Groups of Users work together and share all of their inputs and outputs to enable collaboration. They can view each other’s code, datasets, models, runs, etc. In most cases one User in a Group can clone the work of another User and build on it. Only the Owner of an entity (the User who created it) can manage, delete, or archive it.

Operation of Pools¶

Pools are collections of GPUs assigned to Groups. The GPUs in the Pool are shared by the Users in the Group.

- A Pool can only contain one type of GPU; this includes any resources for that GPU, such as memory
- The Users in a Group share the GPUs in the Pool
- As GPUs are used by Runs or other entities, they reduce the number of GPUs available to other Users in the Group. Once the Run is complete (or stopped), the GPUs are made available for other Runs.

Clustered Pools¶

Pools behave differently depending upon whether the GPUs are spread across the cluster, or on a single node. If all of the GPUs in a Pool are on a single node, no special treatment is required to operate as described above.

If the GPUs in a Pool are distributed across more than a single node, the Advanced option must be selected when submitting a Run. This process is described in the section Create Training Run.

Default Pool and Group¶

DKube includes a Group and Pool with special properties, called the Default Group and Default Pool. They are both available when DKube is installed, and cannot be deleted. The Default Group and Pool allow Users to start their work as Data Scientists without needing to do a lot of setup.

The Default Pool contains all of the GPUs that have not been allocated to another Pool by the Operator. As the GPUs are discovered and automatically on-boarded, they are placed in the Default Pool.

- As additional Pools are created, and GPUs are allocated to the new Pools, the number of GPUs in the Default Pool is reduced
- As GPUs are removed from the other (non-Default) Pools, those GPUs are allocated back into the Default Pool
- The total number of GPUs in all of the Pools will always equal the total number of GPUs across the cluster, since the Default Pool will always contain any GPU not allocated to any other Pool
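The accounting described above can be sketched as follows. This is a simplified model, not DKube code: the `Cluster` class and its method names are hypothetical, GPUs are treated as interchangeable counts, and the one-GPU-type-per-Pool rule is ignored.

```python
class Cluster:
    """Toy model of GPU accounting between the Default Pool and other Pools."""

    def __init__(self, total_gpus):
        self.total_gpus = total_gpus
        self.pools = {}  # non-Default Pools: name -> number of GPUs

    @property
    def default_pool(self):
        # The Default Pool holds every GPU not allocated to another Pool.
        return self.total_gpus - sum(self.pools.values())

    def allocate(self, pool, count):
        """Move GPUs from the Default Pool into a named Pool."""
        if count > self.default_pool:
            raise ValueError("not enough GPUs in the Default Pool")
        self.pools[pool] = self.pools.get(pool, 0) + count

    def release(self, pool, count):
        """Return GPUs from a named Pool to the Default Pool."""
        if count > self.pools.get(pool, 0):
            raise ValueError("Pool does not hold that many GPUs")
        self.pools[pool] -= count


cluster = Cluster(total_gpus=8)
cluster.allocate("vision", 3)   # Default Pool shrinks to 5
cluster.release("vision", 1)    # Default Pool grows back to 6
```

Because the Default Pool is computed as the remainder, the sum over all Pools always equals the cluster total, which is the invariant stated in the last bullet.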

The Default Group automatically gets the allocation of the Default Pool, and it contains all of the on-boarded Users who are allocated to the Default Group.

- As new Users are on-boarded, they are assigned to the Default Group unless a different assignment is made during the on-boarding process
- Users can be moved from the Default Group to another Group using the same steps as from any other Group

Initial Operator Workflow¶

At installation time, the Default Pool and Default Group are created, and the Operator is added to the Default Group.

- The Default Pool contains all of the resources
- The Operator has been added to the Default Group
- The Data Scientist can start without needing to do any resource configuration

If Pools and Groups are required in addition to the Default, the following steps can be followed:

- Create additional Pools (see ref:opd-create-pool)
  - Assign Devices to the Pools
- Create additional Groups (see Create Group)
  - Assign a Pool to each new Group
- Add (on-board) Users (see Add (On-Board) User)
  - Assign Users to one of the new Groups
  - New Users can still be assigned to the Default Group if desired

If the Operator is the only User, or if all of the Users - including other Data Scientists - are in the same Group, nothing else needs to be done from the Operator workflow to get started.

The Operator should select the Data Science dashboard

The following section describes how to get started as a Data Scientist

Data Science Concepts¶

If you are responsible for development or production of models, you will only have access to the Data Science roles, menus, and screens.

A tutorial that takes you through your first usage is available at Data Science Tutorial

DKube Concepts¶

Term |

Definition |

|---|---|

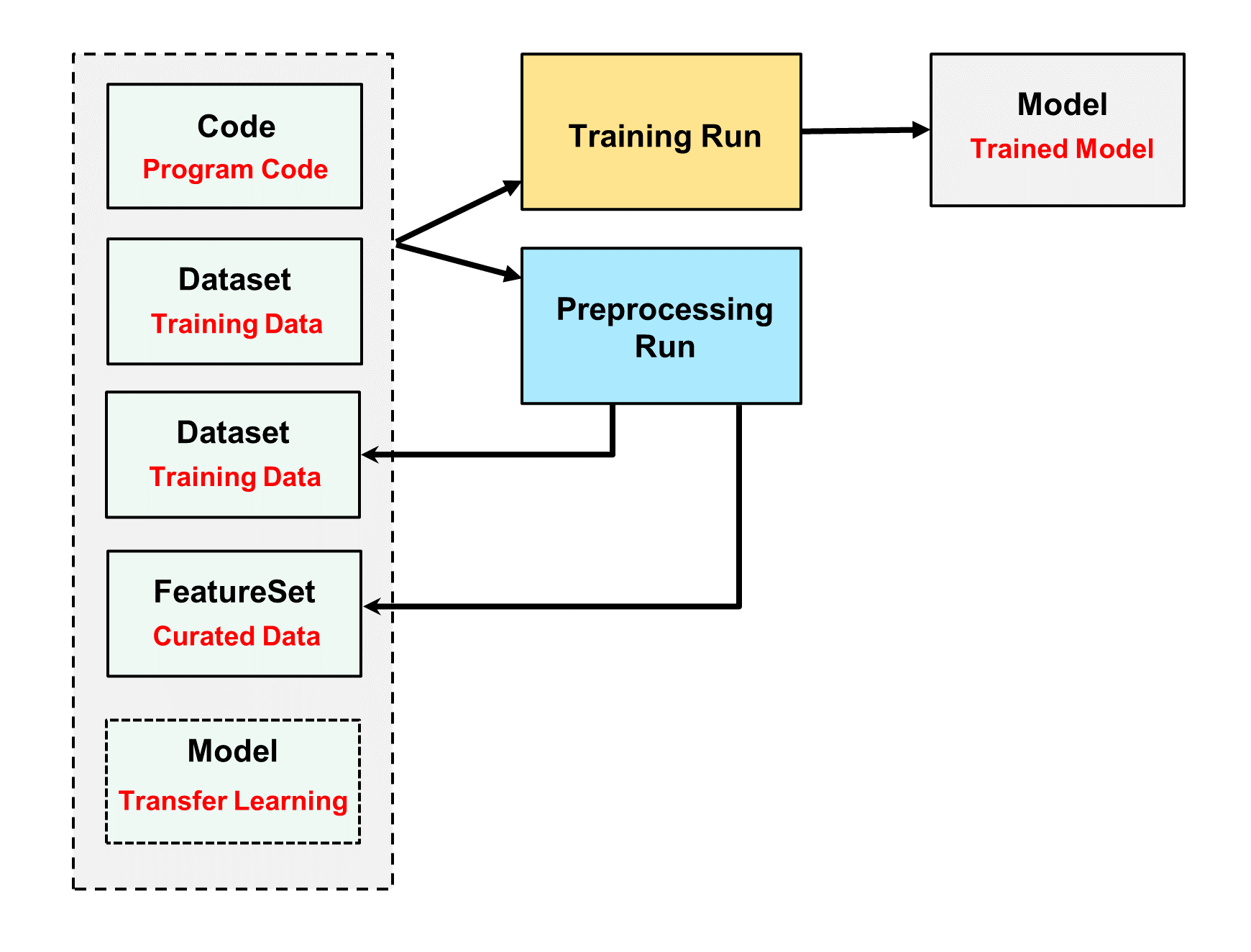

Projects |

Grouping of entities based on a name |

Code |

Directory containing program code for IDEs and Runs |

Datasets |

Directory containing training data for IDEs and Runs |

FeatureSets |

Extracted Datasets |

IDEs |

Experiment with different code, datasets, and hyperparameters |

Runs |

Formal execution of code |

Models |

Trained models, ready for deployment or transfer learning |

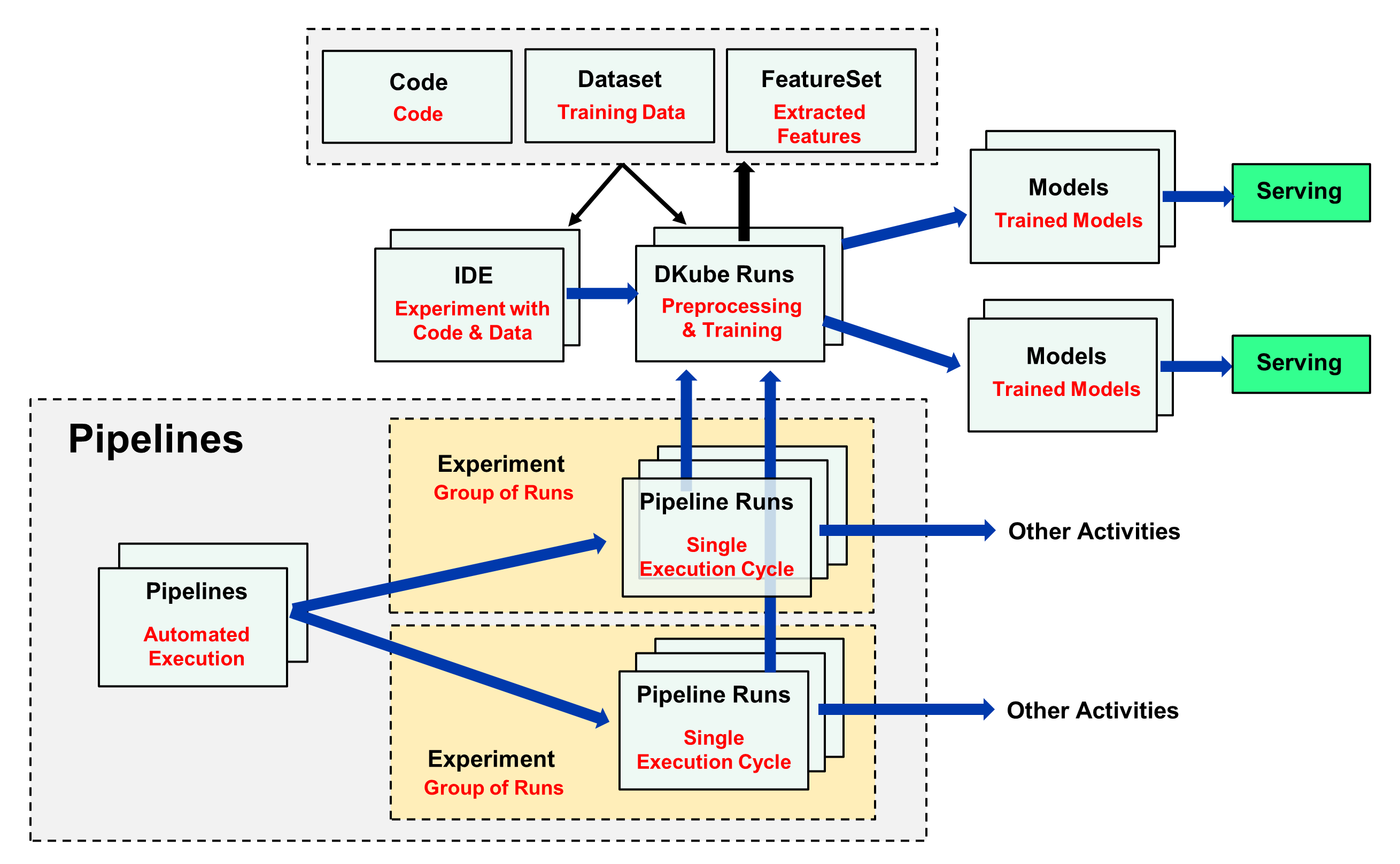

Pipeline Concepts¶

| Concept | Definition |
|---|---|
| Pipeline | Kubeflow Pipelines - Portable, visual approach to automated deep learning |
| Experiments | Aggregation of runs |
| Runs | Single cycle through a pipeline |

Note

The concepts of Pipelines are explained in section Kubeflow Pipelines

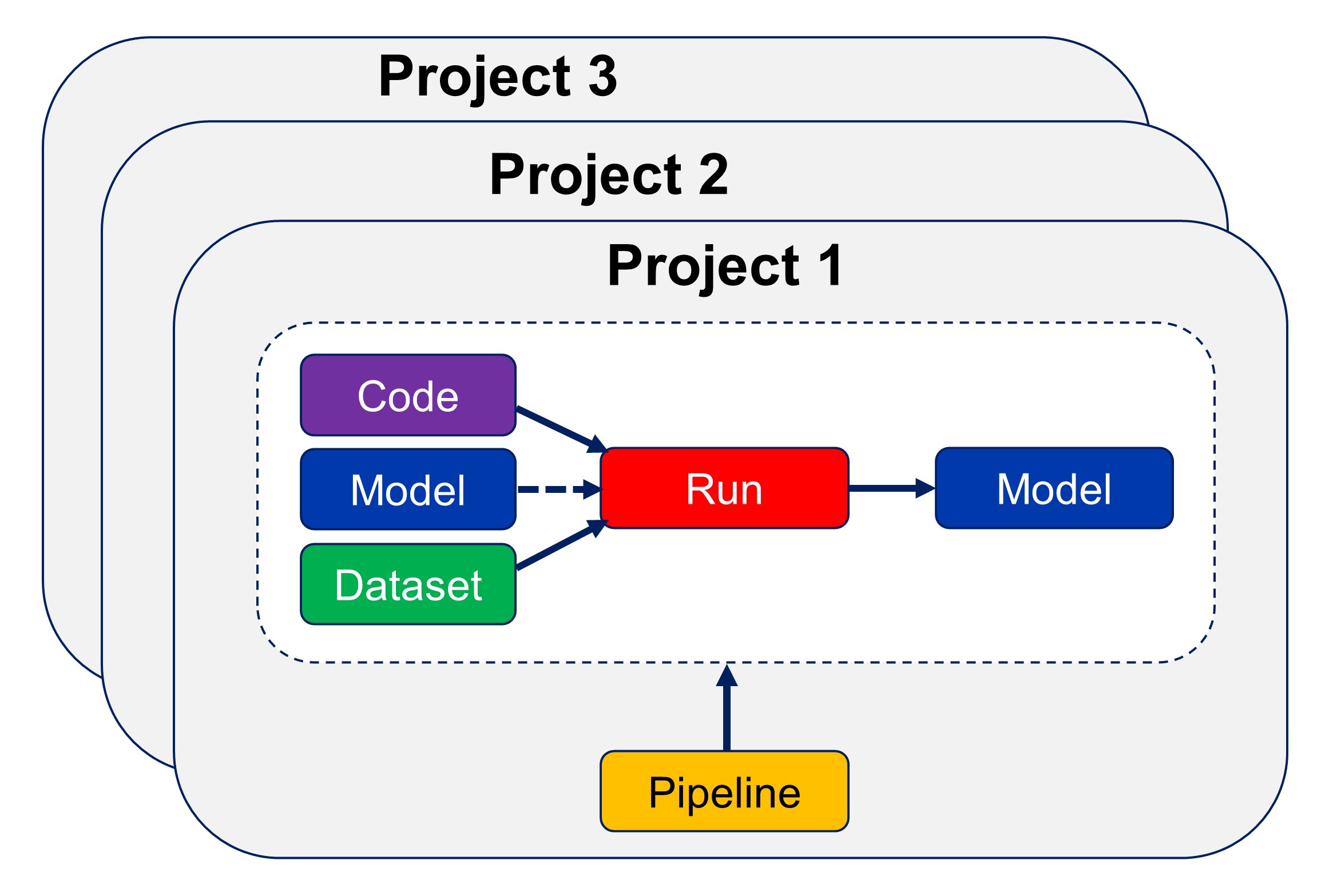

Projects¶

Projects allow the user to group entities into categories, and view them together. When a Project is selected, only the entities (Code, Datasets, Models, Runs, etc.) for that Project are shown. This is described in more detail at Projects.

Projects & Groups¶

A Project is associated with a specific Group when it is created (Groups are described at Operation of Groups). The Project and all of its associated entities (Code, Datasets, Runs, etc.) are shared with the other Users in the same Group.

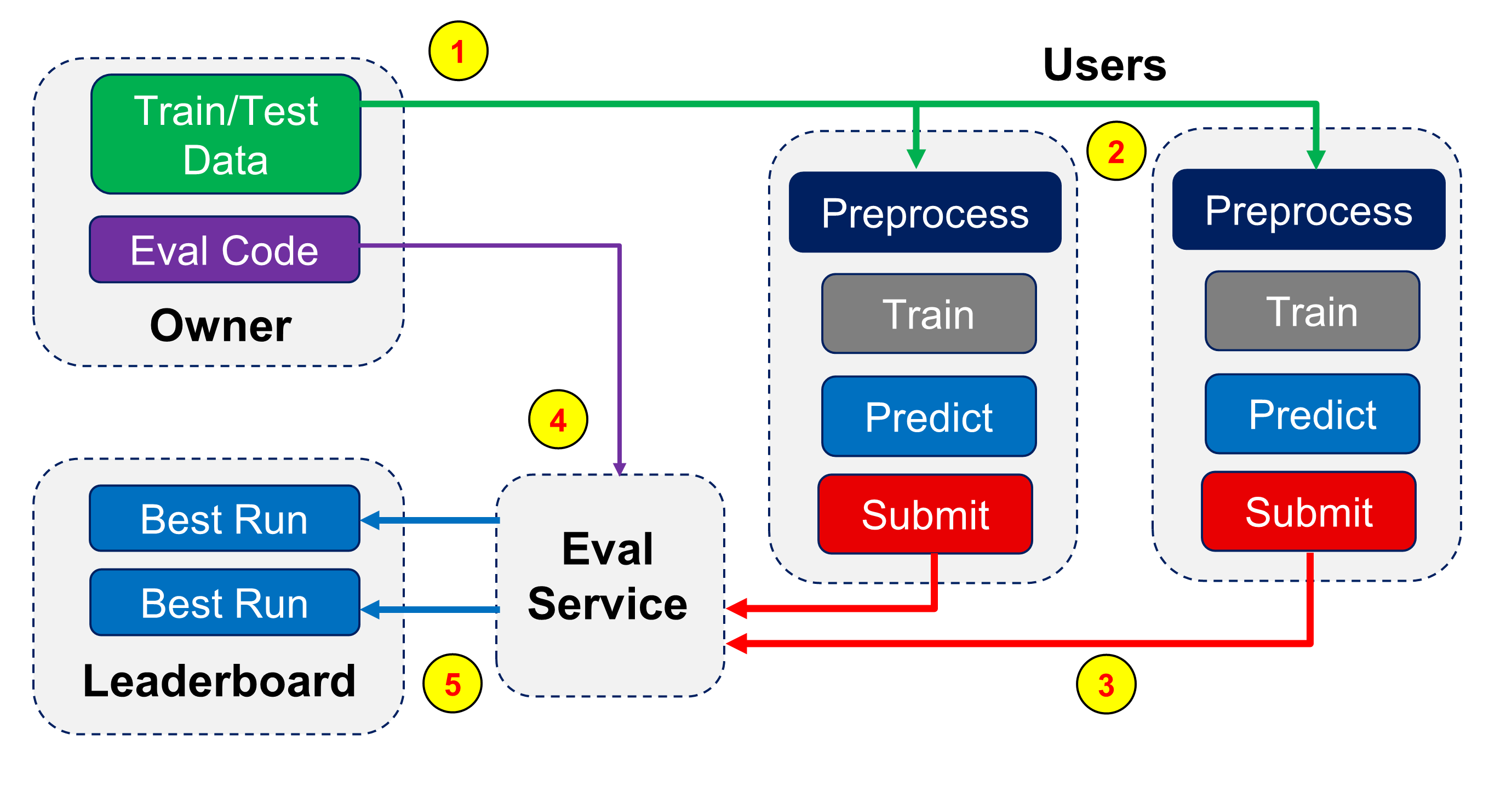

Leaderboard¶

Projects also allow different users to submit results to a leaderboard. The owner sets up the configuration and evaluation criteria for the Project, and the best results from each participating user are shown in a table. This is described in more detail at Leaderboard.

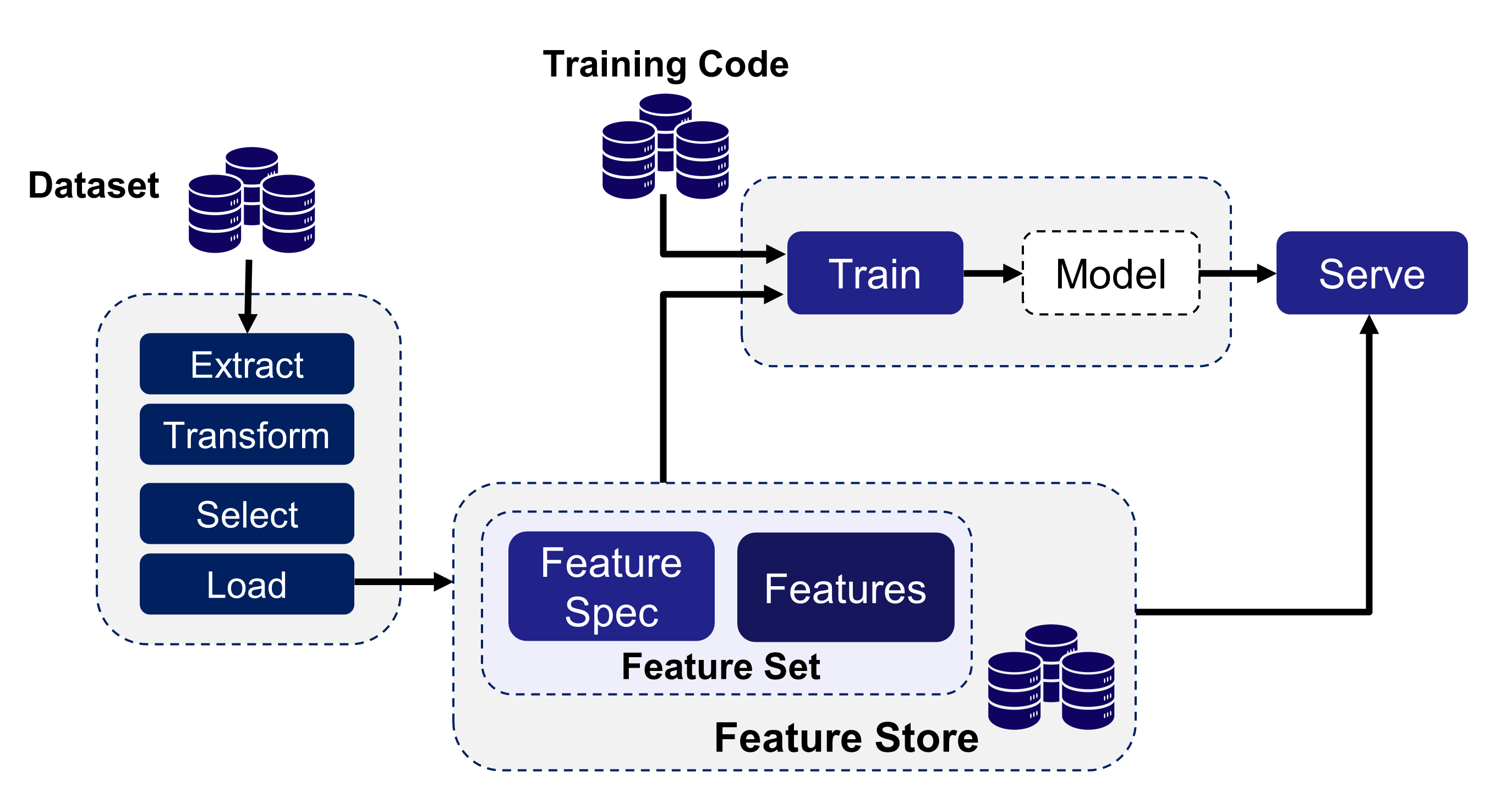

FeatureSets¶

The DKube FeatureSets capability supports feature engineering within the data science workflow. Features are extracted from raw data to improve the performance of the prediction. FeatureSets save the curated data for use in training. This is described in more detail at FeatureSets

Tags & Description¶

Most instances can have Tags associated with them, provided by the user when the instance is downloaded or created. Some instances, such as Runs, also have a Description field.

Tags and Descriptions are alphanumeric fields that become part of the instance. They have no impact on the instance within DKube, but they can be used to group entities together, or by a post-processing filter created by the Data Scientist, and they can store information about the instance such as release version or framework.

The fields can be edited after creation.

The Tag field can have as many as 256 characters.

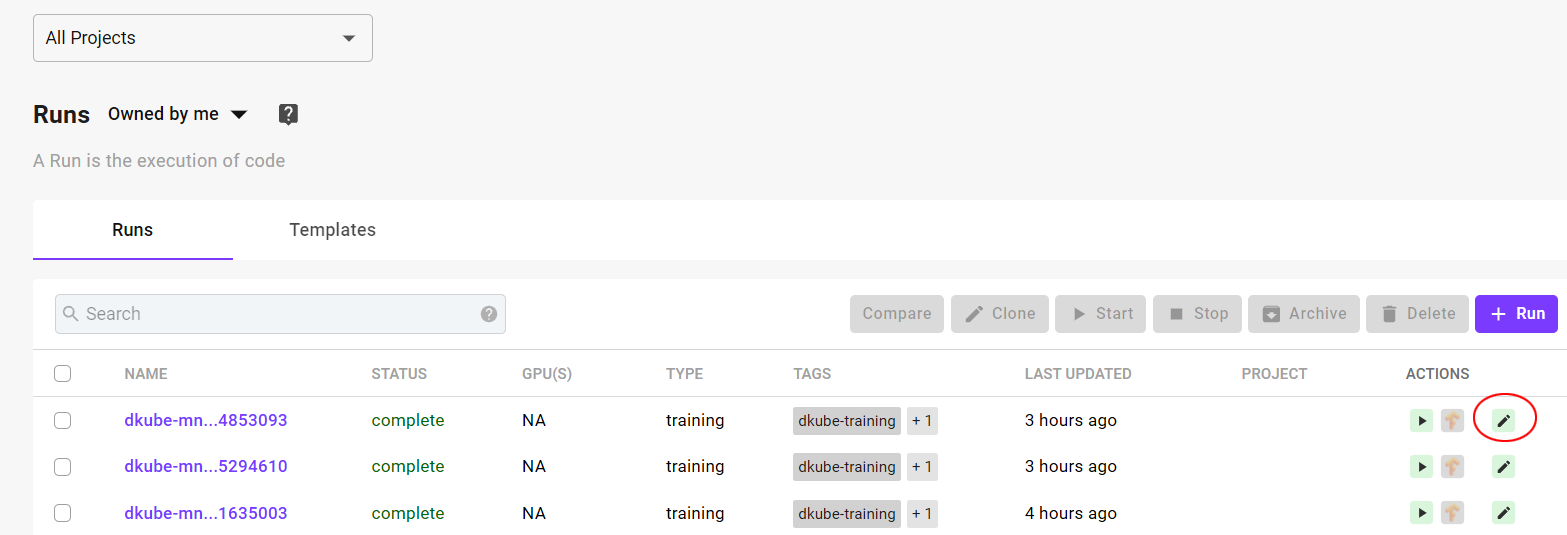

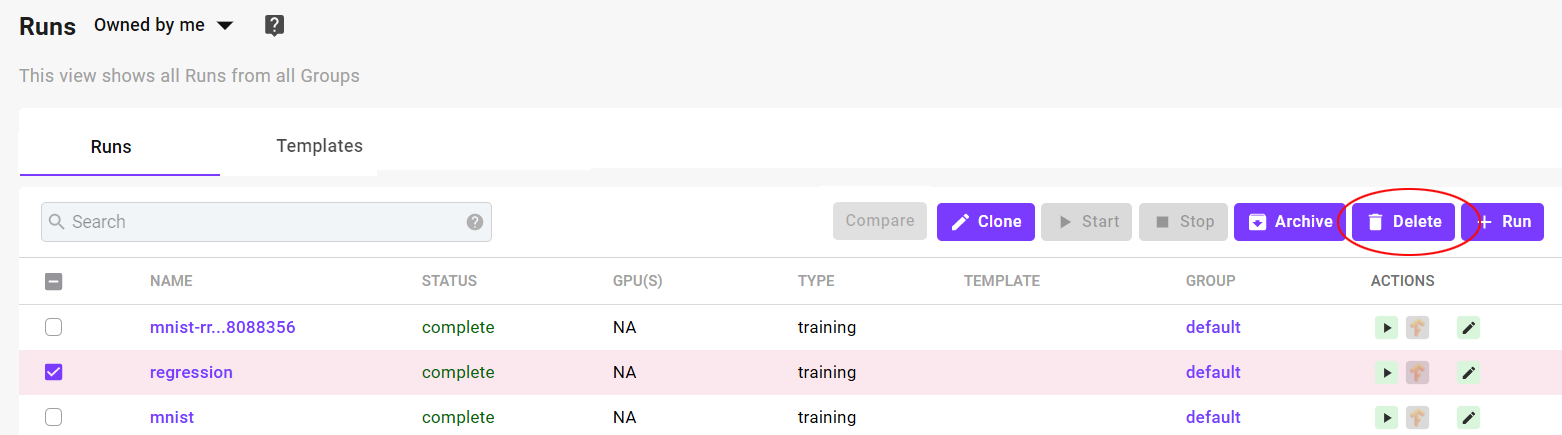

Delete and Archive¶

Entities within DKube (Repos, Runs, Models, etc.) can be removed from the main list in 2 different ways:

- Archive
- Delete

Note

Entities must not be running when they are archived or deleted.

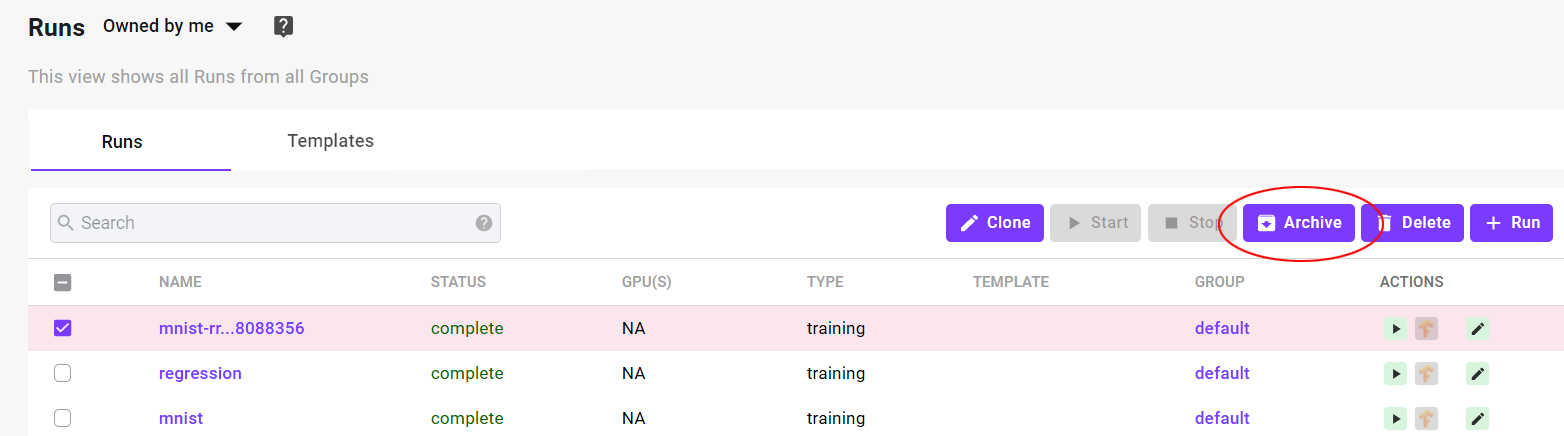

Archive¶

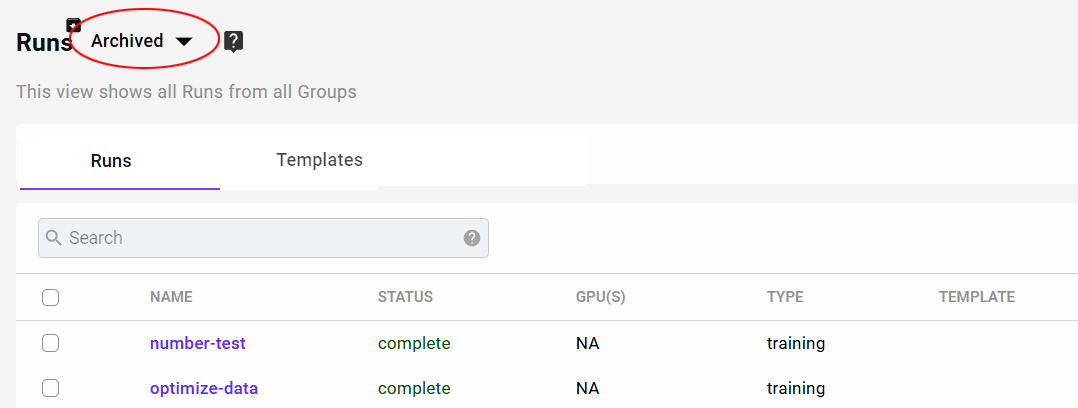

Archiving an entity places it into a special area without removing any of the data or metadata. It can be viewed by selecting the “Archived” menu item on the list screen.

Archived entities:

- Are fully functional, and can be cloned, compared, published, etc., and they will show up in lineage and usage diagrams
- Can be restored to the main area
- Will be part of a DKube backup
- Can be deleted, and will then be permanently removed from the DKube database

Delete¶

Deleting an entity removes it from the DKube storage.

Important

Deleting an entity permanently removes it from the DKube storage, and is non-recoverable

Run Scheduling¶

When a Run is submitted (see Runs), DKube determines whether there are enough available GPUs in the Pool associated with the shared Group. If there are enough GPUs, the Run is scheduled immediately.

If there are not currently enough GPUs available in the Pool, the Run will be queued waiting for enough GPUs to become available. As the currently executing Runs are completed, their GPUs are released back into the Pool, and as soon as there are sufficient GPUs the queued Run will start.

It is possible to instruct the scheduler to initiate a Run immediately, without regard to how many GPUs are available. This directive is provided by the user in the GPUs section when submitting the Run.
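The queueing behavior described above can be sketched with a small simulation. This is not DKube's scheduler: the `PoolScheduler` class is hypothetical, first-in-first-out ordering of queued Runs is an assumption (the text does not specify the order), and the option to start a Run immediately regardless of available GPUs is not modeled.

```python
from collections import deque


class PoolScheduler:
    """Toy model of GPU-based Run scheduling within a single Pool."""

    def __init__(self, total_gpus):
        self.free = total_gpus
        self.queue = deque()  # (run name, GPUs requested), oldest first
        self.running = {}     # run name -> GPUs held

    def submit(self, name, gpus):
        """Queue a Run, then start it right away if the Pool has room."""
        self.queue.append((name, gpus))
        self._dispatch()

    def complete(self, name):
        """Release the GPUs of a finished Run back into the Pool."""
        self.free += self.running.pop(name)
        self._dispatch()

    def _dispatch(self):
        # Assumption: strict FIFO. The Run at the head of the queue starts
        # as soon as enough GPUs are free; later Runs wait behind it.
        while self.queue and self.queue[0][1] <= self.free:
            name, gpus = self.queue.popleft()
            self.free -= gpus
            self.running[name] = gpus


scheduler = PoolScheduler(total_gpus=4)
scheduler.submit("run-a", 3)  # starts immediately, 1 GPU left
scheduler.submit("run-b", 2)  # queued: only 1 GPU is free
scheduler.complete("run-a")   # GPUs released, run-b starts
```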

Status Field of IDEs & Runs¶

The status field provides an indication of how the IDE or Run is progressing. The meaning of each status is provided here.

| Status | Description | Applies To |
|---|---|---|
| Queued | Initial state | All |
| Waiting for GPUs | Released from queue; waiting for GPUs | All |
| Starting | Resources available; Run is starting | All |
| Running | Run is active | All |
| Training | Training Run is running | Training Run |
| Complete | Run is complete; resources released | All |
| Error | Run failure | All |
| Stopping | Run in process of stopping | All |
| Stopped | Run stopped; resources released | All |

MLOps Concepts¶

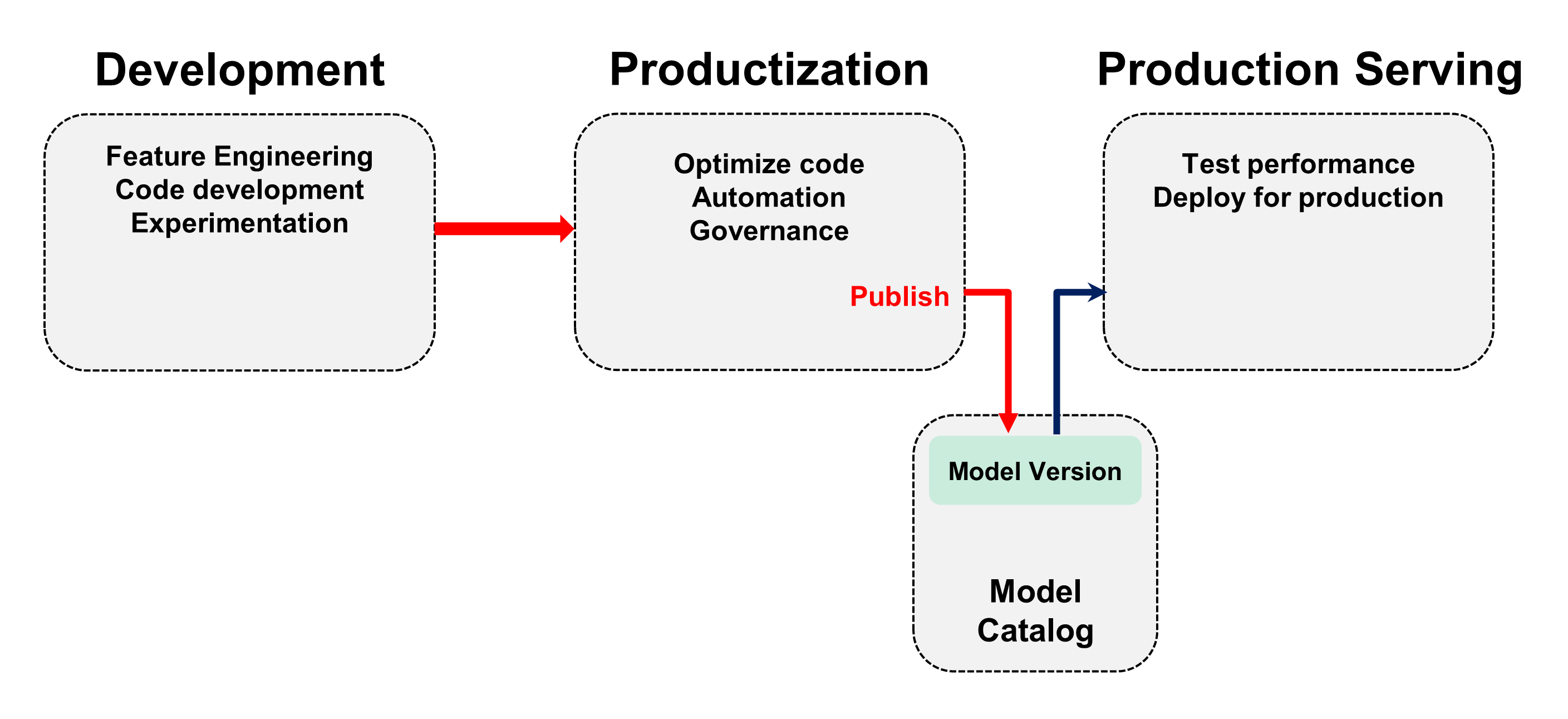

DKube supports a full MLOps workflow. Although the application is very flexible and can accommodate different workflows, the expected MLOps workflow is:

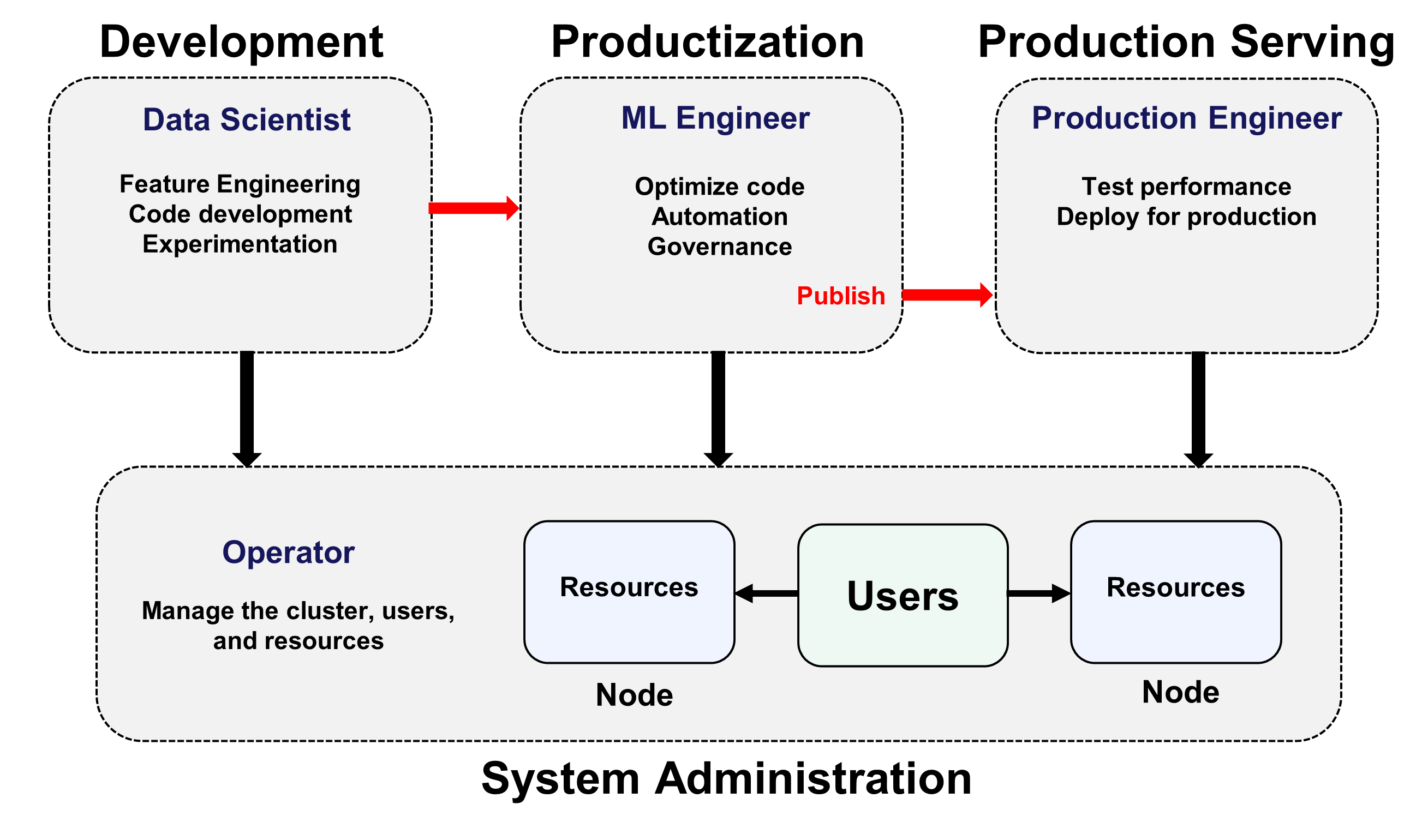

Code development and experimentation are performed by a Data Scientist. Many Models will be generated as the Data Scientist does basic development. The Model that is best suited to solving the problem is then released to the ML Engineer.

The ML Engineer takes that Model and prepares it for production. The ML Engineer will also be generating many Models during the optimization and productization phase of development. The resulting optimized Model is then Published to identify that it is ready for the Production Engineer to review and deploy.

The Published Model is added to the Model Catalog. The Model Catalog contains all of the Models that have been completed by the ML Engineer, and are candidates for deployment.

The release process provides the full context of the Model as described in Tracking and Lineage. This allows reproducibility, and lets the ML Engineer start with the existing Model and create more runs with different datasets, hyperparameters, and environments.

The details of this workflow are provided in the section Models.

Note

Roles can be combined in any way, so that a small organization can assign a user to be both a Data Scientist and an ML Engineer, or even all 3 roles. More formal organizations can split them up to provide structure. This assignment is done by the Operator.

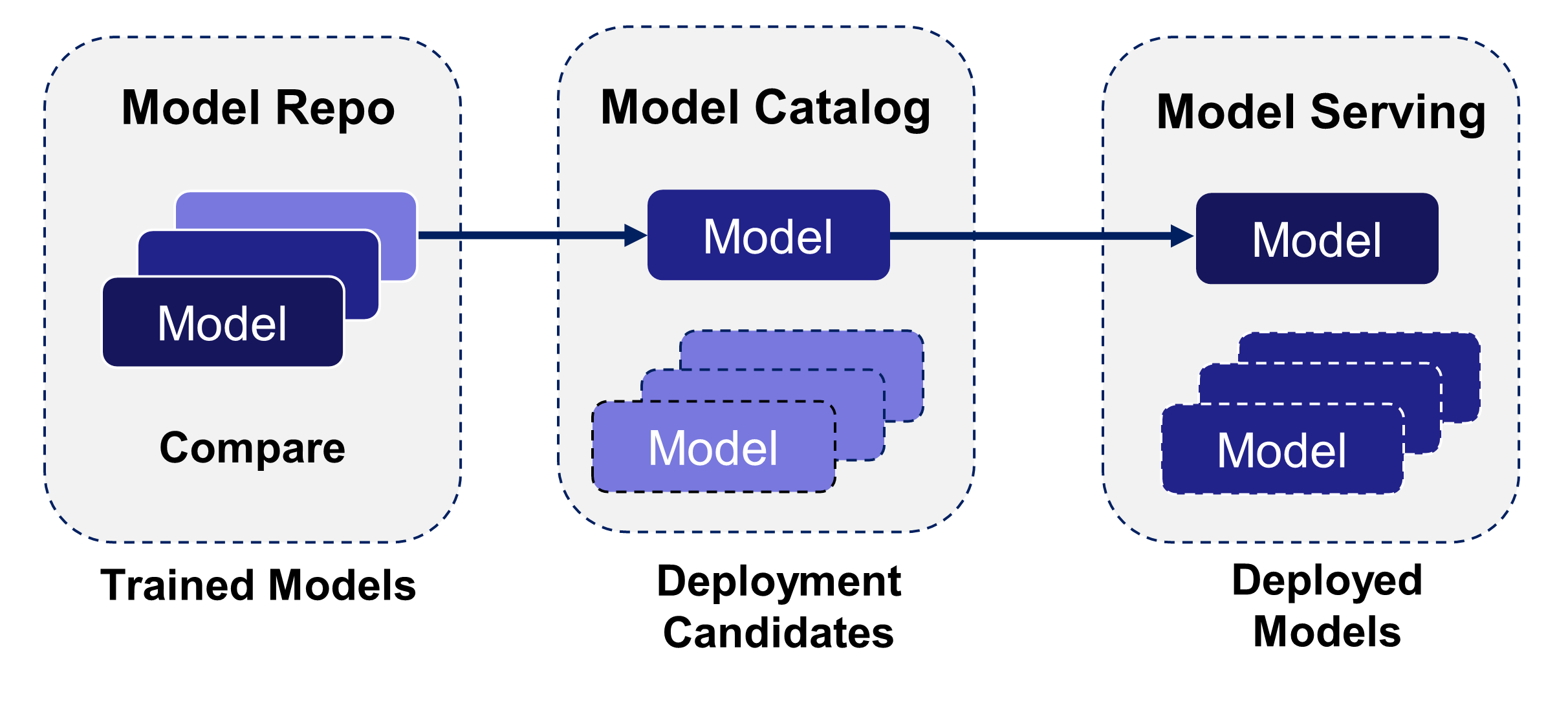

Model Stages¶

Trained Models go through a series of stages before being deployed for inference serving. Once served, they are run on the server in several different ways, depending upon the role and goals. In each case, once served, the model APIs are exposed so that an inference client can be used to manage the inference.

The Data Scientist and ML Engineer are responsible for developing and optimizing the models. Many models are trained before finding the few that best address the project goals. The models that are believed to best achieve those goals are Published to the Model Catalog. The Model Catalog contains the Models that are candidates for deployment.

The Production Engineer is responsible for testing and validating the published models, then deploying the best versions for live inference.

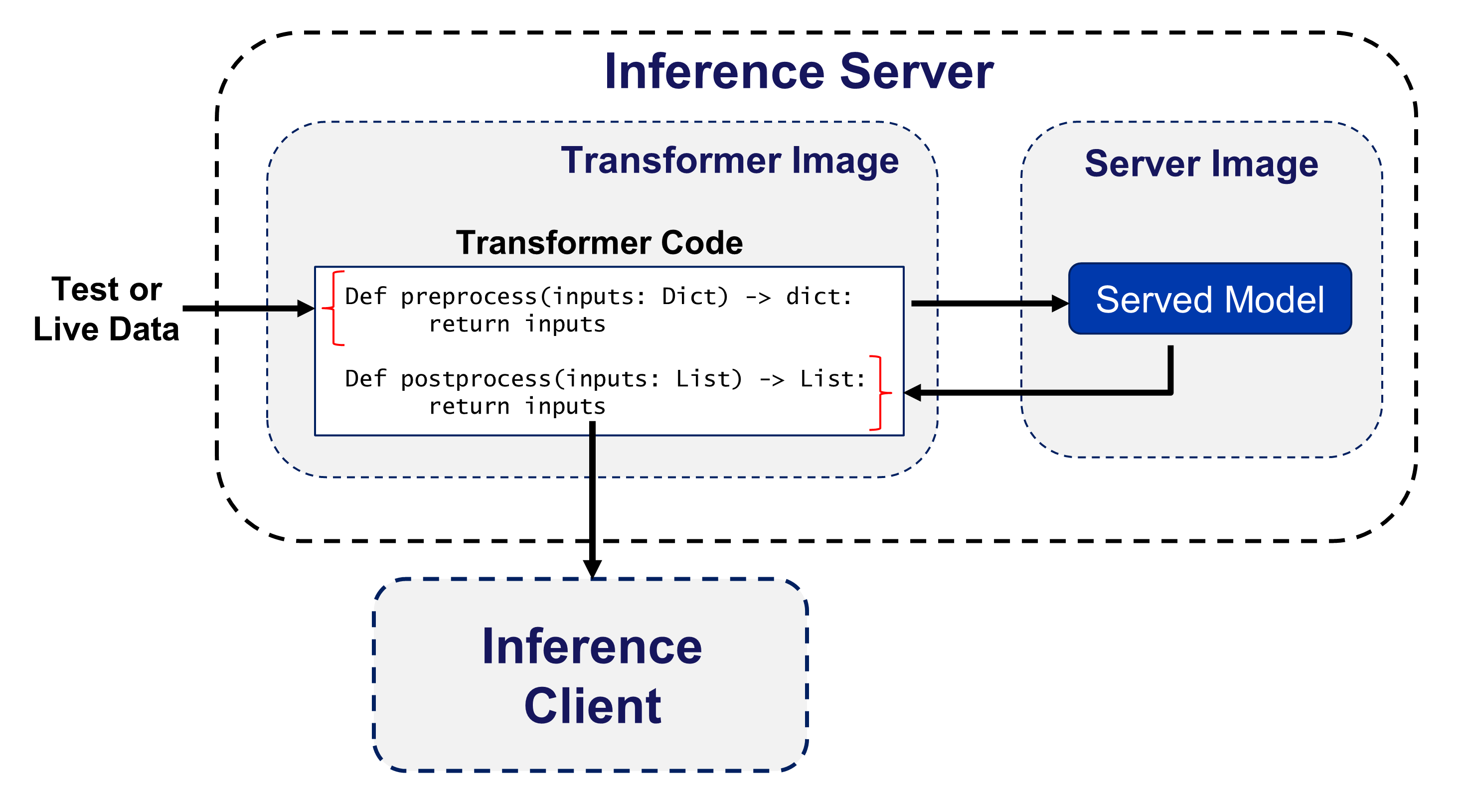

Model Serving Transformer¶

Model serving can optionally include a Transformer, which provides preprocessing and postprocessing to the inference serving.

1. The test or live data is preprocessed by the preprocess() function of the Transformer code
2. The preprocessed data is executed on the served Model
3. The output of the Model is postprocessed by the postprocess() function of the Transformer code
4. The output of the postprocessing is sent to the inference client
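The flow above can be sketched in plain Python. Only the `preprocess()` and `postprocess()` function names come from the text; the `Transformer` class shape, the `predict()` method, and `toy_model` are hypothetical stand-ins, and this is not the interface of DKube or of any serving framework.

```python
def toy_model(features):
    """Stand-in for a served Model: the score is the mean of the features."""
    return sum(features) / len(features)


class Transformer:
    """Wraps a model call with preprocessing and postprocessing steps."""

    def __init__(self, model):
        self.model = model

    def preprocess(self, raw):
        # Scale raw 0-255 pixel values into the 0-1 range the model expects.
        return [value / 255.0 for value in raw]

    def postprocess(self, score):
        # Turn the raw model output into a response for the inference client.
        label = "bright" if score >= 0.5 else "dark"
        return {"score": score, "label": label}

    def predict(self, raw):
        # preprocess -> served Model -> postprocess -> inference client
        return self.postprocess(self.model(self.preprocess(raw)))


response = Transformer(toy_model).predict([255, 255, 0, 0])
```

Keeping the two hooks separate from the model call means the same served Model can be reused with different input formats by swapping only the Transformer.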

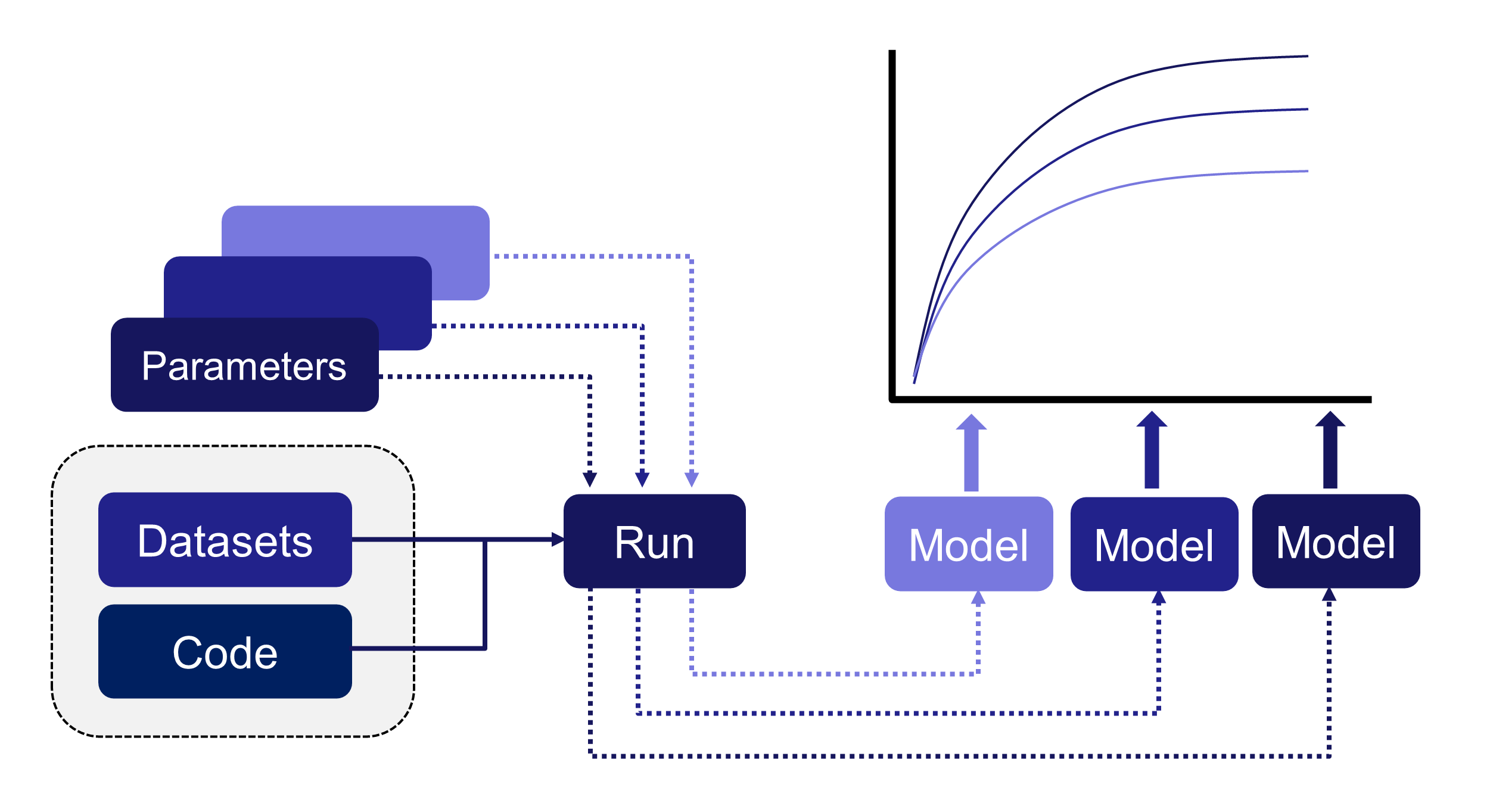

Comparing Models¶

As part of the standard model delivery workflow, Data Scientists and ML Engineers need to be able to compare several models to understand how the key metrics trend. This is described in the section Compare Models

Tracking and Lineage¶

When working with large numbers of complex models, it is important to be able to understand how different inputs lead to corresponding outputs. This is always valuable, since the user might want to go back to a previous Run and either reproduce it or make modifications from that base. In certain markets it is also mandatory for regulatory or governance reasons.

Run, Model, and Dataset Lineage¶

DKube tracks the entire path for every Run and Model, and for each Dataset that is created from a Preprocessing Run. This path, called Lineage, is saved for later viewing or usage. Lineage is available from the detailed screens for Runs, Models, and Datasets. Run lineage is described in the section Lineage.

Dataset Usage¶

DKube keeps track of where each version of each Dataset is used, and shows them in the detailed screens for the Dataset version. This can be used to determine if the right distribution of Datasets is being implemented.

Versioning¶

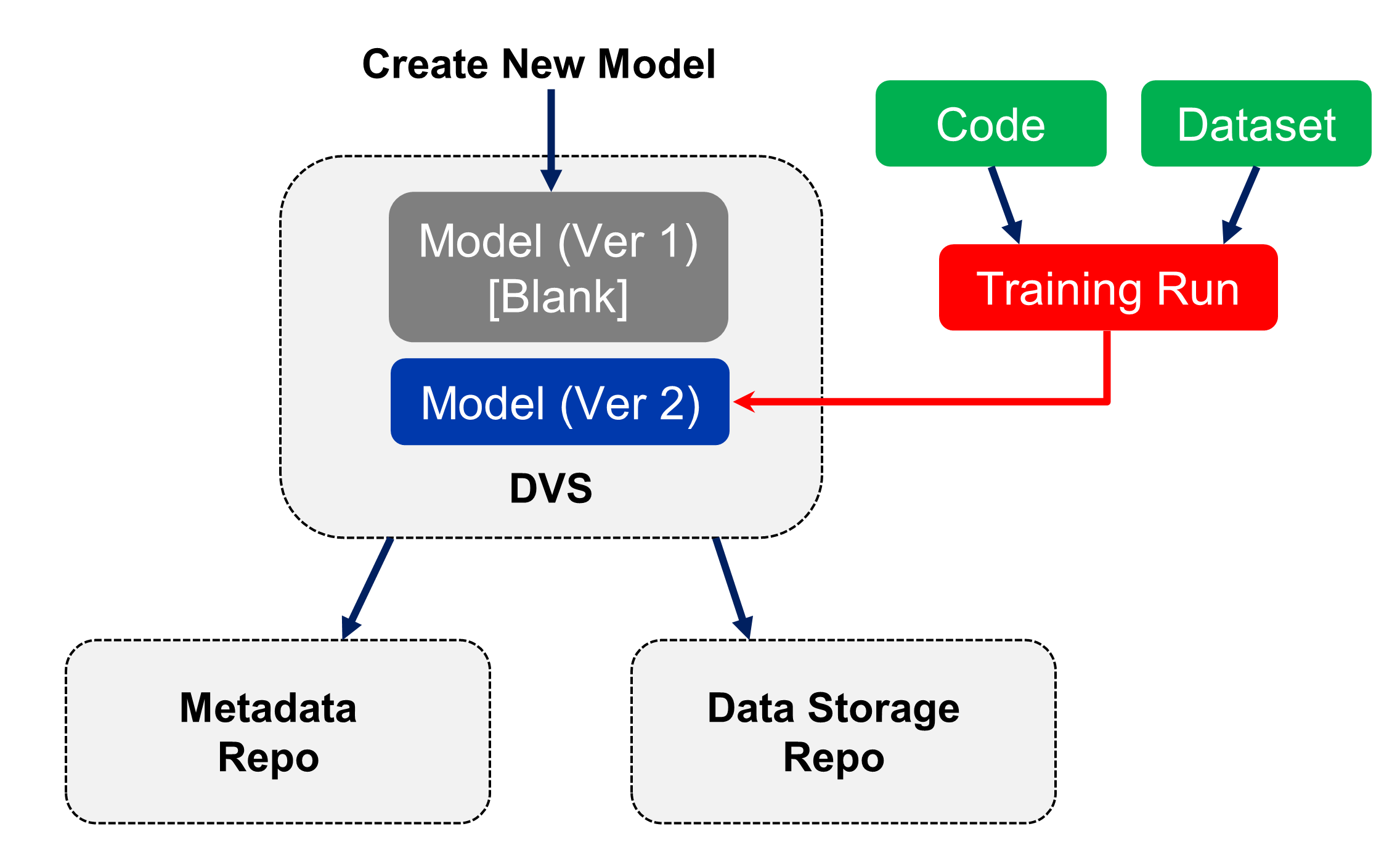

Datasets and Models are under version control within DKube. The version control is part of the workflow and UI.

In order to set up the version control system within DKube, the versioned repository must first be created. This is explained at DVS.

The metadata information (including the version information) and the data storage repo are set up. The location for the data is specified, and the repo is given a name. This will create version 1 of the entity (Dataset or Model).

The DVS repo name is used when creating a Dataset or Model, as explained in section Repos

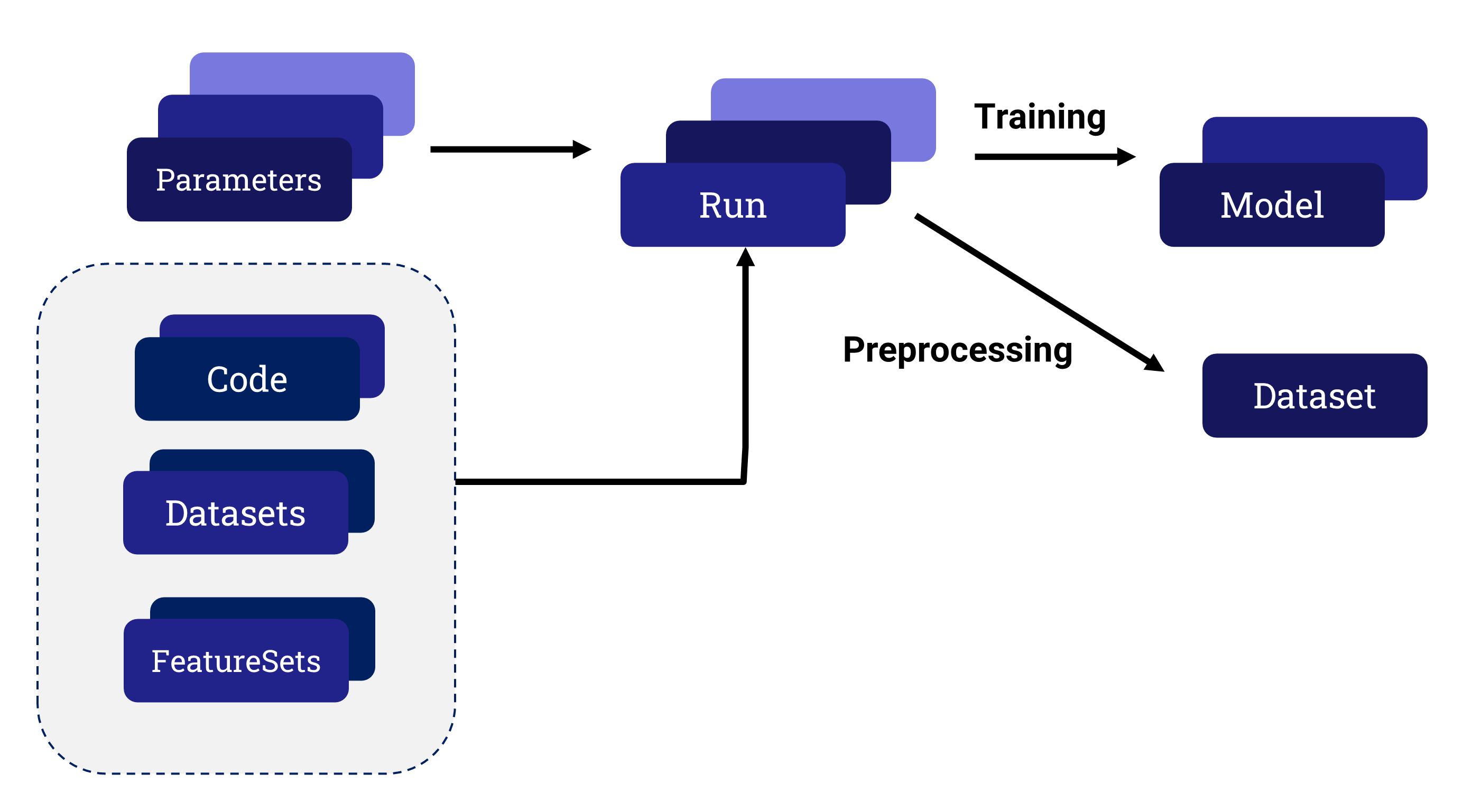

When a Run is executed:

- A new version of a Model is created by a Training Run
- A new version of a Dataset is created by a Preprocessing Run

The version system will automatically create a new version of the Model or Dataset, incrementing the version number after each successful Run.
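The versioning rule can be sketched as a counter that advances only on success. The `VersionedEntity` class and `record_run()` method are hypothetical names used for illustration; they are not part of DKube or DVS, and the sketch tracks version numbers only, not the stored data.

```python
class VersionedEntity:
    """Toy model of a versioned Dataset or Model."""

    def __init__(self, name):
        self.name = name
        # Setting up the versioned repo creates version 1 of the entity.
        self.versions = [1]

    def record_run(self, succeeded):
        """Add a new version after a successful Run; return the latest version."""
        if succeeded:
            self.versions.append(self.versions[-1] + 1)
        return self.versions[-1]


model = VersionedEntity("mnist-model")
model.record_run(succeeded=True)   # creates version 2
model.record_run(succeeded=False)  # failed Run: still version 2
```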

The versions of the Model or Dataset can be viewed by selecting the detailed screen for that entity. The lineage and usage screens identify which version of the Model or Dataset is part of the Run.

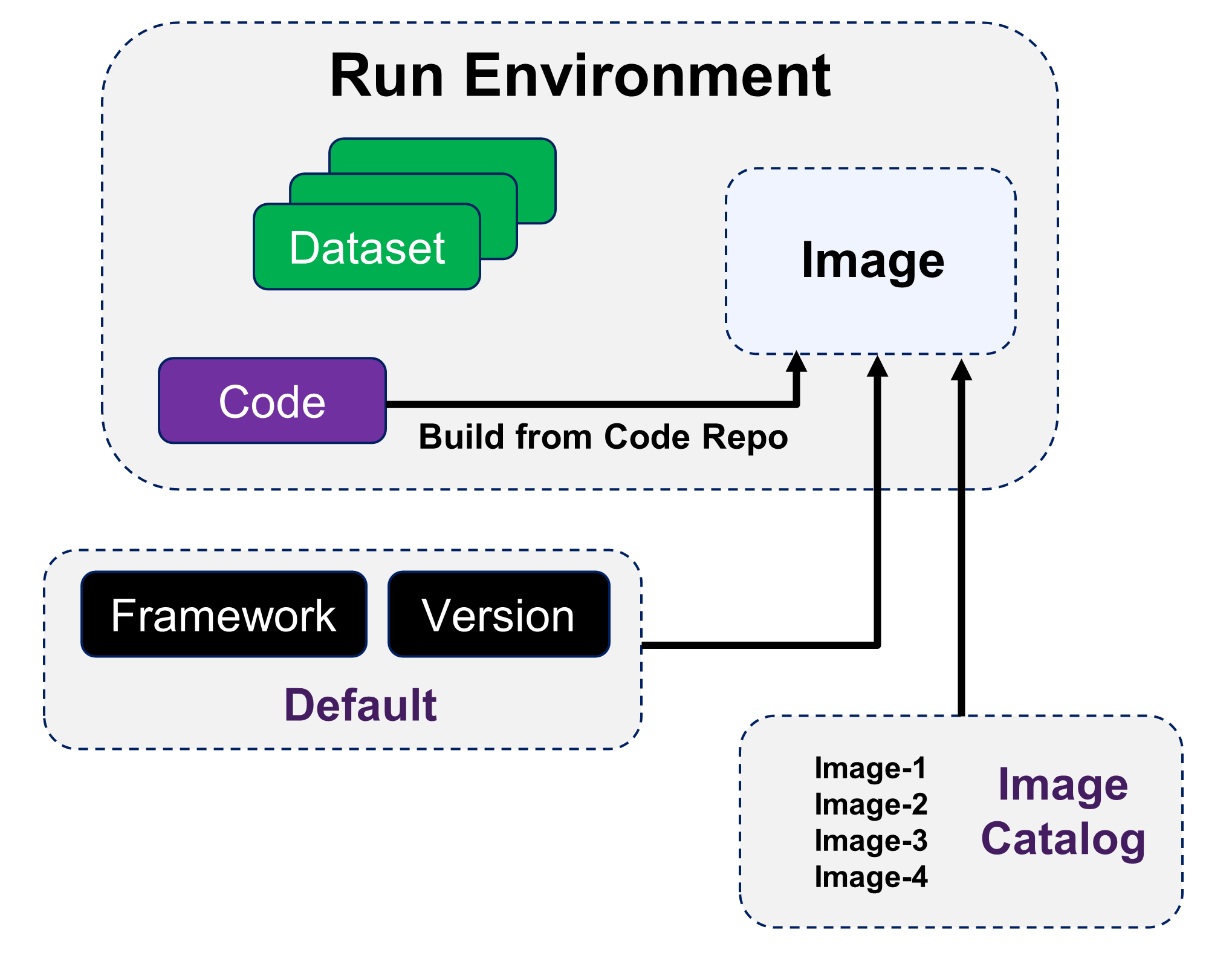

Custom Container Images¶

DKube Jobs run within container images. The image is selected when the Job is created. The image can be from several sources:

- DKube provides standard images based on the framework, version, and environment
- An image can be created from the code repo when the Job is executed (see Build From Code Repo)
- A user-generated catalog of pre-built images can be used for the Job (see Images)

Building custom images is explained at Custom Container Images.

Hyperparameter Optimization¶

DKube implements Katib-based hyperparameter optimization. This enables automated tuning of hyperparameters for a Run, based upon target objectives.

This is described in more detail at Katib Introduction.

Katib Within DKube¶

The section Hyperparameter Optimization provides the details on how to use this feature within DKube.

Kubeflow Pipelines¶

Support for Kubeflow Pipelines has been integrated into DKube. Pipelines facilitate portable, automated, structured machine learning workflows based on Docker containers.

The Kubeflow Pipelines platform consists of:

- A user interface (UI) for managing and tracking experiments and runs
- An engine for scheduling multi-step machine learning workflows
- An SDK for defining and manipulating pipelines and components
- Notebooks for interacting with the system using the SDK

An overall description of Kubeflow Pipelines is provided below. The reference documentation is available at Pipelines Reference.

Pipeline Definition¶

A pipeline is a description of a machine learning workflow, including all of the components in the workflow and how they combine in the form of a graph. The pipeline includes the definition of the inputs (parameters) required to run the pipeline and the inputs and outputs of each component.
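The idea of a pipeline as a graph of components can be sketched with the Python standard library. This does not use the Kubeflow Pipelines SDK; the component names are hypothetical, and `graphlib` requires Python 3.9 or later.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each key is a component, and each value is the
# set of components whose outputs it consumes.
PIPELINE = {
    "preprocess": set(),
    "train": {"preprocess"},
    "evaluate": {"train"},
    "export": {"train"},
}


def execution_order(pipeline):
    """Return one valid order in which the components can run."""
    return list(TopologicalSorter(pipeline).static_order())


order = execution_order(PIPELINE)
```

Any order returned respects the edges of the graph: `preprocess` runs before `train`, and `train` runs before both `evaluate` and `export`. The relative order of `evaluate` and `export` is not fixed, since neither depends on the other.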

After developing your pipeline, you can upload and share it through the Kubeflow Pipelines UI.

The following provides a summary of the Pipelines terminology.

| Term | Definition |
|---|---|
| Pipeline | Graphical description of the workflow |
| Component | Self-contained set of code that performs one step in the workflow |
| Graph | Pictorial representation of the run-time execution |
| Experiment | Aggregation of Runs, used to try different configurations of your pipeline |
| Run | Single execution of a pipeline |
| Recurring Run | Repeatable run of a pipeline |
| Run Trigger | Flag that tells the system when a recurring run spawns a new run |
| Step | Execution of a single component in the pipeline |
| Output Artifact | Output emitted by a pipeline component |

Pipeline Component¶

A pipeline component is a self-contained set of user code, packaged as a Docker image, that performs one step in the pipeline. For example, a component can be responsible for data preprocessing, data transformation, model training, etc.

The component contains:

| Term | Definition |
|---|---|
| Client Code | The code that talks to endpoints to submit Runs |
| Runtime Code | The code that does the actual Run and usually runs in the cluster |

A component specification is in YAML format, and describes the component for the Kubeflow Pipelines system. A component definition has the following parts:

| Term | Definition |
|---|---|
| Metadata | Name, description, etc. |
| Interface | Input/output specifications (type, default values, etc.) |
| Implementation | A specification of how to run the component given a set of argument values for the component’s inputs. The implementation section also describes how to get the output values from the component once the component has finished running. |

You must package your component as a Docker image. Components represent a specific program or entry point inside a container.

Each component in a pipeline executes independently. The components do not run in the same process and cannot directly share in-memory data. You must serialize (to strings or files) all the data pieces that you pass between the components so that the data can travel over the distributed network. You must then deserialize the data for use in the downstream component.
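The serialize-then-deserialize handoff can be sketched with two functions that communicate only through a file. This is a local illustration, not Kubeflow Pipelines code: the function names, the record layout, and the use of JSON and a temporary directory are all assumptions made for the example. In a real pipeline each step would run in its own container and the file would live on shared storage.

```python
import json
import tempfile
from pathlib import Path


def producer(path: Path) -> None:
    """Upstream step: serialize its output to a file."""
    records = [{"x": 1.0, "y": 0}, {"x": 2.5, "y": 1}]
    path.write_text(json.dumps(records))


def consumer(path: Path) -> float:
    """Downstream step: deserialize the file and compute the mean of x."""
    records = json.loads(path.read_text())
    return sum(record["x"] for record in records) / len(records)


def run_pipeline() -> float:
    # The two steps share no in-memory state; the file is the only link.
    with tempfile.TemporaryDirectory() as workdir:
        handoff = Path(workdir) / "records.json"
        producer(handoff)
        return consumer(handoff)


mean_x = run_pipeline()
```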

Kubeflow Pipelines Within DKube¶

The section Kubeflow Pipelines provides the details on how this capability is implemented in DKube. One Convergence provides templates and examples for pipeline creation, described at Kubeflow Pipelines Template.

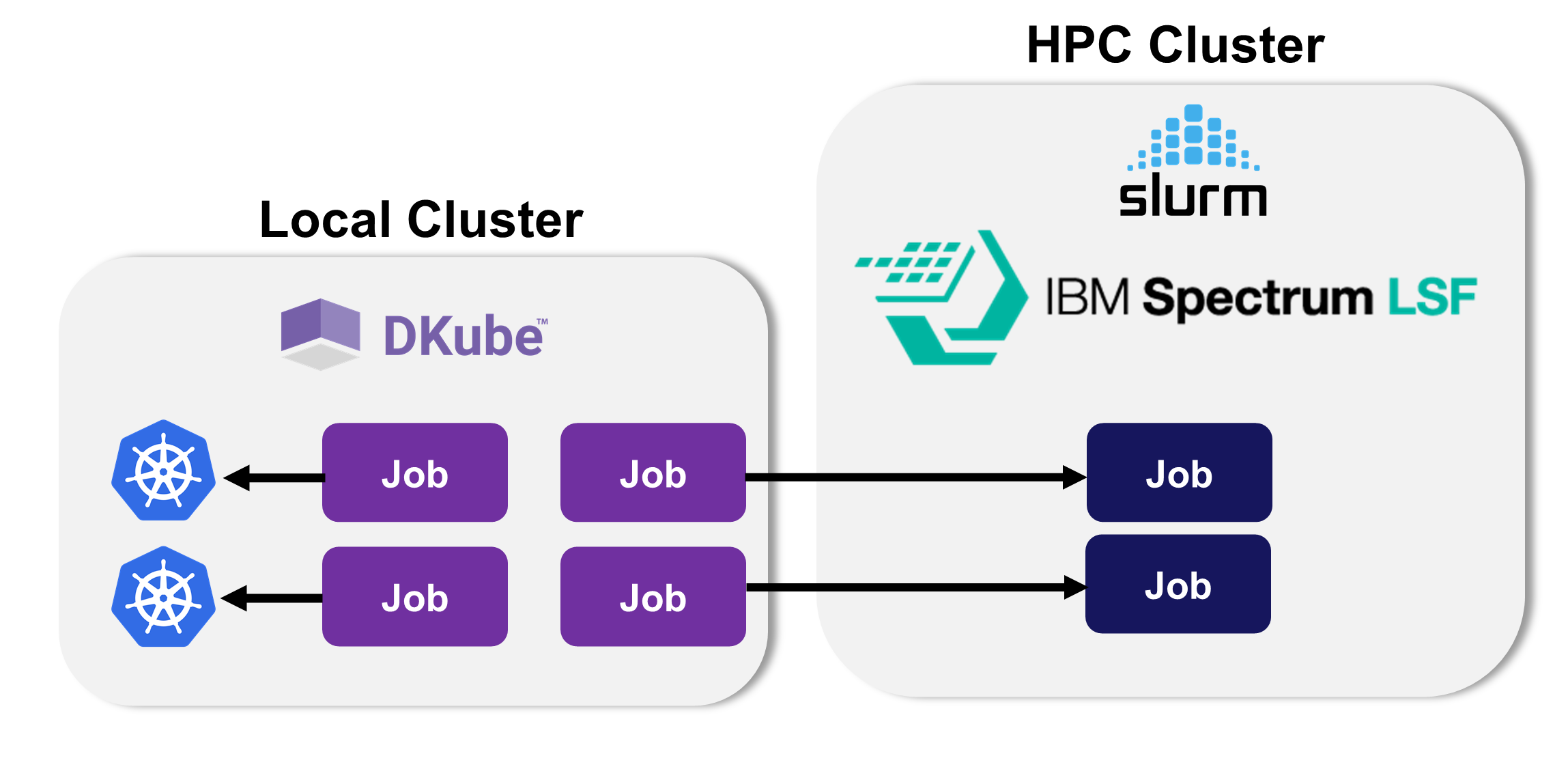

Multicluster Execution¶

DKube provides the ability to execute jobs on the local Kubernetes cluster, or it can send them to another cluster for execution. A remote cluster is first added to the DKube cluster database. Then, a Job can be submitted to the remote cluster, with DKube taking care of any necessary translation through a plug-in.

The Job metadata is kept on the local cluster so that the MLOps workflow (tracking, lineage, etc.) is fully available for the remote execution. This is described in more detail at Multicluster Operation.

The basic flow for adding and using an external cluster is:

1. Set up the external cluster as described at Multicluster Management
2. Log into the cluster, if required, as described at Multicluster Operation
3. Submit the job to the remote cluster as described at Configuration Submission Screen